AI agents are fundamentally different from chatbots. A chatbot waits for user input and responds. An agent pursues goals autonomously, calling tools, reasoning about problems, and taking actions without human input each step.

This distinction matters because agents can automate entire workflows. A lead qualification agent scores prospects, enriches their data, and assigns them to sales reps—all without human intervention. A content triage agent categorizes support tickets, routes them to specialists, and escalates edge cases to humans.

In this guide, you’ll learn how to architect reliable agents, integrate them with business systems, prevent common failures, and measure their impact. We’ll cover real patterns used in production at companies automating lead qualification, document processing, and customer support at scale.

What Are AI Agents & How Do They Differ from Chatbots?

Definition of AI Agents (Autonomous Systems That Perceive, Decide, Act)

An AI agent is a software system that:

- Perceives its environment (reads input, tool results, memory)

- Reasons about the best action (uses an LLM to plan)

- Acts by calling tools or taking steps toward a goal

- Adapts based on feedback and results

Agents are goal-driven. You define the objective (“Score and qualify this lead”), and the agent figures out how to achieve it.

Key Distinction: Chatbots Are Reactive; Agents Are Autonomous

Chatbots: User Initiates → Model Responds

User: "What's the status of my order?"

Chatbot: [Looks up order, responds]

User: "Can you cancel it?"

Chatbot: [Cancels order, responds]

The user drives every interaction. The chatbot may carry conversation context between turns (it needs to know which order “it” refers to), but it acts only when a message arrives.

Agents: Goal-Driven, Take Actions Without User Input Each Step

Agent goal: "Qualify and score this lead"

1. Agent observes: [Lead data from CRM]

2. Agent reasons: "I need to enrich this data and score them"

3. Agent acts: Calls enrichment API

4. Agent observes: [Enriched data]

5. Agent reasons: "Score is 85, should assign to top sales rep"

6. Agent acts: Updates CRM, sends notification

7. Done. No human input required.

The agent works toward a defined goal, making multiple decisions and tool calls autonomously.

Why Agents Matter for Workflows

Automation at Scale (Handle 1,000s of Tasks Without Human Intervention)

Manual lead qualification: 5 minutes per lead × 6,000 leads = 500 hours/month. Cost: $10,000/month (at $20/hour).

Agent-driven: 10 seconds per lead × 6,000 leads ≈ 17 hours/month of unattended processing. Cost: about $180 in agent API calls (at $0.03 per lead). Savings: roughly 98% before human review and infrastructure costs.

Agents multiply your team’s capacity without hiring.

Multi-Step Reasoning (Break Complex Problems Into Sub-Tasks)

Complex tasks require multiple steps:

- Lead qualification: Enrich → Score → Assign → Notify

- Document triage: Extract → Classify → Route → Archive

- Customer support: Understand → Search knowledge base → Generate response → Route if needed

Agents handle this reasoning automatically. You define the goal; the agent breaks it into steps.

Tool Use (Agents Call APIs, Databases, External Services)

Tools are an agent’s “hands.” Agents call APIs to:

- Query databases

- Update CRM systems

- Send emails or Slack messages

- Call third-party services (data enrichment, payment processing)

A single agent can orchestrate 5-10 tool calls to complete a workflow.

Adaptive Behavior (Learn From Feedback, Adjust Approach)

Agents can improve over time, but not on their own. If an agent misclassifies documents, you record the corrections and feed them back as revised prompts, few-shot examples, or retraining data for the underlying model.

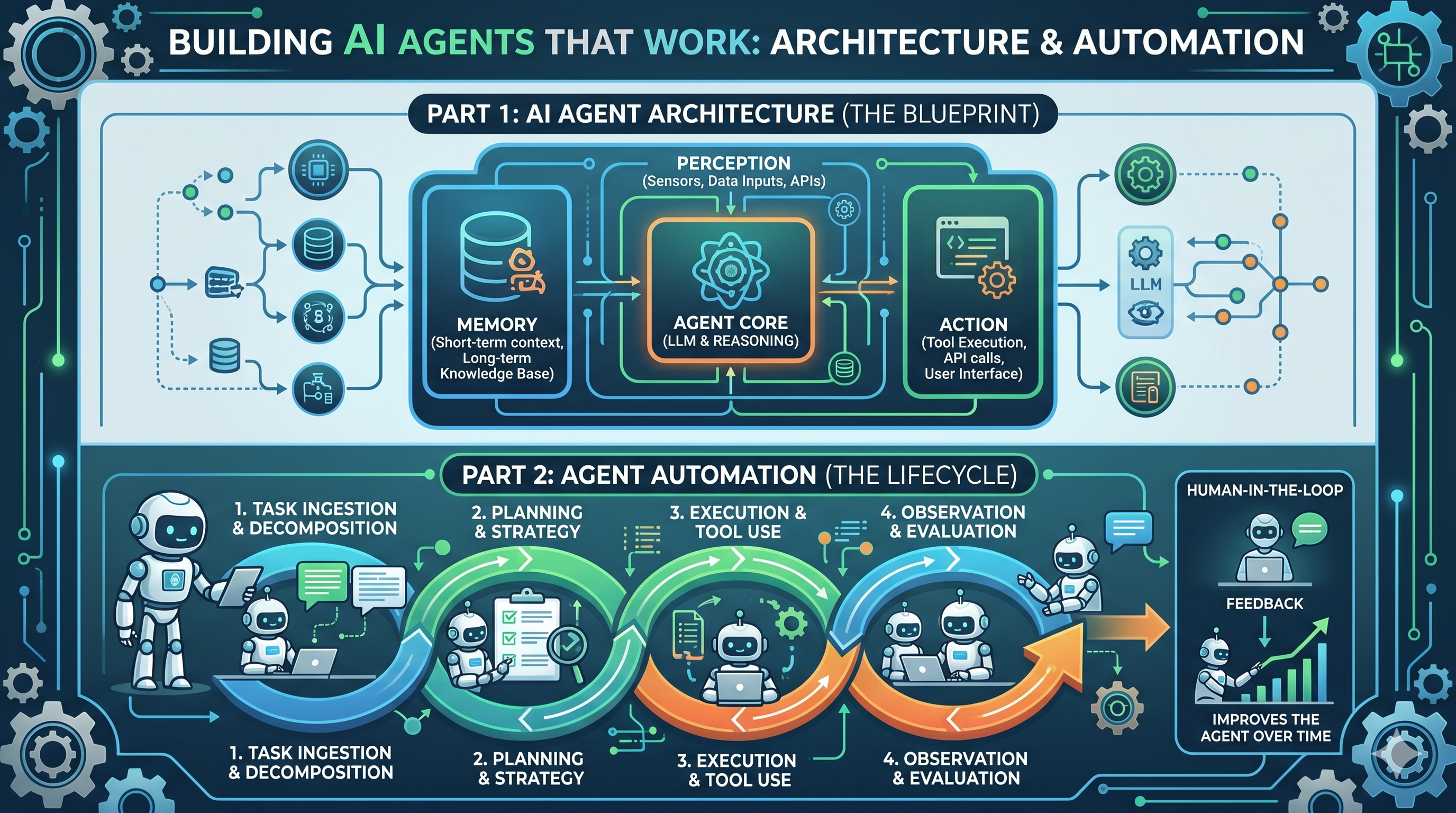

Core Components of an AI Agent (The Agent Loop)

The Agent Reasoning Loop (With Diagram Description)

The core of every agent is a loop:

┌─────────────────────────────────────────┐
│ START: Agent receives goal              │
└────────────────┬────────────────────────┘
                 │
                 ▼
┌─────────────────────────────────────────┐
│ OBSERVE: Read input, tool results,      │
│          memory, environment            │
└────────────────┬────────────────────────┘
                 │
                 ▼
┌─────────────────────────────────────────┐
│ REASON: LLM decides next action         │
│         (which tool to call, or done?)  │
└────────────────┬────────────────────────┘
                 │
                 ▼
┌─────────────────────────────────────────┐
│ ACT: Execute tool call or complete      │
│      task                               │
└────────────────┬────────────────────────┘
                 │
                 ▼
┌─────────────────────────────────────────┐
│ FEEDBACK: Evaluate result, update       │
│           memory, check if goal met     │
└────────────────┬────────────────────────┘
                 │
                 ├─→ Goal not met? Loop back to OBSERVE
                 │
                 └─→ Goal met or max steps reached? DONE

Observation: Agent Perceives State (Input, Environment, Tool Results)

The agent reads:

- Initial input (lead data, document text, customer question)

- Tool results from previous steps (API responses, database queries)

- Memory (conversation history, past decisions, knowledge base)

- Current state (what’s been done, what’s left)

Reasoning: LLM Decides Next Action (Planning, Tool Selection)

The LLM receives a prompt like:

You are a lead qualification agent. Your goal is to score and qualify this lead.

Available tools:

1. enrich_lead(lead_id) - Get additional data about the lead

2. score_lead(lead_data) - Score based on criteria

3. assign_to_sales_rep(lead_id, rep_id) - Assign lead to a rep

4. send_notification(rep_id, message) - Notify rep

Current state:

- Lead ID: 12345

- Company: Acme Corp

- Revenue: Unknown (need to enrich)

- Status: Not scored yet

What should you do next?

The LLM responds: “I should enrich the lead first to get revenue data, then score, then assign.”

Action: Execute Tool Call or Take Step Toward Goal

The agent executes the selected tool:

result = enrich_lead(lead_id=12345)

# Returns: {'revenue': '$10M', 'industry': 'SaaS', 'employees': 150}

Feedback: Evaluate Result, Adjust Strategy If Needed

The agent checks: Did the tool call succeed? Did it move toward the goal? Update memory and loop.

Loop: Repeat Until Goal Is Achieved or Max Steps Reached

The agent repeats observation → reasoning → action → feedback until:

- Goal is achieved (“Lead scored and assigned”)

- Max steps reached (prevent infinite loops)

- Error occurs (escalate to human)
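The loop and its three exit conditions can be sketched in a few lines of Python. This is a minimal illustration rather than a framework implementation: `decide` stands in for the LLM call, and the `Step` type, the `"done"` action name, and the demo tools are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    action: str                      # tool name, or "done" when the goal is met
    args: dict = field(default_factory=dict)


def run_agent(decide: Callable[[list], Step], tools: dict, max_steps: int = 10) -> dict:
    """Observe -> reason -> act -> feedback, until done, max steps, or error."""
    memory = []                      # short-term memory: (action, result) pairs
    for _ in range(max_steps):
        step = decide(memory)        # REASON: an LLM would pick the next action here
        if step.action == "done":
            return {"status": "done", "memory": memory}
        try:
            result = tools[step.action](**step.args)   # ACT
        except Exception as exc:     # unknown tool or tool failure: escalate
            return {"status": "escalated", "error": str(exc), "memory": memory}
        memory.append((step.action, result))           # FEEDBACK: update memory
    return {"status": "max_steps_reached", "memory": memory}


# Scripted stand-in for the LLM: enrich first, then score, then stop.
def scripted_decide(memory: list) -> Step:
    plan = [Step("enrich_lead", {"lead_id": "12345"}),
            Step("score_lead", {"revenue_musd": 10}),
            Step("done")]
    return plan[len(memory)]


demo_tools = {
    "enrich_lead": lambda lead_id: {"revenue_musd": 10},
    "score_lead": lambda revenue_musd: 85 if revenue_musd >= 5 else 40,
}

outcome = run_agent(scripted_decide, demo_tools)
```

Returning a status for every exit path lets the caller tell a finished task apart from one that ran out of steps or needs a human.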

Tool Integration (The Agent’s “Hands”)

Defining Tools (Function Signatures, Descriptions, Parameters)

Tools are functions the agent can call. Define them clearly:

tools = [

{

"name": "enrich_lead",

"description": "Get additional company data about a lead (revenue, employees, industry)",

"parameters": {

"lead_id": {"type": "string", "description": "Unique identifier of the lead"}

}

},

{

"name": "score_lead",

"description": "Score a lead on a scale of 0-100 based on fit criteria",

"parameters": {

"lead_data": {"type": "object", "description": "Lead information including revenue, industry, etc."}

}

}

]

Clear descriptions help the LLM choose the right tool.

Tool Calling (How Agents Select and Invoke Tools)

The LLM responds with a tool call:

{

"thought": "I need to enrich this lead to get revenue data",

"action": "enrich_lead",

"action_input": {"lead_id": "12345"}

}

Your agent framework executes the tool and passes the result back to the LLM.
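One way a framework might handle this step is to parse the model's JSON, check it against the known tools, and only then dispatch. The sketch below assumes the thought/action/action_input shape shown above; `TOOL_FUNCTIONS`, `REQUIRED_PARAMS`, and the stubbed `enrich_lead` are hypothetical names used for illustration.

```python
import json


def enrich_lead(lead_id: str) -> dict:
    # Stub standing in for a real enrichment API call.
    return {"lead_id": lead_id, "revenue": "$10M", "industry": "SaaS"}


TOOL_FUNCTIONS = {"enrich_lead": enrich_lead}
REQUIRED_PARAMS = {"enrich_lead": {"lead_id"}}


def dispatch(raw_llm_output: str) -> dict:
    """Parse a JSON tool call, validate it, and run the matching function."""
    try:
        call = json.loads(raw_llm_output)
    except json.JSONDecodeError as exc:
        return {"status": "error", "message": f"Invalid JSON: {exc}"}
    if not isinstance(call, dict):
        return {"status": "error", "message": "Tool call must be a JSON object"}

    name = call.get("action")
    args = call.get("action_input")
    if not isinstance(name, str) or name not in TOOL_FUNCTIONS:
        return {"status": "error", "message": f"Unknown tool: {name}"}
    if not isinstance(args, dict) or set(args) != REQUIRED_PARAMS[name]:
        expected = sorted(REQUIRED_PARAMS[name])
        return {"status": "error", "message": f"Expected parameters: {expected}"}
    return {"status": "success", "data": TOOL_FUNCTIONS[name](**args)}


reply = dispatch('{"thought": "Need revenue data", "action": "enrich_lead", '
                 '"action_input": {"lead_id": "12345"}}')
```

Error messages are returned as data rather than raised, so they can be fed back to the LLM as the next observation.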

Tool Result Handling (Parsing Responses, Error Recovery)

Handle both success and failure:

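def execute_tool(tool_name, tool_input):
    """Run a tool and always return a status dict the agent can inspect."""
    try:
        if tool_name == "enrich_lead":
            result = crm_api.enrich(tool_input["lead_id"])
            return {"status": "success", "data": result}
        # Report unknown tools instead of silently returning None
        return {"status": "error", "message": f"Unknown tool: {tool_name}"}
    except Exception as e:
        # Surface the failure to the agent rather than crashing the loop
        return {"status": "error", "message": str(e)}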

If a tool fails, the agent should try a different approach or escalate to a human.

Memory Systems (What Agents Remember)

Short-Term Memory (Current Conversation Context)

The agent’s working memory: current input, tool results, reasoning steps. Usually stored in the context window (the prompt).

Example: Lead qualification agent remembers:

- Original lead data

- Enrichment results

- Score

- Which sales rep was assigned

Long-Term Memory (Knowledge Base, Past Interactions)

Persistent memory: past decisions, learned patterns, knowledge base.

Use cases:

- Knowledge base: Agent retrieves relevant articles when answering customer questions

- Decision history: Agent learns which leads converted (improves scoring)

- Interaction logs: Agent remembers past interactions with a customer

Implement with vector databases (Pinecone, Weaviate) for semantic search.

Memory Limitations (Context Window Constraints)

LLMs have finite context windows, from a few thousand tokens on older models to 128K-200K tokens or more on current ones. Even with a large window, agents can’t remember everything. Strategies:

- Summarization: Compress old conversations into summaries

- Retrieval-augmented generation (RAG): Fetch only relevant memory when needed

- Hierarchical memory: Keep recent interactions in short-term, older ones in long-term
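The summarization and hierarchical strategies can be combined in a small buffer that keeps recent turns verbatim and folds older ones into a running summary. In this sketch the token count is approximated by word count and `naive_summarize` is a placeholder for an LLM call; both are simplifying assumptions, and the class name is hypothetical.

```python
from typing import Callable


def naive_summarize(previous_summary: str, dropped_turns: list) -> str:
    # Placeholder: a real agent would ask an LLM to compress these turns.
    parts = [previous_summary] if previous_summary else []
    parts += [" ".join(turn.split()[:3]) for turn in dropped_turns]
    return "; ".join(parts)


class ConversationMemory:
    """Keeps recent turns verbatim and compresses older ones into a summary."""

    def __init__(self, max_tokens: int,
                 summarize: Callable[[str, list], str] = naive_summarize):
        self.max_tokens = max_tokens
        self.summarize = summarize
        self.summary = ""
        self.turns = []

    @staticmethod
    def _tokens(text: str) -> int:
        return len(text.split())     # rough proxy; use a real tokenizer in practice

    def add(self, turn: str) -> None:
        self.turns.append(turn)
        dropped = []
        # Evict the oldest turns until the recent window fits the budget.
        while len(self.turns) > 1 and sum(map(self._tokens, self.turns)) > self.max_tokens:
            dropped.append(self.turns.pop(0))
        if dropped:
            self.summary = self.summarize(self.summary, dropped)

    def context(self) -> str:
        header = f"Summary of earlier steps: {self.summary}\n" if self.summary else ""
        return header + "\n".join(self.turns)


memory = ConversationMemory(max_tokens=8)
memory.add("Fetched lead 12345 from CRM")
memory.add("Enriched lead: revenue $10M, SaaS")
memory.add("Scored lead at 85")
```

Calling `memory.context()` yields the text that would be placed in the prompt: the compressed summary first, then the turns that still fit the budget.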

Reasoning Backbone (The “Brain”)

LLM Selection (GPT-4, Claude, Open-Source Models)

- GPT-4: Best reasoning, handles complex tasks. Cost: $30-60 per 1M tokens ($0.03-0.06 per 1K).

- Claude 3.5 Sonnet: Strong reasoning, long context (200K tokens). Cost: $3-15 per 1M tokens ($0.003-0.015 per 1K).

- Open-source (Llama 4): Cheaper ($0.01-0.03 per 1M tokens, depending on where the model is hosted), customizable, privacy-friendly.

For most agents, Claude or open-source models are sufficient and cheaper.

Reasoning Modes (Chain-of-Thought, Tree-of-Thought, Reflexion)

- Chain-of-thought: Agent thinks step-by-step. “I need to enrich → score → assign.”

- Tree-of-thought: Agent explores multiple paths, picks the best. Slower but more accurate for complex problems.

- Reflexion: Agent critiques its own output, retries if needed. Reduces hallucinations.

Example reflexion prompt:

Agent: "I'll assign this lead to rep John."

Critic: "Wait, did you check if John is already at capacity?"

Agent: "Good point. Let me check John's workload first."
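A reflexion loop of this kind can be sketched as propose, critique, retry. Here `propose_rep` and `capacity_critic` are stubs standing in for two LLM calls, and the workload data is invented for the example; only the control flow is the point.

```python
from typing import Callable, Optional


def reflexion_loop(propose: Callable[[list], str],
                   critique: Callable[[str], Optional[str]],
                   max_rounds: int = 3) -> dict:
    """Propose an answer, let a critic object, and retry with the objections."""
    objections = []
    answer = ""
    for round_number in range(1, max_rounds + 1):
        answer = propose(objections)
        objection = critique(answer)          # None means the critic accepts
        if objection is None:
            return {"answer": answer, "rounds": round_number, "accepted": True}
        objections.append(objection)
    return {"answer": answer, "rounds": max_rounds, "accepted": False}


# Hypothetical workload data: John is at capacity, Maria is not.
OPEN_SLOTS = {"John": 0, "Maria": 3}


def propose_rep(objections: list) -> str:
    # Stub "agent": skip any rep the critic has already rejected.
    rejected = {objection.split()[0] for objection in objections}
    return next(rep for rep in OPEN_SLOTS if rep not in rejected)


def capacity_critic(rep: str) -> Optional[str]:
    # Stub "critic": object when the proposed rep has no open slots.
    return None if OPEN_SLOTS[rep] > 0 else f"{rep} is already at capacity"


decision = reflexion_loop(propose_rep, capacity_critic)
```

Capping the number of rounds keeps the extra critique calls from turning into an unbounded cost.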

Trade-Offs: Speed vs. Accuracy

- Fast agents: Single-turn reasoning, no tool validation. 2-5 seconds per task. 85% accuracy.

- Accurate agents: Multi-step reasoning, validation, reflexion. 10-30 seconds per task. 95% accuracy.

Choose speed for real-time (customer support). Choose accuracy for high-stakes (financial decisions).

Types of Agents & When to Use Each

Reactive Agents (Simple, Fast, Stateless)

How They Work (Single Turn: Input → Action)

Reactive agents make a single decision and act. No multi-step planning.

Input: "What's my account balance?"

→ Agent queries database

→ Agent responds with balance

Done.

Best For: Simple Tool Use, API Calls, Immediate Responses

- Customer service Q&A (look up order, check balance)

- Simple API calls (get weather, check stock price)

- Immediate responses needed (< 2 second latency)

Example: Customer Service Chatbot With Knowledge Base Lookup

def customer_service_agent(question):

# 1. Search knowledge base

articles = search_kb(question)

# 2. LLM picks best article

response = llm.complete(f"""

Question: {question}

Relevant articles: {articles}

Provide an answer based on these articles.

""")

# 3. Return response

return response

Latency: 1-3 seconds. Cost: $0.001-0.01 per query.

Planning Agents (Goal-Driven, Multi-Step Reasoning)

How They Work (Decompose Goal Into Sub-Tasks, Execute Plan)

Planning agents break down complex goals into steps.

Goal: "Qualify and assign this lead"

→ Agent plans: [enrich, score, assign, notify]

→ Agent executes each step

→ Agent verifies goal achieved

Done.

Best For: Complex Workflows, Research Tasks, Data Analysis

- Lead qualification (enrich → score → assign)

- Document processing (extract → classify → store)

- Research tasks (search → summarize → compile)

Example: Lead Qualification Agent (Enrich → Score → Assign)

def lead_qualification_agent(lead_id):
    lead = crm.get_lead(lead_id)

    # Step 1: Enrich
    enriched = enrich_lead(lead)

    # Step 2: Score
    score = score_lead(enriched)

    # Step 3: Assign
    best_rep = find_best_sales_rep(score)
    crm.assign_lead(lead_id, best_rep)

    # Step 4: Notify
    send_slack(f"New qualified lead assigned to {best_rep}")

    return {"lead_id": lead_id, "score": score, "assigned_to": best_rep}

Latency: 5-15 seconds. Cost: $0.02-0.05 per lead.

Learning Agents (Adaptive, Improve Over Time)

How They Work (Incorporate Feedback, Adjust Behavior)

Learning agents get better with feedback.

Initial: Agent classifies document as "Invoice" (60% confidence)

Human feedback: "Actually, it's a Receipt"

Agent learns: Adjust classification prompts

Next time: Same document classified as "Receipt" (90% confidence)
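One lightweight way to adjust classification prompts is to store human corrections and replay them as few-shot examples. The sketch below assumes that approach; `FeedbackStore` and the prompt wording are hypothetical, and the model call itself is left out.

```python
class FeedbackStore:
    """Collects human corrections and turns them into few-shot examples."""

    def __init__(self, max_examples: int = 5):
        self.max_examples = max_examples
        self.corrections = []        # (document_snippet, correct_label)

    def record(self, snippet: str, predicted: str, correct: str) -> None:
        if predicted != correct:     # only mistakes are worth replaying
            self.corrections.append((snippet, correct))

    def build_prompt(self, document: str) -> str:
        lines = ["Classify the document as Invoice or Receipt."]
        # Most recent corrections first, capped to keep the prompt short.
        for snippet, label in self.corrections[::-1][: self.max_examples]:
            lines.append(f"Example: {snippet!r} -> {label}")
        lines.append(f"Document: {document!r} ->")
        return "\n".join(lines)


store = FeedbackStore()
store.record("Paid in full, thank you", predicted="Invoice", correct="Receipt")
store.record("Amount due in 30 days", predicted="Invoice", correct="Invoice")
prompt = store.build_prompt("Payment received 03/02")
```

The model's weights never change here; the improvement comes entirely from the corrected examples carried in the prompt.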

Best For: Long-Running Processes, Personalization, Optimization

- Content recommendation (learns user preferences)

- Customer support routing (learns which agents handle which issues best)

- Pricing optimization (learns which prices convert best)

Example: Content Recommendation Agent (Learns User Preferences)

def recommendation_agent(user_id, get_feedback):
    # Get user history, including feedback logged on earlier recommendations
    history = db.get_user_history(user_id)

    # LLM recommends based on patterns
    recommendation = llm.complete(f"""
User history: {history}
Based on past preferences, what should we recommend?
""")

    # Show recommendation, collect feedback (thumbs up/down).
    # get_feedback is a caller-supplied callback, e.g. a UI hook.
    feedback = get_feedback(recommendation)

    # Store feedback for future recommendations
    db.log_feedback(user_id, recommendation, feedback)

    return recommendation

Over time, recommendations improve as the agent learns user preferences.

Hierarchical Agents (Agents Managing Other Agents)

How They Work (Supervisor Agent Delegates to Specialists)

A supervisor agent coordinates specialist agents.

Supervisor: "Process this support ticket"

├─ Classifier agent: "This is a billing issue"

├─ Billing specialist agent: "Refund $50"

└─ Notification agent: "Send confirmation email"

Best For: Enterprise Workflows, Large-Scale Automation

- Content creation (research → write → edit → publish agents)

- Complex customer support (triage → resolve → escalate agents)

- Data processing pipelines (extract → transform → load agents)

Example: Content Creation Pipeline (Research → Write → Edit → Publish)

def content_pipeline_agent(topic):

# Supervisor delegates

research = research_agent(topic)

draft = writer_agent(research)

edited = editor_agent(draft)

published = publisher_agent(edited)

return {"topic": topic, "status": "published"}

Each specialist agent is optimized for its task. Supervisor orchestrates.
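When the next specialist depends on the content of the request, as in the support-ticket tree above, the supervisor becomes a router. The following sketch uses plain functions as stand-ins for specialist agents; every name in it is hypothetical, and the keyword classifier is a placeholder for an LLM call.

```python
from typing import Callable


def classify_ticket(ticket: str) -> str:
    # Stub classifier; a real one would be an LLM call or a trained model.
    return "billing" if "refund" in ticket.lower() else "general"


def billing_specialist(ticket: str) -> str:
    return "Refund request forwarded to billing"


def general_specialist(ticket: str) -> str:
    return "Answered from knowledge base"


SPECIALISTS: dict[str, Callable[[str], str]] = {
    "billing": billing_specialist,
    "general": general_specialist,
}


def supervisor(ticket: str) -> dict:
    """Classify the ticket, delegate to a specialist, and record the route."""
    category = classify_ticket(ticket)
    specialist = SPECIALISTS.get(category)
    if specialist is None:           # no specialist registered: hand off to a human
        return {"category": category, "handled_by": "human", "result": None}
    return {"category": category,
            "handled_by": specialist.__name__,
            "result": specialist(ticket)}


routed = supervisor("I want a refund for order 991")
```

Recording which specialist handled each ticket makes it possible to audit routing decisions later.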

Top AI Agent Tools & Frameworks in 2026 (Comparison Table)

Evaluation Criteria

Reasoning Capability (Chain-of-Thought, Planning, Reflexion)

How sophisticated the agent’s thinking is. Simple agents use chain-of-thought. Complex agents use planning and reflexion.

Tool Integration (How Easy to Add Custom Tools)

Can you easily connect APIs, databases, CRM systems? Or do you need custom code?

Learning Curve (Setup Time, Documentation Quality)

How quickly can a developer get a working agent? No-code platforms are faster; Python frameworks are more flexible.

Pricing Model (Free, Per-API-Call, Subscription)

Some frameworks are open-source (free). Others charge per API call or subscription.

Best Use Cases

What is each tool optimized for?

Comparison Table: Top AI Agent Tools & Frameworks (2026)

| Tool | Framework Type | Reasoning Capability | Tool Integration | Learning Curve | Pricing | Best For |
|---|---|---|---|---|---|---|
| n8n | Visual workflow builder | Chain-of-thought | 500+ integrations | Low | Free + paid | Non-technical users, quick setup |
| CrewAI | Python framework | Planning + reflexion | Custom tools (Python) | Medium | Open-source | Developers, complex agents |
| Autogen | Python framework | Multi-agent reasoning | Custom tools | High | Open-source | Research, multi-agent systems |
| LangGraph | Python framework | Planning + state management | LangChain ecosystem | Medium | Open-source | Complex workflows, state tracking |
| FlowHunt | Native platform | Chain-of-thought + planning | Native + API integrations | Low | Subscription | Workflow automation, ease-of-use |
| Lindy.ai | No-code platform | Chain-of-thought | 100+ integrations | Very low | Freemium | Non-technical, quick agents |
| Gumloop | No-code platform | Chain-of-thought | 50+ integrations | Very low | Freemium | Simple automation, templates |

Key differences:

- No-code (n8n, FlowHunt, Lindy.ai): Fast to build, limited customization. Good for standard workflows.

- Python frameworks (CrewAI, Autogen, LangGraph): Flexible, powerful, steeper learning curve. Good for complex logic.

- Open-source (CrewAI, Autogen, LangGraph): Free, but you manage infrastructure. Paid platforms handle hosting.

How to Choose the Right Tool for Your Use Case

- Quick prototype (< 1 week): Use no-code (FlowHunt, n8n, Lindy.ai)

- Complex agent with custom logic: Use Python framework (CrewAI, LangGraph)

- Multi-agent system (agents coordinating): Use Autogen

- Production workflow automation: Use FlowHunt (managed, monitored, scaled)

Building Your First Agent: Step-by-Step Architecture

Define the Agent’s Goal and Scope

What Problem Does It Solve?

Be specific. Bad: “Automate lead management.” Good: “Score leads 0-100, enrich with company data, assign to sales reps based on capacity.”

What Are the Success Metrics?

- Accuracy: % of correct decisions (target: > 90%)

- Latency: Time to complete task (target: < 10 seconds)

- Cost: API calls per task (target: < $0.05)

- Automation rate: % of tasks completed without human intervention (target: > 80%)

What Are the Constraints (Latency, Cost, Accuracy)?

Trade-offs:

- Real-time workflows: Need < 5 second latency. Use fast models, fewer tool calls.

- Batch workflows: Can tolerate 5-30 minutes. Use more accurate reasoning, more tool calls.

- Cost-sensitive: Use open-source models, fewer API calls.

- Accuracy-critical: Use expensive models (GPT-4), multi-step validation.

Design the Agent Loop

What Will the Agent Observe?

Input data: lead data, document text, customer question, context from memory.

What Reasoning Mode (Simple Chain-of-Thought vs. Planning)?

- Chain-of-thought: Fast, simple. “I’ll do step 1, then step 2.”

- Planning: Slower, more accurate. “Let me plan all steps first, then execute.”

What Tools Does It Need?

List the APIs, databases, services the agent will call.

Example for lead qualification:

- CRM API (get/update lead)

- Data enrichment API (get company data)

- Scoring model (score lead)

- Notification service (send Slack/email)

How Does It Know When to Stop?

Define the success condition. “Stop when lead is scored and assigned.”

Also define max steps to prevent infinite loops. “Stop after 10 steps, regardless.”

Implement and Test

Pseudocode or Real Code Example (CrewAI or FlowHunt)

CrewAI example:

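from crewai import Agent, Task, Crew

# enrich_tool, score_tool, assign_tool, and notify_tool are assumed to be
# CrewAI-compatible tools defined elsewhere.

# Define agents (CrewAI expects a role, a goal, and a backstory)
enrichment_agent = Agent(
    role="Data Enrichment Specialist",
    goal="Enrich lead data with company information",
    backstory="You research companies and fill in missing CRM fields.",
    tools=[enrich_tool],
)

scoring_agent = Agent(
    role="Lead Scoring Expert",
    goal="Score leads based on fit criteria",
    backstory="You apply the sales team's fit criteria consistently.",
    tools=[score_tool],
)

assignment_agent = Agent(
    role="Sales Manager",
    goal="Assign leads to best sales rep",
    backstory="You balance lead quality against each rep's workload.",
    tools=[assign_tool, notify_tool],
)

# Define tasks (each task also needs an expected_output)
enrich_task = Task(
    description="Enrich this lead: {lead_id}",
    expected_output="Revenue, employee count, and industry for the lead",
    agent=enrichment_agent,
)

score_task = Task(
    description="Score the enriched lead",
    expected_output="A fit score from 0 to 100 with a one-line rationale",
    agent=scoring_agent,
)

assign_task = Task(
    description="Assign lead to best rep and notify",
    expected_output="The assigned rep and confirmation that they were notified",
    agent=assignment_agent,
)

# Run crew
crew = Crew(
    agents=[enrichment_agent, scoring_agent, assignment_agent],
    tasks=[enrich_task, score_task, assign_task],
)

result = crew.kickoff(inputs={"lead_id": "12345"})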

Testing Strategy (Unit Tests for Tool Calls, Integration Tests for Loops)

def test_enrichment_tool():
    result = enrich_tool("lead_123")
    assert result["revenue"] is not None
    assert result["employees"] is not None

def test_score_lead():
    lead = {"company": "Acme", "revenue": "10M", "employees": 50}
    score = score_lead(lead)
    assert 0 <= score <= 100

def test_full_loop():
    result = lead_qualification_agent("lead_123")
    assert result["assigned_to"] is not None
    # A valid score can be 0, so check the range rather than score > 0
    assert 0 <= result["score"] <= 100

Debugging Common Issues (Infinite Loops, Hallucinations, Wrong Tools)

- Infinite loops: Add max step limit. Log each step. Monitor for repeated actions.

- Hallucinations: Add validation. Fact-check outputs against source data.

- Wrong tools: Improve tool descriptions. Add tool validation before execution.

Real Example: Lead Qualification Agent

Goal: Score Leads, Enrich Data, Assign to Sales Team

def lead_qualification_agent(lead_id):

"""

Autonomous agent that qualifies leads.

1. Fetches lead from CRM

2. Enriches with company data

3. Scores based on fit criteria

4. Assigns to best sales rep

5. Notifies rep

"""

Tools: CRM API, Data Enrichment Service, Scoring Model

tools = {

"get_lead": crm.get_lead,

"enrich_lead": enrichment_api.enrich,

"score_lead": scoring_model.score,

"find_best_rep": crm.find_available_rep,

"assign_lead": crm.assign,

"send_notification": slack.send

}

Pseudocode Walkthrough (Observe Lead → Enrich → Score → Assign)

# Step 1: Observe

lead = get_lead(lead_id)

print(f"Observing lead: {lead['company']}")

# Step 2: Reason (LLM decides next action)

# LLM: "I need to enrich this lead first"

# Step 3: Act

enriched = enrich_lead(lead)

print(f"Enriched: revenue={enriched['revenue']}")

# Step 4: Feedback + Loop

# LLM: "Now I'll score"

# Step 5: Act

score = score_lead(enriched)

print(f"Score: {score}")

# Step 6: Reason

# LLM: "Score is {score}, should assign to top rep"

# Step 7: Act

best_rep = find_best_rep(score)

assign_lead(lead_id, best_rep)

send_notification(best_rep, f"New lead: {lead['company']}")

print(f"Assigned to {best_rep}")

Results: Accuracy, Latency, Cost Metrics

- Accuracy: 94% (lead score matches manual review)

- Latency: 8 seconds (5 tool calls, 3 LLM reasoning steps)

- Cost: $0.03 per lead (GPT-4 API calls + enrichment API)

- Throughput: 450 leads/hour (single agent instance)

- Automation rate: 87% (13% escalated to human for review)

Integrating Agents with Business Systems

API Integration Patterns

REST APIs (Most Common)

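Most agents call REST APIs. Use a standard HTTP client:

import os
import requests

API_KEY = os.environ["CRM_API_KEY"]  # assumed variable name; keep keys out of code

def call_crm_api(endpoint, method="GET", data=None):
    url = f"https://api.crm.com/{endpoint}"
    headers = {"Authorization": f"Bearer {API_KEY}"}
    if method not in ("GET", "POST"):
        raise ValueError(f"Unsupported method: {method}")
    # requests.request handles both verbs; json=None sends no body on GET
    response = requests.request(method, url, json=data, headers=headers, timeout=10)
    response.raise_for_status()  # fail on 4xx/5xx instead of parsing an error body
    return response.json()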

Webhooks (Event-Driven Agent Triggers)

Trigger agents on events (new lead, incoming email, form submission):

@app.post("/webhook/new_lead")
def on_new_lead(lead_data: dict):
    # Assumes a FastAPI app, where a dict-typed parameter is read from the JSON body
    # Trigger agent asynchronously
    queue.enqueue(lead_qualification_agent, lead_data["id"])
    return {"status": "queued"}

Authentication & Security (API Keys, OAuth, Rate Limiting)

- API keys: Store in environment variables, not code

- OAuth: For user-facing integrations (Salesforce, HubSpot)

- Rate limiting: Respect API limits. Implement backoff and retry logic

from ratelimit import limits, sleep_and_retry

@sleep_and_retry

@limits(calls=100, period=60) # 100 calls per minute

def call_api(endpoint):

return requests.get(f"https://api.example.com/{endpoint}")
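The decorator above throttles outgoing calls; backoff and retry cover the case where a call still fails. A minimal sketch of exponential backoff with jitter follows. The helper name and defaults are assumptions, and the `sleep` parameter exists only so the demo can run without waiting. Production code would usually retry only on errors known to be transient, such as timeouts or HTTP 429 responses, rather than on every exception.

```python
import random
import time
from typing import Callable


def retry_with_backoff(call: Callable[[], object],
                       max_attempts: int = 4,
                       base_delay: float = 0.5,
                       sleep: Callable[[float], None] = time.sleep):
    """Retry `call` on failure, doubling the wait each time and adding jitter."""
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception:
            if attempt == max_attempts - 1:
                raise                            # out of attempts: let the caller decide
            delay = base_delay * (2 ** attempt)  # 0.5s, 1s, 2s, ...
            sleep(delay + random.uniform(0, delay / 2))


# Demo with a stub that fails twice, then succeeds.
attempts = {"count": 0}
waits = []


def flaky_api():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("temporary failure")
    return {"status": "ok"}


response = retry_with_backoff(flaky_api, sleep=waits.append)
```

The random jitter spreads retries out so that many agent instances hitting the same limit do not all retry at the same instant.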

Database Integration

Read-Only (Agent Queries Data)

Agent reads customer data, past interactions, knowledge base:

def get_customer_history(customer_id):

query = "SELECT * FROM interactions WHERE customer_id = %s"

return db.execute(query, (customer_id,))

Write Operations (Agent Stores Decisions/Results)

Agent writes decisions to database:

def store_lead_score(lead_id, score, assigned_to):

db.execute(

"UPDATE leads SET score = %s, assigned_to = %s WHERE id = %s",

(score, assigned_to, lead_id)

)

Transactions & Consistency (Ensure Data Integrity)

Use transactions for multi-step operations:

with db.transaction():

score = score_lead(lead)

db.update_lead_score(lead_id, score)

rep = find_best_rep(score)

db.assign_lead(lead_id, rep)

# All-or-nothing: if any step fails, rollback
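The all-or-nothing behavior can be demonstrated with Python's built-in sqlite3 module, where using the connection as a context manager commits on success and rolls back if the block raises. This is a self-contained sketch with an in-memory database; the table layout and function name are assumptions, and sqlite3 uses `?` placeholders rather than the `%s` style shown earlier.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE leads (id TEXT PRIMARY KEY, score INTEGER, assigned_to TEXT)")
conn.execute("INSERT INTO leads VALUES ('lead_123', NULL, NULL)")
conn.commit()


def score_and_assign(conn: sqlite3.Connection, lead_id: str, score: int, rep) -> bool:
    """Write score and assignment together; roll back both if either step fails."""
    try:
        with conn:  # commits on success, rolls back if the block raises
            conn.execute("UPDATE leads SET score = ? WHERE id = ?", (score, lead_id))
            if rep is None:
                raise ValueError("no sales rep available")
            conn.execute("UPDATE leads SET assigned_to = ? WHERE id = ?", (rep, lead_id))
        return True
    except ValueError:
        return False


failed = score_and_assign(conn, "lead_123", 85, None)
after_failure = conn.execute("SELECT score, assigned_to FROM leads").fetchone()
succeeded = score_and_assign(conn, "lead_123", 85, "john@acme.com")
after_success = conn.execute("SELECT score, assigned_to FROM leads").fetchone()
```

After the failed attempt the score is still empty, so the lead never ends up scored but unassigned.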

CRM & Business Tool Integration

Salesforce, HubSpot, Pipedrive Integration Patterns

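Use the vendor’s official SDK where one exists, or a well-maintained client library. For Salesforce in Python, a common choice is the community-maintained simple_salesforce package:

from simple_salesforce import Salesforce

# Credentials are assumed to be loaded from environment variables
sf = Salesforce(username=SF_USERNAME, password=SF_PASSWORD, security_token=SF_TOKEN)

# Update lead. Lead_Score__c is a hypothetical custom field;
# field names and status values depend on your org.
sf.Lead.update(lead_id, {
    "Lead_Score__c": 85,
    "OwnerId": rep_user_id,  # Salesforce user ID of the assigned rep
    "Status": "Qualified",
})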

Slack, Email, Jira Integration (Agent Sends Notifications/Updates)

from slack_sdk import WebClient

slack = WebClient(token=slack_token)

# Notify sales rep

slack.chat_postMessage(

channel="john",

text=f"New qualified lead: {lead['company']} (score: {score})"

)

Authentication & Permission Scoping

Use OAuth scopes to limit what agents can do:

# Agent can only read leads, update scores

# Cannot delete leads or access sensitive data

oauth_scopes = ["leads:read", "leads:update"]
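The API provider enforces scopes on its side, but an agent can also check them locally before it attempts a call, which avoids wasted requests and makes denials easy to log. The sketch below is a client-side guard under that assumption; the tool-to-scope mapping and the names in it are hypothetical.

```python
AGENT_SCOPES = {"leads:read", "leads:update"}

# Hypothetical mapping from each tool to the scope it needs.
TOOL_SCOPES = {
    "get_lead": "leads:read",
    "update_lead_score": "leads:update",
    "delete_lead": "leads:delete",
}


class ScopeError(PermissionError):
    """Raised when the agent tries a tool outside its granted scopes."""


def authorize(tool_name: str, granted_scopes: set) -> None:
    required = TOOL_SCOPES.get(tool_name)
    if required is None:
        raise ScopeError(f"Unknown tool: {tool_name}")      # deny by default
    if required not in granted_scopes:
        raise ScopeError(f"{tool_name} requires scope {required}")


def can_call(tool_name: str, granted_scopes: set = AGENT_SCOPES) -> bool:
    try:
        authorize(tool_name, granted_scopes)
        return True
    except ScopeError:
        return False
```

Unknown tools are denied rather than allowed, so a tool name invented by the model cannot slip past the check.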

Human-in-the-Loop Workflows

When Agents Need Human Approval

High-risk decisions: financial transactions, customer refunds, policy exceptions.

if decision_risk_score > 0.7:

# Route to human for approval

escalate_to_human(decision, reason="High risk")

else:

# Agent executes decision

execute_decision(decision)

Escalation Patterns (High-Risk Decisions, Edge Cases)

def lead_qualification_with_escalation(lead_id):
    lead = get_lead(lead_id)
    score = score_lead(lead)
    if score > 80:
        # High score, assign directly
        best_rep = find_best_rep(score)
        assign_lead(lead_id, best_rep)
    elif score > 50:
        # Borderline score (including exactly 80), route to human
        escalate_to_human(lead_id, "Review and assign")
    else:
        # Low score, reject
        reject_lead(lead_id)

Feedback Loops (Humans Correct Agent Mistakes)

@app.post("/feedback/lead_score")

def on_score_feedback(lead_id, actual_score, agent_score):

# Store feedback

db.log_feedback(lead_id, agent_score, actual_score)

# Retrain model on feedback (periodic)

if should_retrain():

retrain_scoring_model()

Common Agent Failures & How to Prevent Them

Infinite Loops (Agent Gets Stuck Repeating Same Action)

Cause: Poor Goal Definition, Tool That Doesn’t Make Progress

# Bad: Agent keeps calling same tool

Agent thinks: "I need to get lead data"

→ Calls get_lead()

→ Still doesn't have enriched data

→ Calls get_lead() again

→ Infinite loop

Prevention: Max Step Limit, Progress Tracking, Tool Diversity

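max_steps = 10
steps_taken = 0
last_action = None

while steps_taken < max_steps:
    action = llm.decide_next_action()
    if action == "done":
        # Assumes the LLM returns "done" once the goal is met
        break
    if action == last_action:
        # Same action twice in a row: no progress, stop and escalate
        escalate_to_human("Agent repeated the same action")
        break
    execute_action(action)
    last_action = action
    steps_taken += 1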

Recovery: Timeout, Escalation to Human

try:

result = agent.run(timeout=30) # 30 second timeout

except TimeoutError:

escalate_to_human("Agent loop timeout")

Hallucinations (Agent Invents Facts or Tool Outputs)

Cause: LLM Tendency to Confabulate, Poor Tool Descriptions

# Bad: Agent hallucinates tool output

Agent: "I called enrich_lead, got revenue=$100M"

Reality: enrich_lead() returned null (API failed)

Agent made up the result

Prevention: Retrieval-Augmented Generation (RAG), Tool Validation, Fact-Checking

def execute_tool_safely(tool_name, params):

try:

result = execute_tool(tool_name, params)

# Validate result

if result is None:

return {"error": "Tool returned null"}

if not validate_result(result):

return {"error": "Result failed validation"}

return result

except Exception as e:

return {"error": str(e)}

Use RAG to ground agent in facts:

# Instead of: "Summarize this article"

# Use: "Summarize this article, citing specific passages"

knowledge_base = vector_db.search(query)

prompt = f"""

Summarize this article. Only cite specific passages.

Article: {article}

Knowledge base: {knowledge_base}

"""

Recovery: Fallback to Human, Retry With Different Reasoning

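import time

def robust_agent_call(goal, retries=3):
    for attempt in range(retries):
        try:
            result = agent.run(goal)
            # Validate result
            if validate(result):
                return result
        except Exception:
            pass  # treat a crash like a failed validation and retry
        if attempt < retries - 1:
            time.sleep(2 ** attempt)  # Backoff: 1s, 2s, ...
    # Every attempt failed or produced an invalid result
    escalate_to_human(goal)
    return None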

Tool Misuse (Agent Calls Wrong Tool or With Wrong Parameters)

Cause: Ambiguous Tool Descriptions, Poor Reasoning

# Bad: Ambiguous tool description

"update_lead - Update a lead"

# Good: Clear description

"update_lead - Update a lead's score, status, or assigned_to field.

Parameters: lead_id (required), score (0-100), status (qualified/disqualified),

assigned_to (sales rep email)"

Prevention: Clear Tool Docs, Tool-Use Training, Validation Before Execution

def validate_tool_call(call):
    tool = tools.get(call["name"])
    if tool is None:
        # Unknown tool name
        return False
    # Every required parameter must be present
    return all(param in call["params"] for param in tool["required_parameters"])

# Validate before execution
tool_call = llm.decide_tool_call()
if not validate_tool_call(tool_call):
    # Tool call is invalid: ask the LLM to fix it, then re-check once
    tool_call = llm.correct_tool_call(tool_call)

if validate_tool_call(tool_call):
    execute_tool(tool_call)
else:
    escalate_to_human("Invalid tool call")

Recovery: Error Handling, Suggest Correct Tool, Retry

try:

result = execute_tool(tool_call)

except ToolExecutionError as e:

# Suggest correct tool

correct_tool = suggest_correct_tool(e)

llm.suggest_retry(correct_tool)

Cost Overruns (Agent Uses Too Many API Calls)

Cause: Inefficient Reasoning, Redundant Tool Calls

# Bad: Agent calls same tool multiple times

Agent: "Let me get lead data"

→ Calls get_lead()

→ Calls get_lead() again (forgot it already did)

→ Calls get_lead() a third time

Cost: 3x higher than needed

Prevention: Budget Limits, Call Deduplication, Caching

budget = {"tokens": 10000, "api_calls": 50}

spent = {"tokens": 0, "api_calls": 0}

def execute_with_budget(action):

global spent

if spent['api_calls'] >= budget['api_calls']:

raise BudgetExceededError()

result = execute_action(action)

spent['api_calls'] += 1

return result

Implement caching:

cache = {}

def get_lead_cached(lead_id):

if lead_id in cache:

return cache[lead_id]

result = crm_api.get_lead(lead_id)

cache[lead_id] = result

return result
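A plain dictionary cache never expires, which is risky for CRM data that changes. One possible refinement is a small time-to-live cache that deduplicates repeated calls with the same arguments for a limited period. The decorator below is a sketch under that assumption; `cached_tool` is a hypothetical name, it handles positional hashable arguments only, and the injectable clock exists so the demo needs no real waiting.

```python
import time
from functools import wraps


def cached_tool(ttl_seconds: float, clock=time.monotonic):
    """Cache a tool's results per argument tuple for `ttl_seconds`."""
    def decorator(func):
        store = {}                    # args -> (expires_at, result)

        @wraps(func)
        def wrapper(*args):
            now = clock()
            hit = store.get(args)
            if hit is not None and hit[0] > now:
                return hit[1]         # fresh cached result: no API call
            result = func(*args)
            store[args] = (now + ttl_seconds, result)
            return result
        return wrapper
    return decorator


api_calls = []                        # records every real call, for the demo
fake_time = [0.0]                     # controllable clock for the demo


@cached_tool(ttl_seconds=60, clock=lambda: fake_time[0])
def get_lead(lead_id: str) -> dict:
    api_calls.append(lead_id)         # stand-in for a billable CRM request
    return {"id": lead_id, "company": "Acme Corp"}


get_lead("12345")
get_lead("12345")                     # duplicate within the TTL: served from cache
fake_time[0] = 61.0
get_lead("12345")                     # entry expired: calls the API again
```

Three requests result in two real API calls; the duplicate in the middle costs nothing.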

Recovery: Cost Monitoring, Throttling, Cheaper Model Fallback

if cost_this_hour > budget_per_hour:

# Switch to cheaper model

switch_to_model("gpt-3.5-turbo") # Cheaper than GPT-4

Latency Issues (Agent Too Slow for Real-Time Use)

Cause: Multiple Reasoning Steps, Slow Tool Responses

An agent that makes five sequential API calls at 1 second each has at least 5 seconds of latency before any LLM reasoning time is counted.

Prevention: Parallel Tool Execution, Caching, Faster Models

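# Parallel execution
import asyncio

async def parallel_agent(lead_id):
    lead = await get_lead_async(lead_id)

    # Run independent calls in parallel. Scoring depends on the enriched
    # data, so it has to wait for enrichment to finish.
    enrichment, reps = await asyncio.gather(
        enrich_lead_async(lead),
        get_available_reps_async(),  # hypothetical helper
    )
    score = await score_lead_async(enrichment)

    return (enrichment, score, reps)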

Use faster models:

# Instead of GPT-4 (slower, more accurate)

# Use GPT-3.5-turbo (faster, still accurate enough)

model = "gpt-3.5-turbo" # 200ms latency vs 500ms for GPT-4

Recovery: Timeout, Return Partial Results, Queue for Async

try:
    result = agent.run(timeout=5)  # 5 second timeout
    return result
except TimeoutError:
    # Queue for async completion first; code after a return never runs
    queue.enqueue(complete_agent, lead_id)
    # Return partial results
    return partial_result
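With asyncio, the same timeout-and-fallback pattern can be written with the standard `asyncio.wait_for`. The sketch below is self-contained: `slow_agent` simulates agent latency with a sleep, and a plain list stands in for the job queue. Both are assumptions made so the example runs on its own.

```python
import asyncio


async def slow_agent(lead_id: str, delay: float) -> dict:
    await asyncio.sleep(delay)                    # stands in for LLM and tool latency
    return {"lead_id": lead_id, "status": "complete"}


async def run_with_deadline(lead_id: str, delay: float,
                            deadline: float, backlog: list) -> dict:
    """Return the full result if it arrives in time, else a partial one."""
    try:
        return await asyncio.wait_for(slow_agent(lead_id, delay), timeout=deadline)
    except asyncio.TimeoutError:
        backlog.append(lead_id)                   # finish later, off the request path
        return {"lead_id": lead_id, "status": "partial"}


backlog = []
fast = asyncio.run(run_with_deadline("lead_1", delay=0.01, deadline=0.5, backlog=backlog))
slow = asyncio.run(run_with_deadline("lead_2", delay=0.5, deadline=0.05, backlog=backlog))
```

`wait_for` cancels the slow task when the deadline passes, so the timed-out run does not keep consuming resources in the background.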

Measuring Agent Performance & ROI

Key Metrics to Track

Accuracy (% of Correct Decisions/Actions)

Compare agent output to ground truth (human review, actual outcomes).

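correct = 0
total = len(agent_decisions)

for decision in agent_decisions:
    # Compare the agent's output (assumed .output attribute) with the
    # human-reviewed label for the same task
    if decision.output == human_review[decision.id]:
        correct += 1

accuracy = correct / total * 100 if total else 0.0  # e.g., 94%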

Latency (Time to Complete Task)

Measure end-to-end time from input to output.

start = time.time()

result = agent.run(input_data)

latency = time.time() - start # e.g., 8.5 seconds

Cost Per Task (API Calls, Compute, Human Review)

cost = (llm_api_calls * llm_cost) + (tool_calls * tool_cost) + (human_review_rate * review_hours_per_task * hourly_rate)

# e.g., $0.03 per lead

User Satisfaction (If Human-in-the-Loop)

Survey users: “How satisfied are you with agent decisions?”

Automation Rate (% of Tasks Completed Without Human Intervention)

automated = tasks_completed_by_agent

total = all_tasks

automation_rate = automated / total * 100 # e.g., 87%
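These metrics are easier to track when every task leaves behind one record and a single function aggregates them. The sketch below assumes such a record format; `TaskRecord`, its fields, and the sample values are hypothetical. It reports the 95th-percentile latency instead of the mean, since slow outliers matter more than the average for real-time use.

```python
import math
from dataclasses import dataclass


@dataclass
class TaskRecord:
    correct: bool          # agent decision matched human review
    escalated: bool        # task needed human intervention
    latency_s: float       # end-to-end seconds
    cost_usd: float        # LLM + tool spend for this task


def summarize(records: list) -> dict:
    """Aggregate per-task records into the headline metrics."""
    n = len(records)
    if n == 0:
        raise ValueError("no records to summarize")
    latencies = sorted(r.latency_s for r in records)
    p95_index = max(0, math.ceil(0.95 * n) - 1)   # nearest-rank percentile
    return {
        "accuracy_pct": 100 * sum(r.correct for r in records) / n,
        "automation_rate_pct": 100 * sum(not r.escalated for r in records) / n,
        "p95_latency_s": latencies[p95_index],
        "cost_per_task_usd": sum(r.cost_usd for r in records) / n,
    }


records = [
    TaskRecord(correct=True, escalated=False, latency_s=6.0, cost_usd=0.02),
    TaskRecord(correct=True, escalated=False, latency_s=8.0, cost_usd=0.03),
    TaskRecord(correct=False, escalated=True, latency_s=12.0, cost_usd=0.05),
    TaskRecord(correct=True, escalated=False, latency_s=7.0, cost_usd=0.02),
]
report = summarize(records)
```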

ROI Calculation

Baseline: Cost of Manual Process (Human Hours × Hourly Rate)

Manual lead qualification:

- 6,000 leads/month

- 5 minutes per lead

- 500 hours/month

- $20/hour = $10,000/month

Agent Cost: Infrastructure + API Calls + Human Oversight

Agent-driven:

- 6,000 leads/month

- $0.03 per lead (API calls)

- $180 total API cost

- $1,000/month human review (10% escalation: 600 leads × 5 minutes = 50 hours at $20/hour)

- $100/month infrastructure

Total: $1,280/month

Payback Period: When Agent Cost < Manual Cost

Savings per month: $10,000 - $1,280 = $8,720

ROI: 681% (8,720 / 1,280)

Payback period: < 1 month (immediate)

Example: Lead Qualification Agent ROI

Manual process:

- 30,000 leads/month

- 5 min per lead = 2,500 hours = $50,000/month

Agent process:

- 30,000 leads/month

- $0.03 per lead = $900

- 5% escalation (1,500 leads × 5 min = 125 hours) = $2,500 human time

- Infrastructure = $500

Total: $3,900/month

Savings: $50,000 - $3,900 = $46,100/month

ROI: 1,182%
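Because the result is sensitive to unit conversions (minutes versus hours) and to lead volume, it helps to put the calculation in code and rerun it with your own numbers. The function below is a sketch of the ROI arithmetic described in this section; the parameter names are assumptions and the inputs are illustrative.

```python
def monthly_roi(leads: int, minutes_per_lead: float, hourly_rate: float,
                api_cost_per_lead: float, escalation_rate: float,
                infrastructure_cost: float) -> dict:
    """Compare manual and agent-driven monthly cost for lead qualification."""
    manual_hours = leads * minutes_per_lead / 60          # minutes -> hours
    manual_cost = manual_hours * hourly_rate
    # Escalated leads still need a full manual review.
    review_hours = leads * escalation_rate * minutes_per_lead / 60
    agent_cost = (leads * api_cost_per_lead
                  + review_hours * hourly_rate
                  + infrastructure_cost)
    savings = manual_cost - agent_cost
    return {
        "manual_hours": manual_hours,
        "manual_cost": manual_cost,
        "agent_cost": agent_cost,
        "savings": savings,
        "roi_pct": 100 * savings / agent_cost,
    }


# Illustrative inputs: 6,000 leads at 5 minutes each is 500 hours of manual work.
estimate = monthly_roi(leads=6000, minutes_per_lead=5, hourly_rate=20,
                       api_cost_per_lead=0.03, escalation_rate=0.10,
                       infrastructure_cost=100)
```

At low volumes the fixed infrastructure cost dominates, so rerunning the function with a few hundred leads per month gives a much smaller ROI than the high-volume case.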

Continuous Improvement

Monitor Metrics Over Time

# Track daily metrics

daily_metrics = {

'accuracy': 0.94,

'latency': 8.5,

'cost_per_task': 0.03,

'automation_rate': 0.87

}

A/B Test Different Agent Configurations

# Test 1: GPT-4 (more accurate, slower)

# Test 2: GPT-3.5-turbo (faster, slightly less accurate)

# Measure: accuracy, latency, cost

# Choose based on your priorities
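For the comparison to be fair, each task should land in the same arm every time it is processed, and the split should be roughly even. A common way to get both is to hash the task ID. The sketch below assumes that approach; the function name, the salt, and the variant labels are illustrative.

```python
import hashlib


def assign_variant(task_id: str,
                   variants=("gpt-4", "gpt-3.5-turbo"),
                   salt: str = "exp-1") -> str:
    """Deterministically map a task to a variant so reruns stay in the same arm."""
    digest = hashlib.sha256(f"{salt}:{task_id}".encode()).hexdigest()
    return variants[int(digest, 16) % len(variants)]


assignments = {f"lead_{i}": assign_variant(f"lead_{i}") for i in range(1000)}
share_gpt4 = sum(v == "gpt-4" for v in assignments.values()) / len(assignments)
```

Changing the salt reshuffles the assignment, which is useful when starting a new experiment on the same set of leads.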

Incorporate Feedback to Improve Accuracy

# Collect human feedback on agent mistakes

feedback = db.get_feedback()

# Retrain agent (adjust prompts, add examples)

agent.retrain(feedback)

# Measure: accuracy improves from 94% to 96%

Scale Successful Agents, Retire Underperforming Ones

Monitor ROI. If an agent isn’t delivering value, retire it. Scale successful agents to other teams.


{{ cta-dark-panel heading="Build Agents Without the Complexity" description="FlowHunt’s native agent platform handles tool integration, error handling, and monitoring. Start building autonomous workflows in minutes, not weeks." ctaPrimaryText="Try FlowHunt Free" ctaPrimaryURL="https://app.flowhunt.io/sign-in" ctaSecondaryText="Book a Demo" ctaSecondaryURL="https://www.flowhunt.io/demo/" gradientStartColor="#7c3aed" gradientEndColor="#ec4899" gradientId="cta-ai-agents" }}