FlowHunt 2.6.12: Slack Integration, Intent Classification and More

FlowHunt 2.6.12 introduces the Slack integration, intent classification, and Gemini model, enhancing AI chatbot functionality, customer insights, and team workf...

Connect any LLM (Claude, GPT, Gemini, Grok, Llama, Mistral) to Slack using FlowHunt’s no-code flow builder. The setup is the same for every model — pick the model that fits your use case, then ship in minutes.

Adding an AI assistant to Slack used to mean picking a vendor, writing integration code, and rebuilding everything when a better model shipped six months later. With FlowHunt, the integration is decoupled from the model: you build the Slack flow once, plug in the LLM you want — Claude, GPT, Gemini, Grok, Llama, Mistral — and swap it any time without touching the rest.

This guide walks through the full setup. The first half is the same for every model. The second half breaks down which model to pick for which use case, with notes specific to each LLM family. Skip to the section that matches your stack, or read end-to-end if you’re starting from zero.

Slack is where teams ask questions. An AI agent that lives there answers them instantly — without context-switching to a separate chat tool, dashboard, or knowledge base. Common deployments:

The bot lives in Slack, so adoption is automatic — no one has to learn a new tool.

The setup is identical regardless of which AI model you choose. Pick your model in step 4; everything else stays the same.

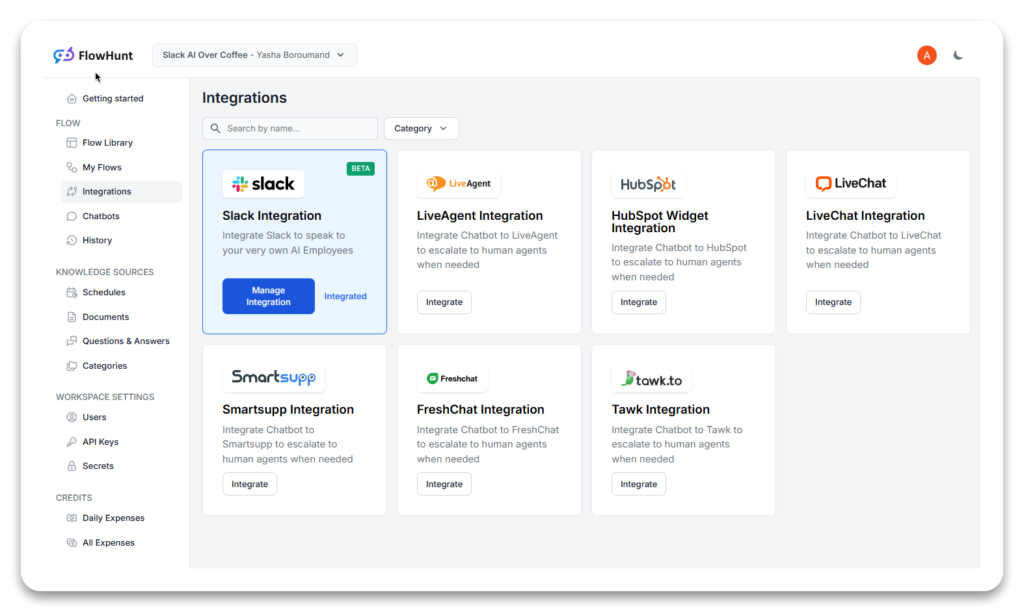

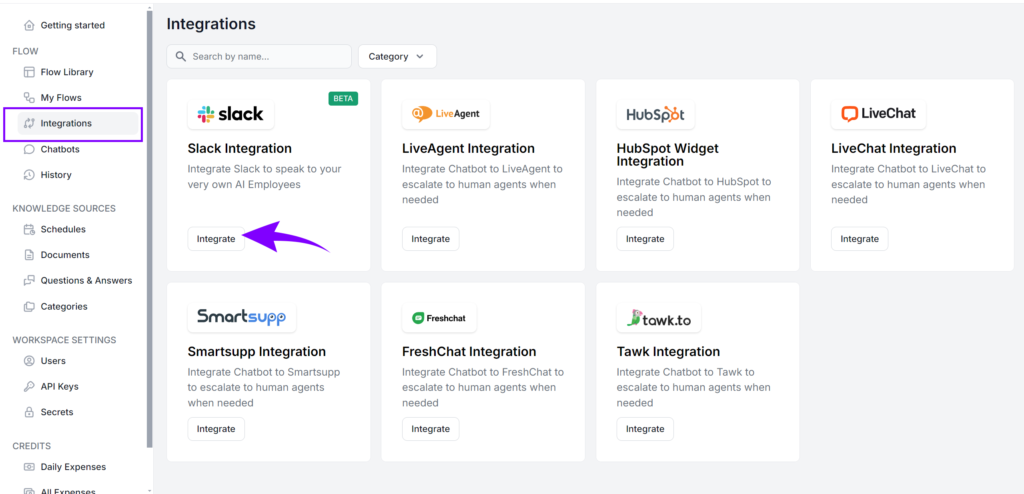

Log in to your FlowHunt account and open the Integrations tab. Select Slack, click Connect, and authorize the app on Slack’s OAuth screen. Grant the read/write permissions FlowHunt requests — these let the bot receive messages and post replies in your workspace.

Your workspace URL appears in the top-left corner of the Slack desktop or web app — copy it from there if FlowHunt asks. Once authorized, Slack is connected and ready to use in any flow.

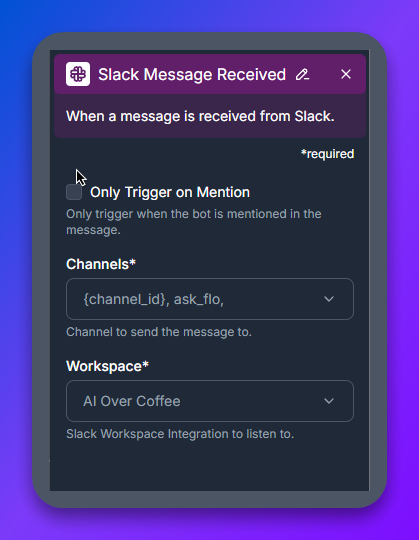

In FlowHunt’s flow builder, drop a Slack Message Received component on the canvas. This block listens for incoming Slack messages and triggers the rest of the flow.

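Configure two settings: the channel the bot listens on, and whether it triggers only when @-mentioned (the “Only Trigger on Mention” option). A dedicated #ai-assistant channel is the cleanest setup.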

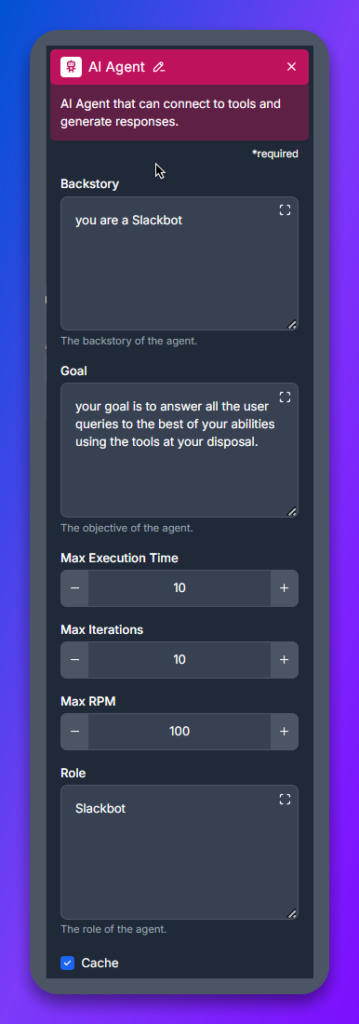

The AI Agent block is the bot’s reasoning layer. It takes the user’s message, decides what tools to use, and crafts the response.

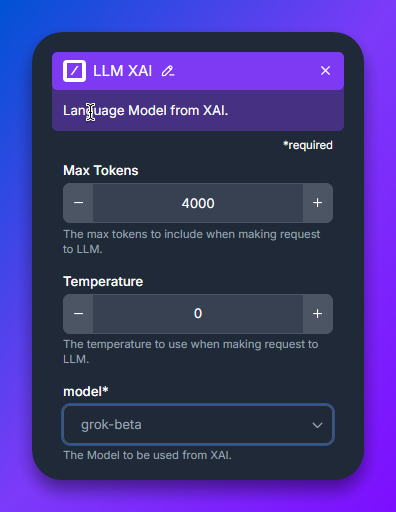

Connect an LLM component to the AI Agent. This is where you choose which AI model powers the bot. FlowHunt has a separate LLM component for each provider — LLM OpenAI, LLM Anthropic, LLM Google, LLM Meta, LLM Mistral, LLM xAI — and inside each you select the specific model variant.

This is the only step that differs per model. For a comparison and per-family notes, see the “Pick the right AI model” section below.

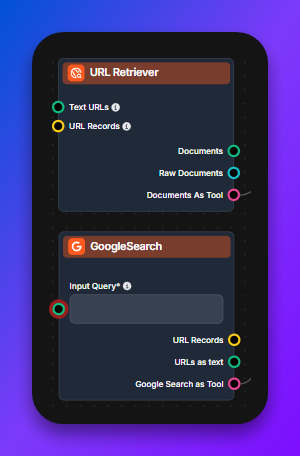

The AI Agent gets dramatically more useful when it can use tools. Common ones:

Tools are model-agnostic. Any LLM you pick in step 4 can use any tool you wire in.

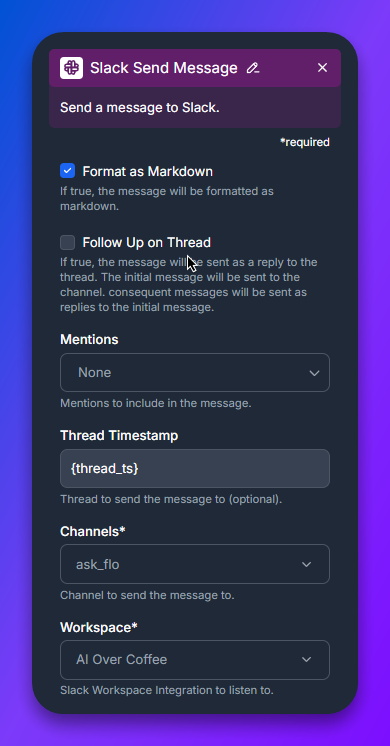

Finish the flow with a Slack Send Message component, configured for the same channel and workspace as step 2. Save the flow, open Slack, and @-mention the bot in your test channel. The bot should respond using the model you picked in step 4.

That’s the entire setup. Switching models later is a one-click change in step 4 — no code edits, no flow rebuild.
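The decoupling described above can be pictured as a small pipeline. The sketch below is not FlowHunt's implementation or API: `SlackMessage`, `LLM`, `EchoModel`, `ShoutModel`, and `run_flow` are hypothetical names, and the two stand-in models make no network calls. It only illustrates why swapping the model leaves the trigger and reply steps untouched.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass
class SlackMessage:
    channel: str
    text: str


class LLM(Protocol):
    """Anything with a complete() method can play the role of the model in step 4."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for one provider's model (hypothetical, no network call)."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutModel:
    """Stand-in for a different provider's model (hypothetical)."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


def run_flow(message: SlackMessage, model: LLM) -> SlackMessage:
    """Trigger -> agent -> reply. Only the `model` argument changes per provider."""
    answer = model.complete(message.text)
    # The reply goes back to the channel the message came from.
    return SlackMessage(channel=message.channel, text=answer)


incoming = SlackMessage(channel="#ai-assistant", text="where is the onboarding doc?")
print(run_flow(incoming, EchoModel()).text)
print(run_flow(incoming, ShoutModel()).text)
```

Both calls use the same `run_flow`; only the object passed as `model` differs, which mirrors replacing the LLM component while the Slack components stay in place.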

Every major LLM family works in FlowHunt’s Slack flow. The differences come down to cost, latency, context window, reasoning depth, and tool-calling quality. Use the table to shortlist, then read the family-specific section for setup notes.

| Model family | Best for | Latency | Cost | Notes |
|---|---|---|---|---|
| Claude (Anthropic) | Long-context analysis, careful reasoning, code review | Medium | Medium–High | Strong at following nuanced instructions; excellent for internal Q&A over docs |
| GPT / o-series (OpenAI) | General-purpose, broad tool ecosystem, multimodal | Low–Medium | Low (mini) – High (o-series) | GPT-4o Mini is the default sweet spot; o1 / o3 for hard reasoning |
| Gemini (Google) | Massive context windows, fast multimodal, search-grounded | Low | Low–Medium | 1.5 Pro handles 1M+ tokens; great for whole-doc Slack Q&A |
| Grok (xAI) | Real-time / news-aware queries, X (Twitter) data, casual tone | Low | Medium | Best when the bot needs current-events awareness |
| Llama (Meta) | Self-hosted / private deployments, cost-sensitive workloads | Depends on host | Low (self-hosted) | Open weights; use when data residency matters |
| Mistral | Open-weight, balanced cost/quality, EU-friendly hosting | Low | Low–Medium | Mistral Large competes with GPT-4o at lower cost |

Pick one to start. Switching models in FlowHunt is a one-click change in the LLM component, so over-thinking the initial choice doesn’t pay off — ship with a sensible default, measure quality on real Slack traffic, iterate.

Each section below is self-contained. Pick the section for the model family you’re connecting and follow its notes.

Claude is Anthropic’s family of LLMs, well-suited for Slackbots that handle nuanced internal Q&A, document summarization, code review, and careful instruction-following. To connect Claude to Slack, drop the LLM Anthropic component in step 4 and pick the variant:

For internal-knowledge Slackbots over Notion or Confluence, Claude 3.5 Sonnet plus a Document Retriever is the most reliable starting point.

OpenAI’s GPT and o-series models are the broadest choice for Slack — strong general-purpose performance, the most mature tool-calling, and multimodal input (vision, audio). Drop the LLM OpenAI component in step 4 and pick the variant:

For most teams, start with GPT-4o Mini. Promote to GPT-4o or o1 only on flows where users complain about answer quality.

Google Gemini is the strongest choice when context window matters — Gemini 1.5 Pro handles over 1M tokens, enough to drop entire codebases or document sets into a single Slack query. Drop the LLM Google component in step 4 and pick the variant:

If your Slackbot needs to reason over your full knowledge base in a single pass (no retrieval step), Gemini Pro’s context window is the cleanest answer.
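Whether a knowledge base fits in a single pass can be checked roughly before committing to the no-retrieval approach. The sketch below is illustrative only: `estimate_tokens` and `fits_in_context` are hypothetical helpers, the 4-characters-per-token ratio is a coarse rule of thumb for English text rather than any model's real tokenizer, and the 8,000-token answer reserve is an arbitrary assumption.

```python
from typing import Sequence


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real tokenizers vary by model and language."""
    return int(len(text) / chars_per_token) + 1


def fits_in_context(
    documents: Sequence[str],
    question: str,
    context_window: int = 1_000_000,  # the 1M-token figure quoted above
    reserve_for_answer: int = 8_000,  # assumed headroom for the reply
) -> bool:
    """Return True if all documents plus the question fit in one prompt."""
    needed = sum(estimate_tokens(doc) for doc in documents)
    needed += estimate_tokens(question)
    return needed + reserve_for_answer <= context_window


small_kb = ["x" * 40_000] * 10   # about 100k estimated tokens
large_kb = ["x" * 4_000_000]     # about 1M estimated tokens on its own
print(fits_in_context(small_kb, "Summarize our refund policy"))
print(fits_in_context(large_kb, "Summarize our refund policy"))
```

If the check fails, a retrieval step (for example the Document Retriever mentioned earlier) is the more practical route than a single-pass prompt.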

xAI’s Grok connects to FlowHunt’s Slack flow the same way as the other models: drop the LLM xAI component in step 4 (or, depending on your FlowHunt version, use the LLM OpenAI component pointed at the Grok endpoint) and pick the Grok variant. Grok’s distinguishing trait is real-time awareness. It has access to live information, including X (Twitter) data, which makes it the best fit when the Slackbot needs current-events context such as news, market data, or breaking developments. Pair it with the Google Search Tool for even broader web access.

Meta’s Llama family is the open-weight option — use it when data residency, self-hosting, or per-token cost rules out hosted APIs. Drop the LLM Meta component in step 4 and pick the variant:

Llama is the right answer when your security or compliance team requires the model to run on infrastructure you control, or when high message volume makes hosted-API costs prohibitive.

Mistral is the European open-weight contender — strong models, EU-friendly hosting, and good price-performance. Drop the LLM Mistral component in step 4 and pick the variant:

Choose Mistral when EU data residency matters, or when you want open-weight flexibility and, on some benchmarks, quality closer to frontier models than Llama 3.x offers.

Three flow patterns cover most Slack deployments. Build any of them on top of the setup above by adjusting the AI Agent’s tools and prompt:

These patterns layer cleanly: a single Slack flow can combine knowledge-base retrieval, live web search, and internal API calls, with the LLM choosing the right tool per query.
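The layering can be sketched as a tool registry with a per-query choice. Everything here is hypothetical: the three tool functions return canned strings, and `choose_tool` uses keyword matching purely as a stand-in for the decision that the LLM makes through tool calling in a real agent.

```python
from typing import Callable, Dict

Tool = Callable[[str], str]


def search_knowledge_base(query: str) -> str:
    return f"[kb] top document for: {query}"


def search_web(query: str) -> str:
    return f"[web] latest results for: {query}"


def call_internal_api(query: str) -> str:
    return f"[api] status lookup for: {query}"


# One flow, several tools registered side by side.
TOOLS: Dict[str, Tool] = {
    "knowledge_base": search_knowledge_base,
    "web_search": search_web,
    "internal_api": call_internal_api,
}


def choose_tool(query: str) -> str:
    """Stand-in for the model's tool choice (a real agent lets the LLM decide)."""
    lowered = query.lower()
    if "latest" in lowered or "news" in lowered:
        return "web_search"
    if "order" in lowered or "ticket" in lowered:
        return "internal_api"
    return "knowledge_base"


def answer(query: str) -> str:
    """Route the query to whichever tool was chosen and return its result."""
    return TOOLS[choose_tool(query)](query)


for q in ("latest news on pricing", "status of ticket 42", "how do I request vacation?"):
    print(answer(q))
```

Adding a new capability means registering one more entry in `TOOLS`; the rest of the flow does not change.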

The bot doesn’t respond to messages. Check that “Only Trigger on Mention” matches how you’re testing — if it’s enabled, you must @-mention the bot. Confirm the channel in Slack Message Received matches the channel you’re posting in.
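The two checks above amount to a small predicate. This is a sketch of the logic, not FlowHunt's code: `should_trigger` and its parameters are hypothetical. The one Slack-specific detail it relies on is that Slack encodes a user mention inside message text as `<@USER_ID>` (sometimes with a `|label` suffix).

```python
import re


def should_trigger(
    text: str,
    channel: str,
    *,
    configured_channel: str,
    bot_user_id: str,
    only_on_mention: bool,
) -> bool:
    """Return True if a message should start the flow."""
    # A message in any other channel never triggers the flow.
    if channel != configured_channel:
        return False
    if only_on_mention:
        # Match <@U123> or <@U123|name>, but not a longer ID such as <@U1234>.
        pattern = rf"<@{re.escape(bot_user_id)}(?:\|[^>]*)?>"
        return re.search(pattern, text) is not None
    return True


settings = dict(configured_channel="C1", bot_user_id="U123", only_on_mention=True)
print(should_trigger("<@U123> where is the handbook?", "C1", **settings))
print(should_trigger("where is the handbook?", "C1", **settings))
```

A message without the mention, or one posted in a different channel, falls through to `False`, which is the "bot stays silent" symptom described above.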

The bot responds but the answer is poor. Iterate on the AI Agent’s backstory and goal first — they’re more impactful than swapping models. If quality is still off after prompt iteration, promote to a stronger model in the LLM component (Mini → standard → top-tier).

Permissions errors after Slack auth. Reconnect the Slack integration in FlowHunt’s Integrations tab and re-grant permissions. Slack occasionally invalidates tokens after workspace owner changes.

Long replies get truncated in Slack. Slack has a per-message character limit. Add a post-processing step in the flow to split long responses, or instruct the AI Agent in its goal to keep responses under 3,000 characters when posting to Slack.
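A post-processing step of that kind could look like the following. `split_for_slack` is a hypothetical helper, and the 3,000-character default simply reuses the figure suggested above; it is a sketch of the splitting logic, not a FlowHunt component.

```python
from typing import List


def split_for_slack(text: str, limit: int = 3000) -> List[str]:
    """Split text into chunks of at most `limit` characters.

    Breaks at the last newline or space inside each window when one exists,
    and falls back to a hard cut for unbroken runs of characters.
    """
    if limit <= 0:
        raise ValueError("limit must be positive")
    chunks: List[str] = []
    remaining = text.strip()
    while len(remaining) > limit:
        window = remaining[:limit]
        cut = max(window.rfind("\n"), window.rfind(" "))
        if cut <= 0:
            cut = limit  # no whitespace to break on, so cut mid-run
        chunks.append(remaining[:cut].rstrip())
        remaining = remaining[cut:].lstrip()
    if remaining:
        chunks.append(remaining)
    return chunks


long_reply = "word " * 2000
parts = split_for_slack(long_reply)
print(len(parts), [len(p) for p in parts])
```

Each chunk can then be sent as its own Slack message, in order, so a long answer arrives as a short sequence of posts instead of being cut off.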

The whole setup (connecting Slack, building the flow, picking a model) is a one-evening project in FlowHunt. The flow you build today works with any future model: when GPT-6 or Claude 5 ships, you swap the LLM component and the rest of the flow keeps running.

Start with FlowHunt’s free tier, connect Slack, and ship a working AI Slackbot before lunch.

Arshia is an AI Workflow Engineer at FlowHunt. With a background in computer science and a passion for AI, he specializes in creating efficient workflows that integrate AI tools into everyday tasks, enhancing productivity and creativity.

FlowHunt's no-code flow builder connects Slack to every major LLM — Claude, GPT, Gemini, Grok, Llama, Mistral — through one consistent flow. No code, no infrastructure to manage.

