
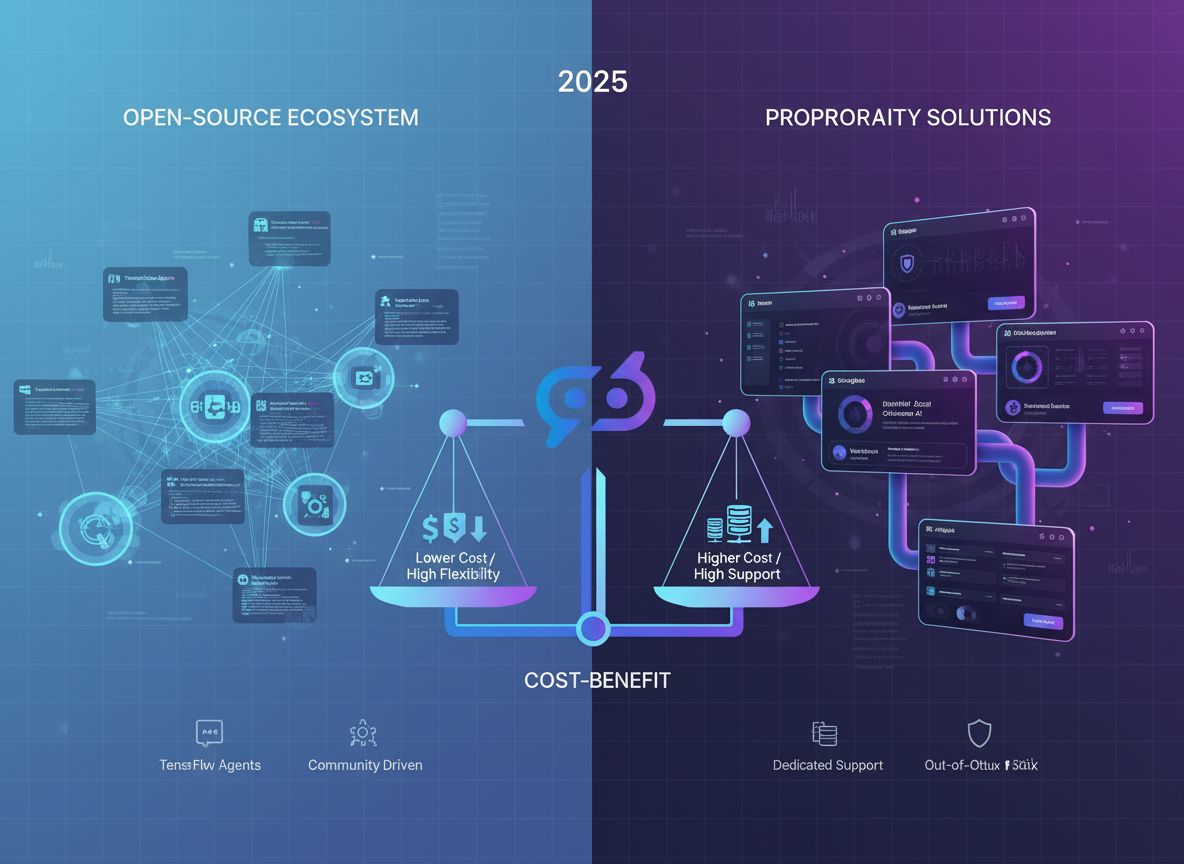

A comprehensive analysis of open-source and proprietary AI agent builders in 2025, examining costs, flexibility, performance, and ROI to help organizations make informed decisions.

Here’s a cost comparison between open-source and proprietary AI agent builders:

| Cost Category | Open-Source | Proprietary |
|---|---|---|
| Licensing Fees | $0 | $5,000–$50,000+/year |
| Infrastructure (Annual) | $30,000–$100,000+ | $10,000–$30,000 |
| Development Team (Annual) | $200,000–$500,000+ | $50,000–$150,000 |
| Security & Compliance | $20,000–$60,000 | Included |
| Support & Training | Community (variable) | $10,000–$30,000 |
| Total Year 1 TCO | $250,000–$660,000+ | $75,000–$260,000 |
| Scaling Costs | Increases significantly | Predictable, linear |

AI agent builders are frameworks, platforms, and tools that enable developers to create autonomous AI systems capable of understanding goals, planning actions, and executing tasks with minimal human oversight. Unlike traditional chatbots or generative AI applications that respond to user input, AI agents operate proactively, making decisions based on environmental context and predefined objectives.

The significance of AI agent builders in 2025 cannot be overstated. We are witnessing what industry analysts call the “agentic era”—a fundamental shift in how artificial intelligence creates value. Rather than serving as sophisticated search engines or content generators, AI agents now function as autonomous workers, project managers, and decision-making systems. They can manage complex workflows, integrate with multiple data sources, handle exceptions, and continuously improve their performance through feedback loops.

This evolution has created unprecedented demand for robust, scalable, and cost-effective agent development platforms. Organizations across healthcare, finance, manufacturing, and professional services are racing to deploy AI agents that can automate knowledge work, reduce operational costs, and unlock new revenue streams. The question of whether to build on open-source or proprietary foundations has become one of the most consequential technology decisions facing enterprises today.

The open-source AI agent ecosystem has matured dramatically. Frameworks like LangChain, AutoGen, CrewAI, and SuperAGI have created vibrant communities of developers contributing innovations, sharing best practices, and building specialized tools. The appeal is immediate: zero licensing costs, complete transparency, and the ability to customize every aspect of your agent architecture.

Open-source solutions offer unparalleled flexibility. You control the entire codebase, can modify algorithms to suit your specific use cases, and avoid vendor lock-in. For organizations with sophisticated AI/ML teams, this freedom enables rapid experimentation and the ability to implement cutting-edge techniques before they appear in proprietary products. The open-source community often innovates faster than commercial vendors, with new capabilities and improvements appearing continuously on GitHub.

However, this flexibility comes with substantial hidden costs. Building and maintaining an open-source AI agent infrastructure requires significant technical expertise. Your team must handle infrastructure provisioning, security hardening, performance optimization, and ongoing maintenance. You’re responsible for monitoring security vulnerabilities, applying patches, and ensuring compliance with data protection regulations. These operational responsibilities accumulate quickly, transforming what appears to be a cost-free solution into a labor-intensive undertaking.

The infrastructure costs associated with open-source AI agents are particularly significant. Running large language models, managing vector databases, orchestrating distributed computing resources, and maintaining high-availability systems demands substantial computational resources. Organizations often underestimate these costs, discovering only after deployment that infrastructure spending represents 30% or more of their total AI project budget.

Proprietary AI agent builders—platforms like those offered by major cloud providers, specialized AI companies, and enterprise software vendors—take a fundamentally different approach. They provide pre-built, optimized solutions with professional support, comprehensive documentation, and integrated features designed for enterprise deployment.

The primary advantage of proprietary solutions is time-to-value. Organizations can move from concept to production in weeks rather than months. Pre-built integrations with popular business applications, data sources, and communication platforms eliminate the need to build custom connectors. Professional support teams provide SLAs, ensuring rapid response to issues. Comprehensive documentation and training resources reduce the learning curve for development teams.

Proprietary platforms also excel at handling the operational complexity of AI systems at scale. They manage infrastructure provisioning, security hardening, compliance monitoring, and performance optimization transparently. Organizations benefit from the vendor’s investments in reliability, security, and scalability without needing to replicate these capabilities internally. For teams without deep AI/ML expertise, this managed approach dramatically reduces risk and accelerates time-to-market.

The trade-off is reduced flexibility and potential vendor lock-in. Proprietary platforms typically offer customization within predefined boundaries. If your requirements fall outside the platform’s design assumptions, you may face significant constraints. Additionally, migrating from one proprietary platform to another requires substantial effort, creating a form of lock-in that can limit your strategic options over time.

Understanding the true cost of each approach requires looking beyond licensing fees to total cost of ownership (TCO). This analysis must account for direct costs, infrastructure expenses, personnel requirements, and opportunity costs.

| Cost Category | Open-Source | Proprietary |
|---|---|---|
| Licensing Fees | $0 | $5,000–$50,000+/year |
| Infrastructure (Annual) | $30,000–$100,000+ | $10,000–$30,000 |
| Development Team (Annual) | $200,000–$500,000+ | $50,000–$150,000 |
| Security & Compliance | $20,000–$60,000 | Included |
| Support & Training | Community (variable) | $10,000–$30,000 |
| Total Year 1 TCO | $250,000–$660,000+ | $75,000–$260,000 |
| Scaling Costs | Increases significantly | Predictable, linear |

This table reveals a critical insight: while open-source has zero licensing costs, its total cost of ownership often exceeds that of proprietary solutions, particularly in the first one to two years. The gap narrows over time as organizations amortize development investments, but the initial financial burden of open-source is substantial.
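To make the arithmetic behind the "Total Year 1 TCO" row explicit, here is a minimal sketch that sums the low and high ends of each cost category from the table. Treating "Included" and "Community (variable)" as $0 is an assumption of this sketch, and the names `COSTS` and `year_one_tco` are illustrative only.

```python
# Year 1 cost ranges in USD as (low, high), copied from the table above.
# Assumption: "Included" and "Community (variable)" are counted as $0.
COSTS = {
    "open_source": {
        "licensing": (0, 0),
        "infrastructure": (30_000, 100_000),
        "development_team": (200_000, 500_000),
        "security_compliance": (20_000, 60_000),
        "support_training": (0, 0),
    },
    "proprietary": {
        "licensing": (5_000, 50_000),
        "infrastructure": (10_000, 30_000),
        "development_team": (50_000, 150_000),
        "security_compliance": (0, 0),
        "support_training": (10_000, 30_000),
    },
}


def year_one_tco(categories):
    """Sum the low ends and the high ends of every cost category."""
    low = sum(lo for lo, _ in categories.values())
    high = sum(hi for _, hi in categories.values())
    return low, high


if __name__ == "__main__":
    for approach, categories in COSTS.items():
        low, high = year_one_tco(categories)
        print(f"{approach}: ${low:,} to ${high:,}")
```

Under these assumptions the sums match the table: $250,000 to $660,000 for open-source and $75,000 to $260,000 for proprietary. Swapping in your own ranges is the quickest way to see which category dominates your estimate.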

Open-source solutions eliminate licensing fees entirely. You can deploy unlimited instances without paying per-seat, per-API-call, or per-deployment fees. This advantage is particularly significant for organizations planning large-scale deployments across multiple business units or geographies.

Proprietary solutions typically employ one of three pricing models: subscription-based (monthly or annual fees), consumption-based (pay-per-API-call or per-token), or hybrid models combining both. Subscription costs range from $5,000 to $50,000 annually depending on features and scale. Consumption-based pricing can become expensive at scale—a single large-scale AI agent deployment might generate millions of API calls monthly, resulting in substantial bills.

However, proprietary vendors often provide volume discounts, committed-use discounts, and bundled pricing that can reduce effective costs for large deployments. Additionally, the predictability of subscription pricing enables accurate budgeting, whereas consumption-based open-source infrastructure costs can fluctuate significantly based on usage patterns.
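The three pricing models can be compared with some back-of-the-envelope arithmetic. The sketch below is illustrative only: the $48,000 subscription sits inside the $5,000 to $50,000 range quoted above, while the $2.00 per 1,000 calls rate, the $2,000 base fee, and the 1,000,000 included calls are hypothetical figures, not vendor quotes.

```python
def consumption_monthly_cost(calls, price_per_1k_calls):
    """Pure pay-per-call pricing: cost grows linearly with call volume."""
    return calls / 1_000 * price_per_1k_calls


def hybrid_monthly_cost(calls, base_fee, included_calls, price_per_1k_calls):
    """Hybrid pricing: a flat base fee plus overage beyond an included quota."""
    overage_calls = max(0, calls - included_calls)
    return base_fee + overage_calls / 1_000 * price_per_1k_calls


def break_even_calls(annual_subscription, price_per_1k_calls):
    """Monthly call volume at which pay-per-call equals a flat subscription."""
    return annual_subscription / 12 / price_per_1k_calls * 1_000


if __name__ == "__main__":
    ANNUAL_SUBSCRIPTION = 48_000  # within the article's $5,000-$50,000 range
    PRICE_PER_1K_CALLS = 2.00     # hypothetical rate, not a vendor quote
    BASE_FEE = 2_000              # hypothetical monthly base fee
    INCLUDED_CALLS = 1_000_000    # hypothetical monthly quota
    calls = 5_000_000             # "millions of API calls monthly"

    consumption = consumption_monthly_cost(calls, PRICE_PER_1K_CALLS)
    hybrid = hybrid_monthly_cost(calls, BASE_FEE, INCLUDED_CALLS, PRICE_PER_1K_CALLS)
    threshold = break_even_calls(ANNUAL_SUBSCRIPTION, PRICE_PER_1K_CALLS)

    print(f"Subscription: ${ANNUAL_SUBSCRIPTION / 12:,.0f}/month")
    print(f"Consumption:  ${consumption:,.0f}/month")
    print(f"Hybrid:       ${hybrid:,.0f}/month")
    print(f"Break-even:   {threshold:,.0f} calls/month")
```

With these hypothetical inputs, pay-per-call is cheaper than the subscription below 2,000,000 calls per month. At 5,000,000 calls it costs $10,000 per month, more than double the $4,000 monthly equivalent of the subscription.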

This is where the true cost of open-source becomes apparent. Running AI agents at scale requires substantial computational resources. Large language models demand GPU or TPU capacity, vector databases require persistent storage and indexing infrastructure, and orchestration systems need reliable, high-availability platforms.

Open-source deployments typically require:

Annual infrastructure costs for a production open-source AI agent system typically range from $30,000 to $100,000 or more, depending on scale and performance requirements.

Proprietary solutions abstract away much of this complexity. The vendor manages infrastructure provisioning, scaling, and optimization. Organizations pay for consumption through the vendor’s pricing model, but the vendor’s economies of scale typically result in lower per-unit costs. Additionally, proprietary platforms handle auto-scaling, load balancing, and disaster recovery automatically, reducing operational overhead.

The most significant hidden cost of open-source AI agent development is personnel. Building, deploying, and maintaining open-source AI systems requires specialized expertise that commands premium salaries.

A typical open-source AI agent project requires:

A modest team of 5-6 engineers costs $650,000–$1,200,000 annually. For organizations without existing AI/ML capabilities, building this team represents a multi-year commitment and substantial financial investment.

Proprietary solutions reduce personnel requirements significantly. Organizations can often deploy and manage proprietary AI agent platforms with smaller teams—sometimes just 1-2 engineers plus business analysts. This reduction in headcount directly translates to lower personnel costs and faster time-to-productivity.

Where open-source solutions excel is in flexibility and customization. You have complete control over the codebase, can modify algorithms, integrate custom components, and tailor the system to your specific requirements.

This flexibility is invaluable for organizations with unique requirements:

Proprietary solutions, by contrast, offer customization within predefined boundaries. Most platforms provide configuration options, API extensions, and plugin architectures, but fundamental architectural changes are typically not possible. If your requirements fall outside the platform’s design assumptions, you may face significant constraints.

This trade-off is critical: open-source provides maximum flexibility but requires substantial expertise to realize that flexibility. Proprietary solutions provide less flexibility but make it easier to achieve your goals within the platform’s design parameters.

Performance and scalability considerations differ significantly between open-source and proprietary approaches.

Open-source AI agent frameworks are inherently flexible but require careful optimization to achieve production-grade performance. Performance depends entirely on your implementation choices—the infrastructure you provision, the models you select, the algorithms you implement, and the optimizations you apply. Organizations with strong engineering teams can achieve excellent performance, but suboptimal implementations can result in slow, unreliable systems.

Scalability with open-source requires sophisticated infrastructure management. Scaling from handling 100 concurrent agents to 10,000 concurrent agents requires careful planning around distributed computing, load balancing, caching strategies, and database optimization. Many organizations underestimate the complexity of scaling open-source systems, discovering only in production that their architecture doesn’t scale as expected.
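As a rough first step in that planning, a capacity estimate can show how many worker processes a given level of concurrency implies. This is a simplified sketch under stated assumptions: `agents_per_worker` (25) and the 20% headroom are hypothetical values you would replace with measurements from your own load tests, and the estimate ignores bottlenecks such as model throughput, database contention, and network limits.

```python
def workers_needed(concurrent_agents, agents_per_worker, headroom_percent=20):
    """Estimate workers required to host the agents with spare capacity.

    All parameters are planning inputs, not measured values.
    """
    if agents_per_worker <= 0:
        raise ValueError("agents_per_worker must be positive")
    # Integer arithmetic avoids floating-point rounding at the ceiling step.
    required = concurrent_agents * (100 + headroom_percent)
    capacity = agents_per_worker * 100
    return -(-required // capacity)  # ceiling division


if __name__ == "__main__":
    AGENTS_PER_WORKER = 25  # hypothetical; measure this under real load
    for agents in (100, 1_000, 10_000):
        count = workers_needed(agents, AGENTS_PER_WORKER)
        print(f"{agents:>6} concurrent agents -> {count} workers")
```

With these inputs, 100 concurrent agents need 5 workers and 10,000 need 480. The worker count grows linearly, but the coordination work around it (load balancing, caching, database tuning) usually does not, which is where the planning effort described above goes.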

Proprietary solutions are typically optimized for scale from the ground up. Vendors invest heavily in performance optimization, having learned from thousands of deployments. Auto-scaling, load balancing, and failover mechanisms are built-in and transparent. Organizations can scale from small pilots to enterprise-wide deployments without architectural changes.

However, proprietary solutions may impose performance constraints. If your use case requires extreme performance optimization or specialized hardware, proprietary platforms might not offer the flexibility to achieve your goals. Additionally, proprietary platforms may have performance limitations based on their architectural choices—limitations that open-source solutions could overcome through customization.

Security and compliance considerations are paramount for enterprise AI deployments, and the approaches differ significantly.

Open-source solutions place security responsibility entirely on the organization. You must:

While open-source code transparency enables security audits, it also means security vulnerabilities are visible to potential attackers. Organizations must maintain vigilance, applying security patches promptly and monitoring security advisories continuously.

Compliance with regulations and standards like GDPR, HIPAA, and SOC 2, along with industry-specific requirements, falls entirely on the organization. You must implement controls, maintain documentation, and demonstrate compliance to auditors. For highly regulated industries, this responsibility is substantial.

Proprietary solutions typically include security and compliance features built into the platform. Vendors employ dedicated security teams, conduct regular security audits, and maintain compliance certifications. Organizations benefit from the vendor’s security investments without needing to replicate these capabilities internally.

However, proprietary solutions introduce different security considerations. You must trust the vendor’s security practices, have limited visibility into their infrastructure, and depend on their security roadmap. Additionally, proprietary solutions may impose constraints on data handling—for example, some cloud-based proprietary platforms may not support on-premises deployment, creating data residency challenges for regulated industries.

The support and documentation landscape differs dramatically between open-source and proprietary solutions.

Open-source solutions rely on community support. Documentation is often community-contributed, which can be comprehensive but may also be incomplete, outdated, or poorly organized. Support comes from community forums, GitHub issues, and Stack Overflow—responses are typically free but unpredictable in quality and response time. For critical issues, you may need to hire consultants or contribute fixes yourself.

This community-driven approach has advantages: the community often provides creative solutions, workarounds, and innovations. However, it also means you cannot rely on guaranteed response times or professional support for critical issues.

Proprietary solutions provide professional support with SLAs. Vendors employ support teams trained on their platforms, provide documentation written by professional technical writers, and offer multiple support channels (email, phone, chat). Response times are guaranteed, and escalation paths exist for critical issues.

For organizations without deep technical expertise, professional support dramatically reduces risk and accelerates problem resolution. For organizations with strong internal capabilities, community support may be sufficient, though it requires more self-sufficiency.

The pace of innovation differs between open-source and proprietary approaches, with trade-offs in both directions.

Open-source communities often innovate faster than proprietary vendors. New techniques, models, and capabilities appear first in open-source projects. Organizations with strong engineering teams can adopt these innovations immediately, gaining competitive advantages. The open-source community is particularly strong in research-oriented innovations—new architectures, training techniques, and optimization methods often appear first in open-source projects.

Proprietary vendors prioritize stability and reliability over rapid innovation. New features are tested extensively before release, ensuring they don’t destabilize production systems. This conservative approach reduces risk but means organizations may wait months or years for features available in open-source projects.

However, proprietary vendors often innovate in areas that matter for enterprise deployments: integration with business applications, compliance features, operational tooling, and performance optimization. These innovations may not be as visible as research-oriented innovations, but they directly impact productivity and operational efficiency.

Understanding how these trade-offs play out in practice requires examining realistic scenarios.

A startup building an AI-powered customer service platform with 10 employees and limited funding chooses open-source. Initial costs appear attractive: zero licensing fees, and the founding team includes two experienced ML engineers.

Year 1 Costs:

Challenges encountered:

Year 2 Costs:

By year 2, the startup realizes that open-source is consuming more resources than anticipated. The team spends significant time on infrastructure and operational concerns rather than product innovation.

A large financial services company with 50 AI/ML engineers and established infrastructure chooses open-source for a new AI agent platform. The organization has the expertise to manage open-source complexity and values the flexibility to customize agents for specific business requirements.

Year 1 Costs:

Advantages realized:

Year 2 and beyond:

For this organization, open-source was the right choice. The existing expertise, substantial budget, and need for customization made the flexibility worth the cost.

A mid-market B2B SaaS company with 200 employees and limited AI expertise chooses a proprietary AI agent platform. The organization prioritizes rapid deployment and operational simplicity over customization.

Year 1 Costs:

Advantages realized:

Year 2 and beyond:

For this organization, proprietary was the right choice. The rapid deployment, minimal operational overhead, and professional support enabled the company to realize value quickly without requiring substantial AI expertise.

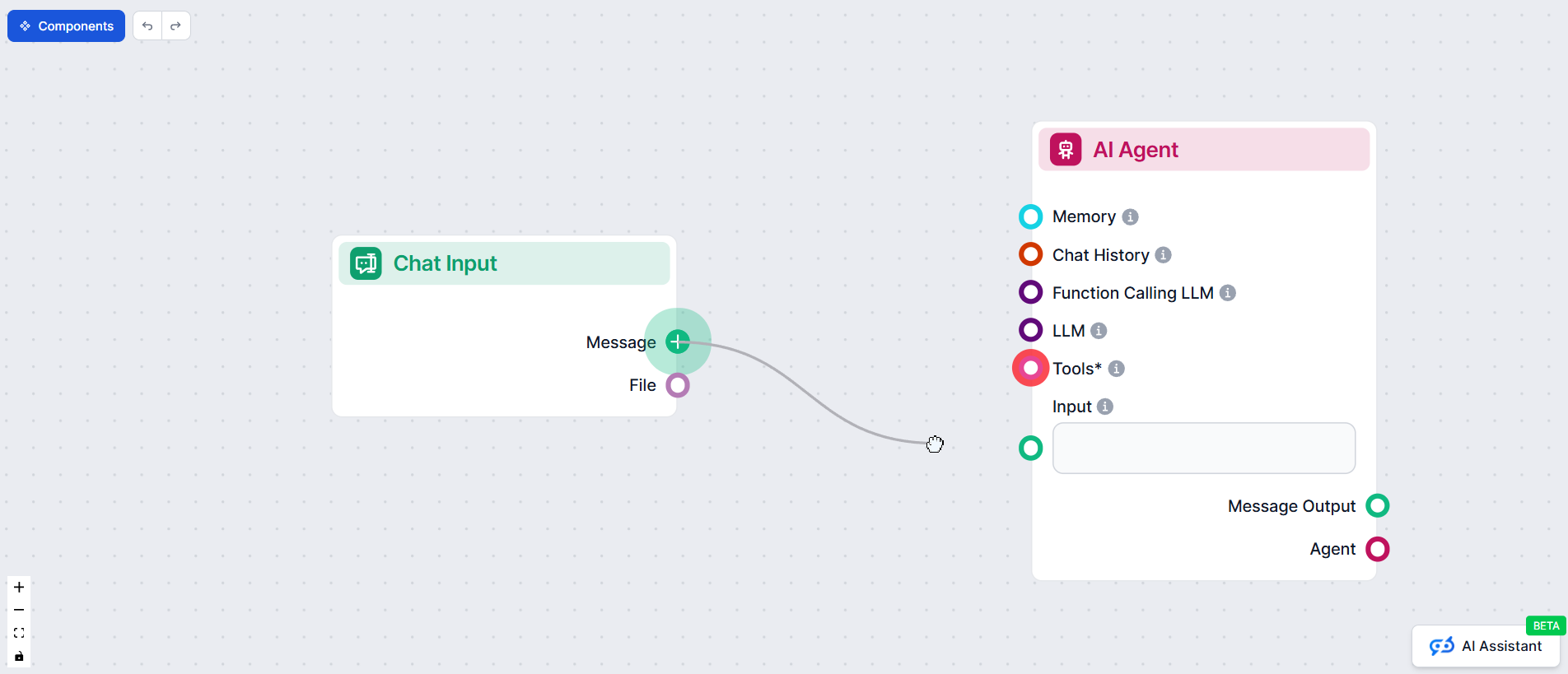

Organizations facing the open-source vs. proprietary decision often overlook a third option: using workflow automation platforms like FlowHunt to bridge the gap between these approaches.

FlowHunt enables organizations to leverage the flexibility of open-source AI agent frameworks while reducing operational complexity and accelerating time-to-value. Rather than choosing between open-source and proprietary, organizations can use FlowHunt to:

FlowHunt’s approach is particularly valuable for organizations that want the flexibility of open-source but need the operational simplicity of proprietary solutions. By automating workflow orchestration, monitoring, and deployment, FlowHunt reduces the personnel requirements and operational complexity that typically make open-source expensive.

For example, an organization might use open-source frameworks like LangChain or AutoGen for core agent logic while using FlowHunt to orchestrate agent workflows, manage data pipelines, and automate deployment. This hybrid approach combines the customization benefits of open-source with the operational simplicity of proprietary solutions.

Making the right choice between open-source and proprietary AI agent builders requires honest assessment of your organization’s capabilities, requirements, and constraints.

Choose open-source if:

Choose proprietary if:

Consider a hybrid approach if:

The AI agent builder market is evolving rapidly. Several trends are shaping the landscape:

Consolidation and specialization: The market is consolidating around specialized platforms serving specific industries or use cases. Rather than general-purpose platforms, we’re seeing the emergence of industry-specific proprietary solutions (healthcare AI agents, financial services AI agents, etc.) alongside specialized open-source frameworks.

Hybrid architectures becoming standard: Organizations are increasingly adopting hybrid approaches, combining open-source components with proprietary platforms. This trend reflects the recognition that neither approach is universally superior—the optimal solution depends on specific requirements.

Managed open-source services: A new category of vendors is emerging that provides managed services around open-source AI frameworks. These vendors handle infrastructure, security, compliance, and support while preserving the flexibility of open-source. This category may represent the future for many organizations.

Increased focus on operational tooling: As AI agents move from research projects to production systems, operational tooling becomes increasingly important. Vendors are investing heavily in monitoring, debugging, and optimization tools that make AI agents easier to operate at scale.

Regulatory and compliance evolution: As AI agents become more prevalent, regulatory frameworks are evolving. Proprietary solutions with built-in compliance features may gain an advantage in regulated industries, while open-source solutions will need to invest in compliance tooling.


Arshia is an AI Workflow Engineer at FlowHunt. With a background in computer science and a passion for AI, he specializes in creating efficient workflows that integrate AI tools into everyday tasks, enhancing productivity and creativity.
