LLM Gemini

FlowHunt supports dozens of AI models, including Google Gemini. Learn how to use Gemini in your AI tools and chatbots, switch between models, and control advanc...

Component description

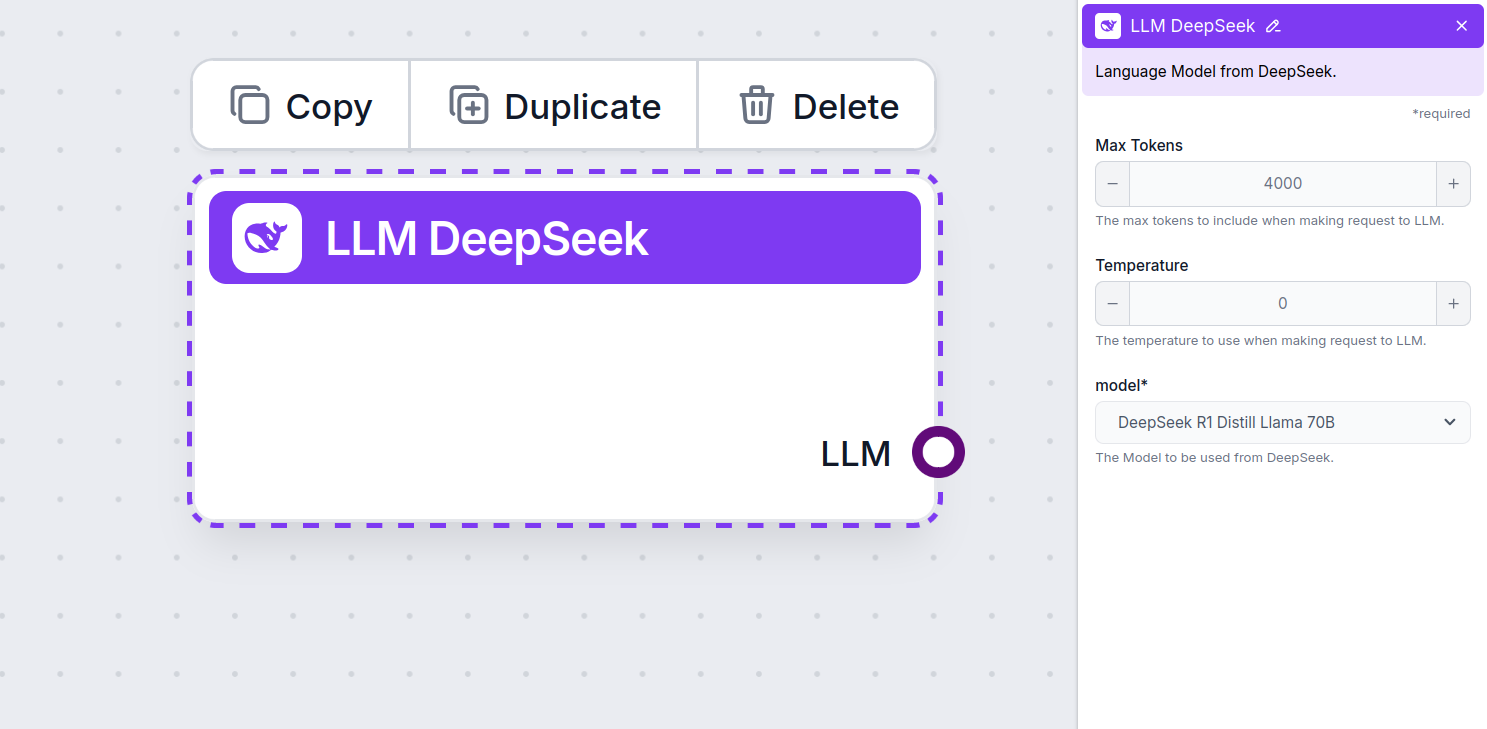

The LLM Gemini component connects Google Gemini models to your Flow. The actual generation happens in Generators and Agents, while LLM components let you swap and control the model they use.

Remember that connecting an LLM Component is optional. All components that use an LLM come with ChatGPT-4o as the default. The LLM components allow you to change the model and control model settings.

Tokens are the individual units of text the model processes and generates. Tokenization varies between models, and a single token can be a whole word, a subword, or a single character. Model pricing is usually quoted per million tokens.
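Because prices are quoted per million tokens, estimating the cost of a request is simple arithmetic. The sketch below is only an illustration: `estimate_cost` is a hypothetical helper, and the rate used is a made-up placeholder, not an actual Gemini or FlowHunt price.

```python
def estimate_cost(tokens: int, price_per_million: float) -> float:
    """Return the cost of processing `tokens` at a per-million-token price."""
    if tokens < 0 or price_per_million < 0:
        raise ValueError("tokens and price must be non-negative")
    return tokens / 1_000_000 * price_per_million


# Hypothetical rate of 0.50 (in any currency) per million tokens.
cost = estimate_cost(4_000, 0.50)
print(f"Estimated cost: {cost:.4f}")
```

Check the current pricing of the model you select before relying on any such estimate.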

The max tokens setting limits the total number of tokens that can be processed in a single interaction or request, keeping responses within reasonable bounds. The default limit is 4,000 tokens, which suits summarizing documents and combining several sources to generate an answer.
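To get a feel for how a token limit constrains a request, the following sketch checks whether a prompt plus an expected reply would fit within the limit. It assumes a rule of thumb of about 4 characters per token for English text, which is only an approximation; real tokenizers differ by model, and the function names here are hypothetical.

```python
DEFAULT_MAX_TOKENS = 4_000  # the default limit described above


def rough_token_count(text: str) -> int:
    """Crude estimate assuming ~4 characters per token (an assumption)."""
    return (len(text) + 3) // 4  # ceiling division; empty text gives 0


def fits_budget(prompt: str, expected_reply_tokens: int,
                max_tokens: int = DEFAULT_MAX_TOKENS) -> bool:
    """Check whether the prompt plus the expected reply stays within the limit."""
    return rough_token_count(prompt) + expected_reply_tokens <= max_tokens


prompt = "Summarize the attached report in three bullet points. " * 20
print(fits_budget(prompt, expected_reply_tokens=500))
```

If the check fails, either shorten the input or raise the max tokens setting in the component.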

Temperature controls the variability of answers, ranging from 0 to 1.

A low temperature of 0.1 keeps responses focused and to the point, but they may become repetitive and lacking in detail.

A high temperature of 1 allows for maximum creativity in answers but increases the risk of irrelevant or even hallucinated responses.

For example, the recommended temperature for a customer service bot is between 0.2 and 0.5. This level should keep the answers relevant and to the script while allowing for a natural response variation.
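One common way to picture temperature is as a divisor applied to the model's token scores before they are turned into probabilities. The sketch below uses made-up scores for three candidate tokens and is a generic illustration of temperature scaling, not a description of how Gemini or FlowHunt implement it internally.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # A temperature of 0 is usually treated as "always pick the top token".
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
low = softmax_with_temperature(logits, 0.1)
high = softmax_with_temperature(logits, 1.0)
print("T=0.1:", [round(p, 3) for p in low])
print("T=1.0:", [round(p, 3) for p in high])
```

At the low temperature almost all probability lands on the top-scoring token, which matches the focused but repetitive behavior described above. At the high temperature the alternatives keep a meaningful share, which is where the extra variation comes from.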

This is the model picker, where you’ll find all the supported Gemini models. We support all the latest ones:

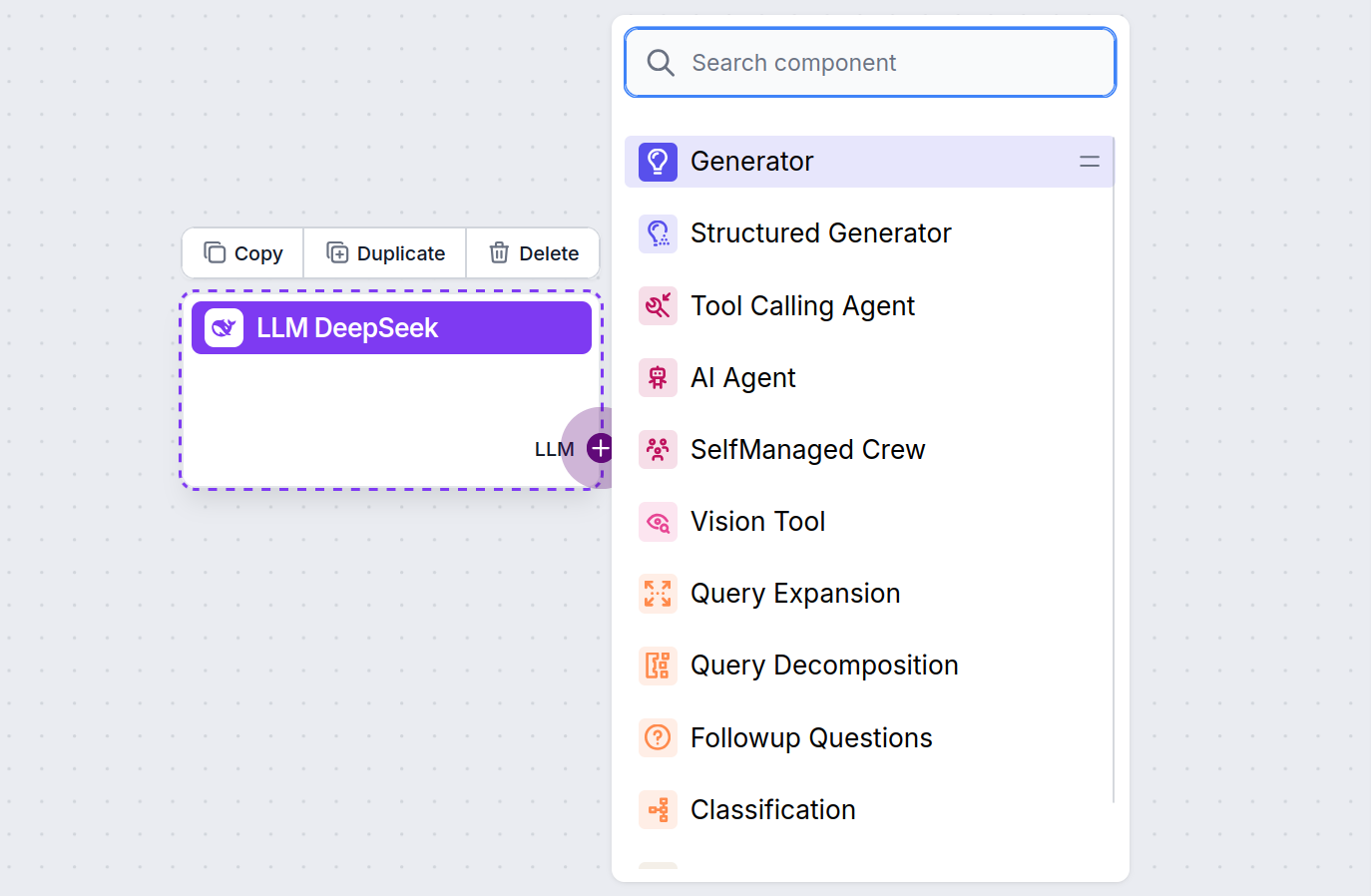

You’ll notice that all LLM components only have an output handle. Input doesn’t pass through the component, as it only represents the model, while the actual generation happens in AI Agents and Generators.

The LLM handle is always purple. The LLM input handle is found on any component that uses AI to generate text or process data. You can see the options by clicking the handle:

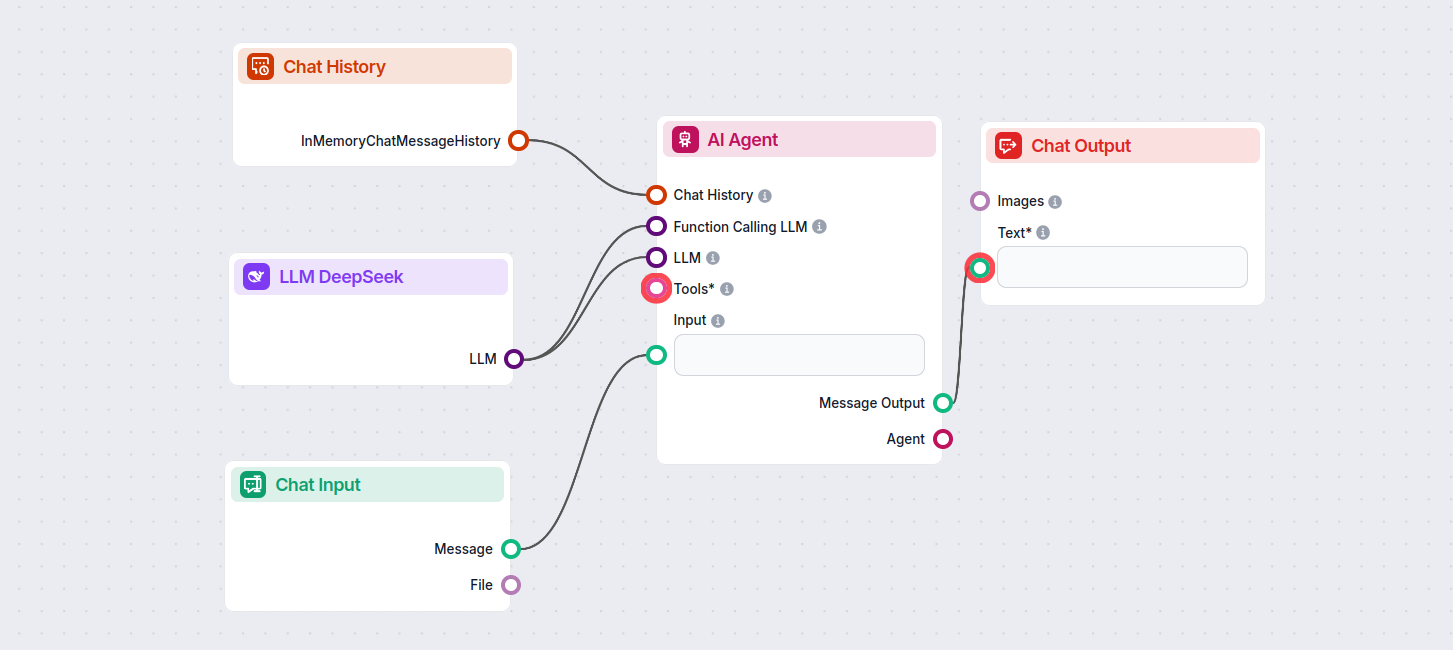

This allows you to create all sorts of tools. Let’s see the component in action. Here’s a simple AI Agent chatbot Flow that uses a Gemini model to generate responses. You can think of it as a basic Gemini chatbot.

This simple Chatbot Flow includes:

LLM DeepSeek is a component in FlowHunt that allows you to connect and control DeepSeek AI models for text and image generation, enabling powerful chatbots and automated flows.

FlowHunt supports all the latest DeepSeek models, including DeepSeek R1, known for its speed and performance, especially compared to other leading AI models.

You can adjust max tokens for response length and temperature for response creativity, as well as swap between available DeepSeek models directly in your FlowHunt dashboard.

No, connecting an LLM component is optional. By default, FlowHunt uses ChatGPT-4o, but adding LLM DeepSeek lets you swap and control which AI model powers your flows.

Start building smarter AI chatbots and automation tools using advanced DeepSeek models—all without complex setup or multiple subscriptions.

