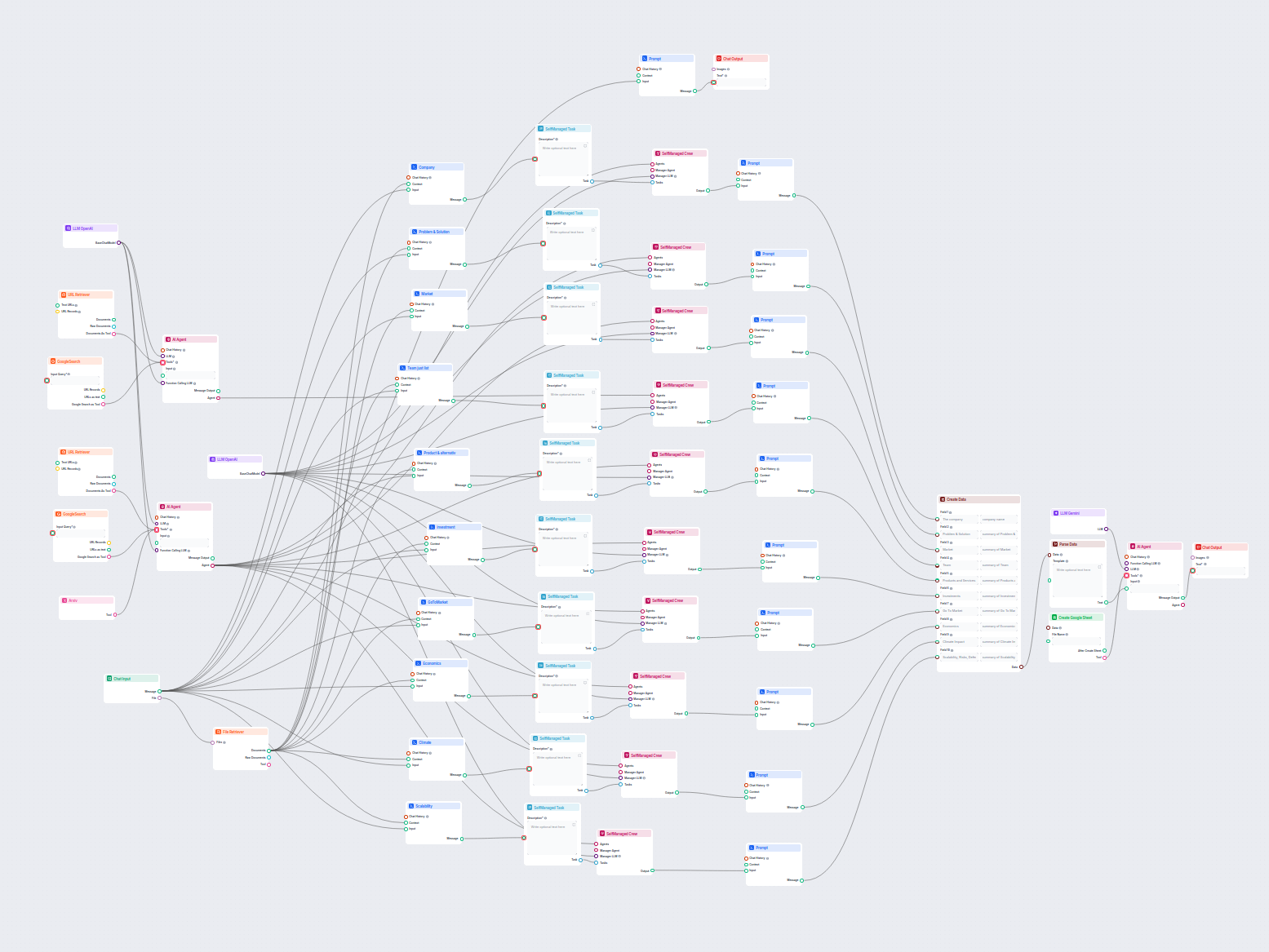

AI Company Analysis to Google Sheets

This AI-powered workflow delivers a comprehensive, data-driven company analysis. It gathers information on company background, market landscape, team, products,...

Component description

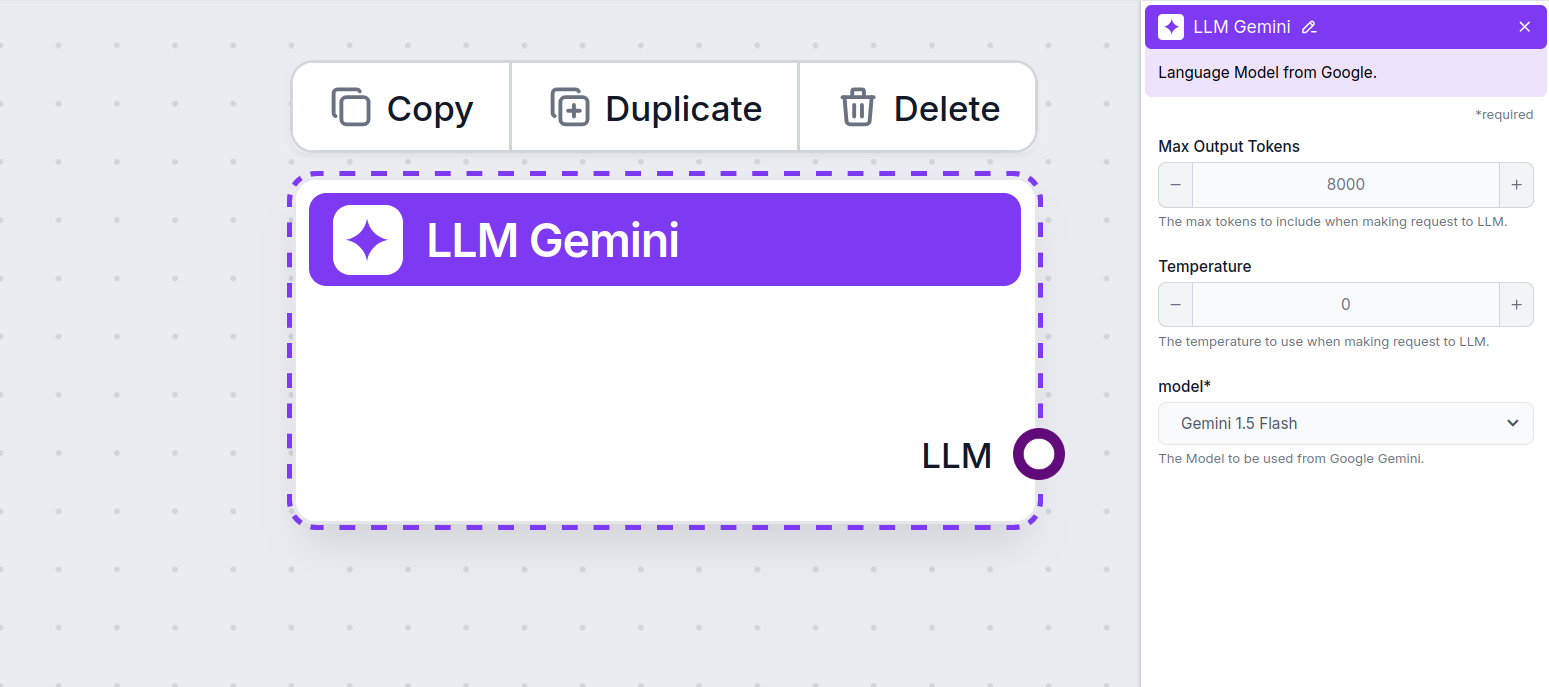

The LLM Gemini component connects Google's Gemini models to your flow. Generators and Agents do the actual text generation; LLM components let you choose which model they use and adjust its settings.

Connecting an LLM component is optional. Every component that uses an LLM comes with ChatGPT-4o as the default, so add this component only if you want to switch models or gain more control over model settings.

Tokens are the individual units of text the model processes and generates. How text is split into tokens varies by model: a single token can be a whole word, a subword, or a single character. Model usage is typically priced per million tokens.
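Because pricing is quoted per million tokens, the cost of a single request is simple arithmetic. The sketch below is only an illustration: the function name `estimate_cost` and the prices passed to it are placeholders, not actual Gemini or FlowHunt pricing.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Estimate the cost of one request, in the same currency as the prices."""
    input_cost = (input_tokens / 1_000_000) * input_price_per_million
    output_cost = (output_tokens / 1_000_000) * output_price_per_million
    return input_cost + output_cost


# Placeholder prices for illustration only; check your provider's price list.
cost = estimate_cost(
    input_tokens=3_000,
    output_tokens=1_000,
    input_price_per_million=0.10,
    output_price_per_million=0.40,
)
print(f"Estimated cost per request: {cost:.6f}")
```

Input and output tokens are kept separate here because many providers price them differently.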

The max tokens setting limits the total number of tokens that can be processed in a single interaction or request, keeping responses within reasonable bounds. The default limit is 4,000 tokens, which is generally enough for summarizing documents and combining several sources into an answer.
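To get a feel for how quickly a 4,000-token limit fills up, you can roughly estimate how much of it a prompt uses. This is a minimal sketch under a loud assumption: it approximates one token as about four characters of English text, which is a rule of thumb and not a real tokenizer. Actual counts depend on the model, and the helper names are hypothetical.

```python
DEFAULT_MAX_TOKENS = 4_000  # default limit described above
CHARS_PER_TOKEN = 4         # rough rule of thumb for English, NOT a real tokenizer


def rough_token_count(text: str) -> int:
    """Approximate the token count of a text using ceiling division."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def remaining_budget(prompt: str, max_tokens: int = DEFAULT_MAX_TOKENS) -> int:
    """Approximate how many tokens are left for the response."""
    return max(0, max_tokens - rough_token_count(prompt))


prompt = "Summarize the attached quarterly report in five bullet points."
print(rough_token_count(prompt), remaining_budget(prompt))
```

If the remaining budget is close to zero, consider shortening the input or raising the limit.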

Temperature controls the variability of answers, ranging from 0 to 1.

A low temperature such as 0.1 makes responses very focused, but potentially repetitive and lacking in detail.

A high temperature of 1 allows maximum creativity in answers but increases the risk of irrelevant or even hallucinated responses.

For example, a temperature between 0.2 and 0.5 is recommended for a customer service bot. This range keeps answers relevant and on script while allowing natural variation in responses.
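The effect of temperature can be illustrated with the way language models commonly sample their next token: the raw scores (logits) are divided by the temperature before being turned into probabilities. The sketch below shows that general technique with made-up scores. It does not describe how FlowHunt or Gemini implement the setting internally, and the function name is an assumption.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Convert raw scores to probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        # A temperature of exactly 0 is usually handled as "always pick the top token".
        raise ValueError("temperature must be greater than 0 for this sketch")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Made-up scores for three candidate tokens.
logits = [2.0, 1.0, 0.5]
for temperature in (0.1, 0.5, 1.0):
    probs = softmax_with_temperature(logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At low temperatures nearly all probability goes to the top-scoring token, which is why answers become focused and repetitive. At higher temperatures the alternatives get a real chance of being picked.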

This is the model picker, where you’ll find all the supported Gemini models from Google: Gemini 2.0 Flash Experimental, Gemini 1.5 Flash, Gemini 1.5 Flash-8B, and Gemini 1.5 Pro.

You’ll notice that all LLM components only have an output handle. Input doesn’t pass through the component, as it only represents the model, while the actual generation happens in AI Agents and Generators.
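One way to picture this split is to treat the LLM component as a settings object that a generating component consumes. The sketch below is purely conceptual: `LLMSettings`, `Generator`, and the placeholder temperature are hypothetical names and values chosen for illustration, not FlowHunt's actual API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LLMSettings:
    """Hypothetical stand-in for what an LLM component hands to a consumer."""

    model: str
    max_tokens: int = 4_000   # default limit mentioned in this article
    temperature: float = 0.3  # placeholder value, not a documented default

    def __post_init__(self) -> None:
        if not 0.0 <= self.temperature <= 1.0:
            raise ValueError("temperature must be between 0 and 1")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")


# Fallback used when no LLM component is connected.
DEFAULT_LLM = LLMSettings(model="ChatGPT-4o")


class Generator:
    """Hypothetical consumer: it generates text, the settings only describe the model."""

    def __init__(self, llm: Optional[LLMSettings] = None) -> None:
        self.llm = llm if llm is not None else DEFAULT_LLM


default_generator = Generator()
gemini_generator = Generator(LLMSettings(model="Gemini 1.5 Flash", temperature=0.2))
print(default_generator.llm.model)
print(gemini_generator.llm.model)
```

The settings object has no input of its own, which mirrors why the LLM component only exposes an output handle.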

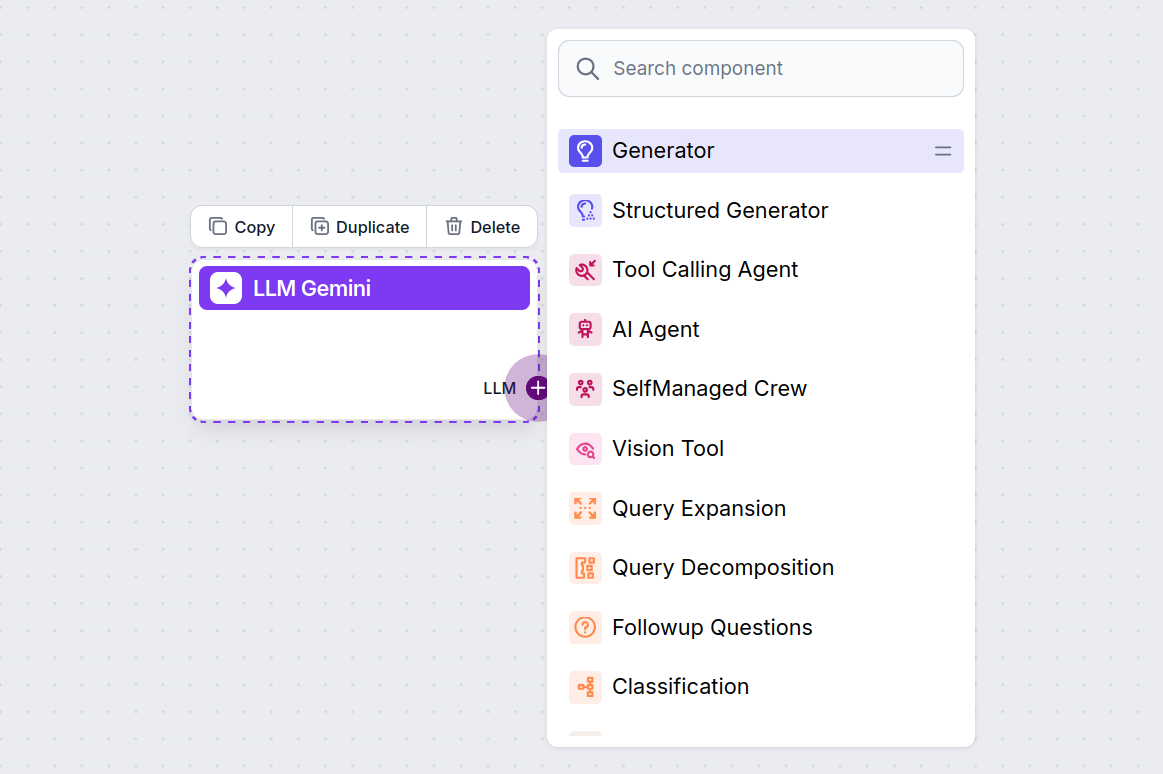

The LLM handle is always purple. The LLM input handle is found on any component that uses AI to generate text or process data. You can see the options by clicking the handle:

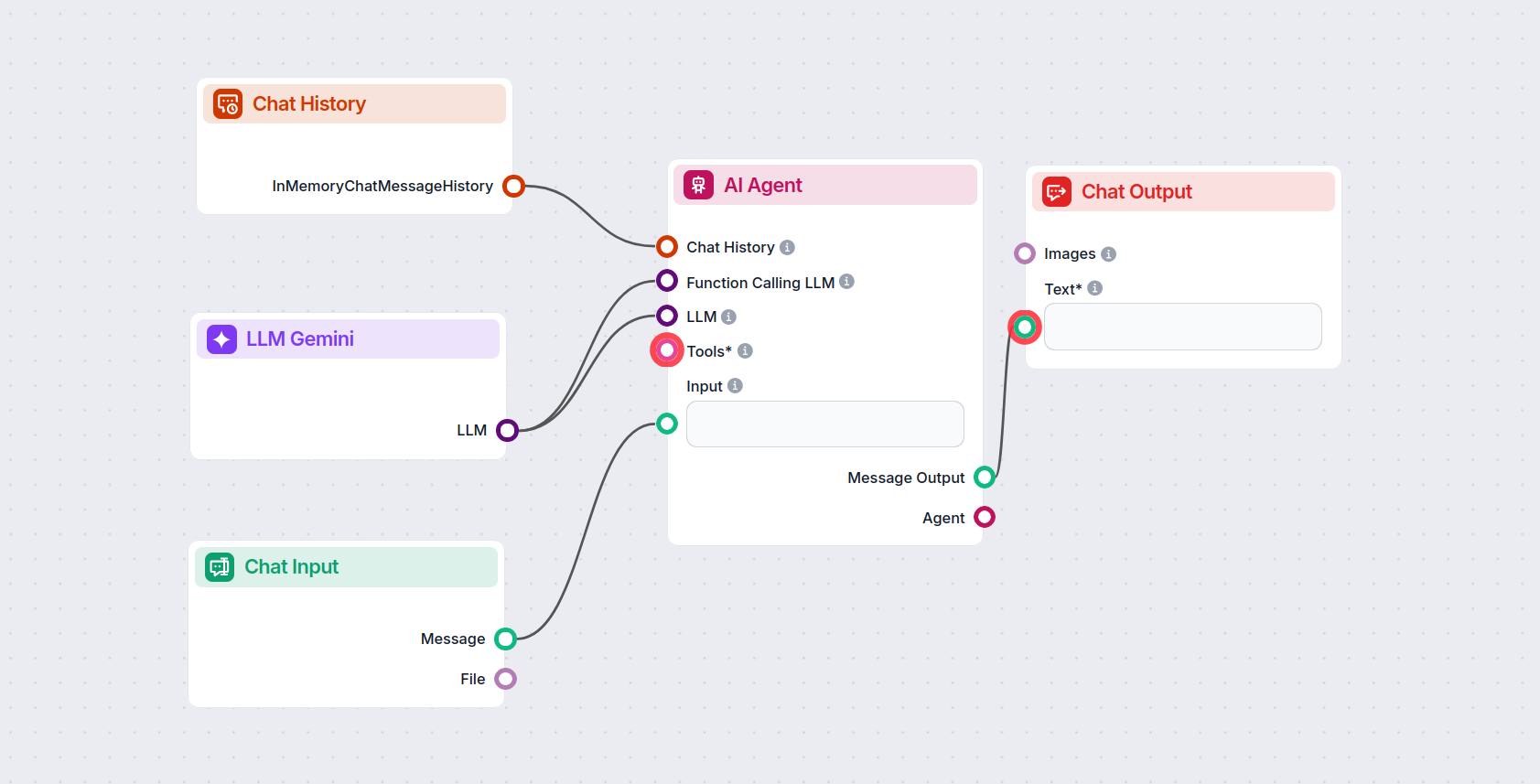

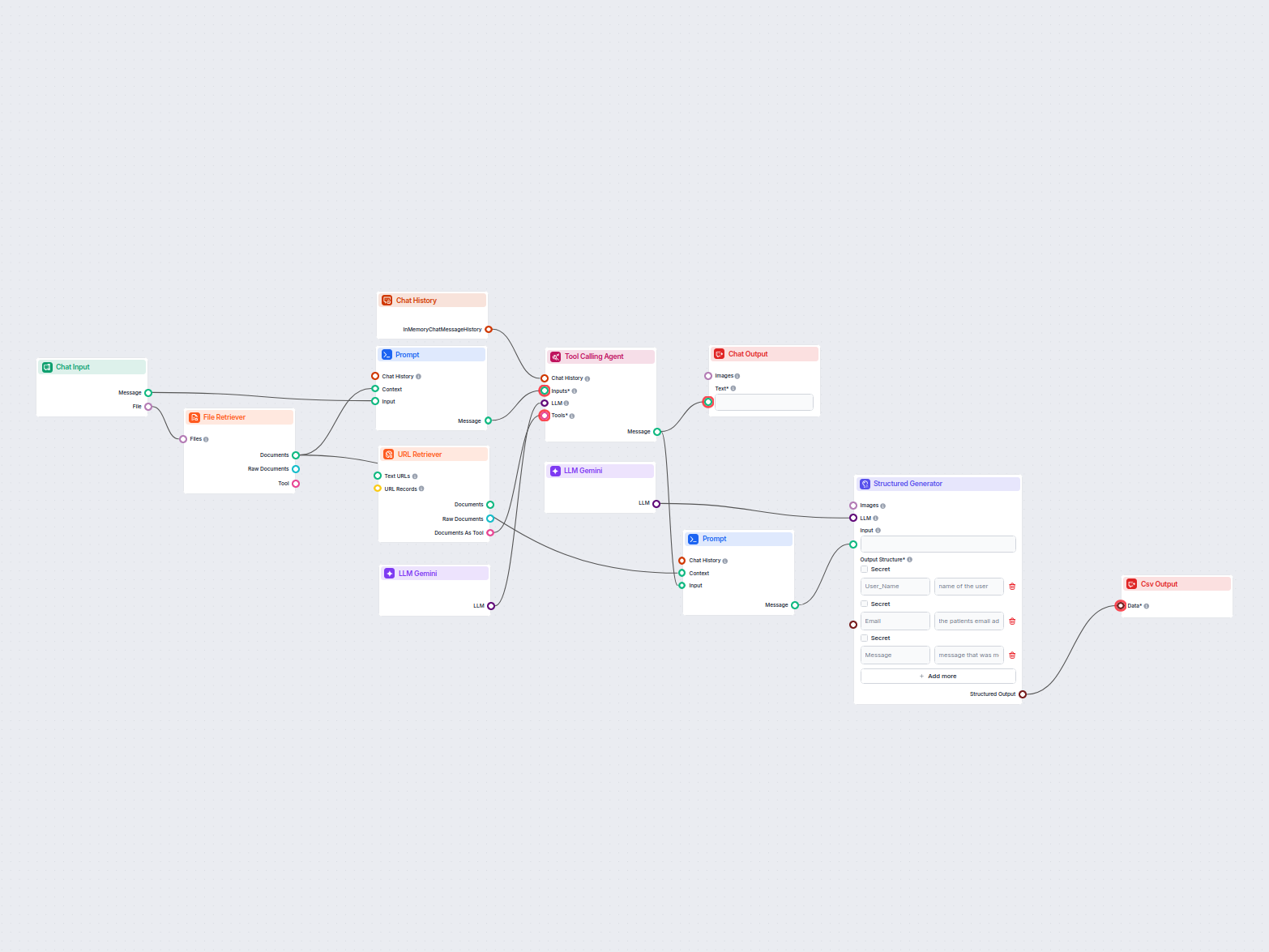

This allows you to create all sorts of tools. Let’s see the component in action. Here’s a simple AI Agent chatbot Flow that’s using Gemini 2.0 Flash Experimental to generate responses. You can think of it as a basic Gemini chatbot.

This simple Chatbot Flow includes:

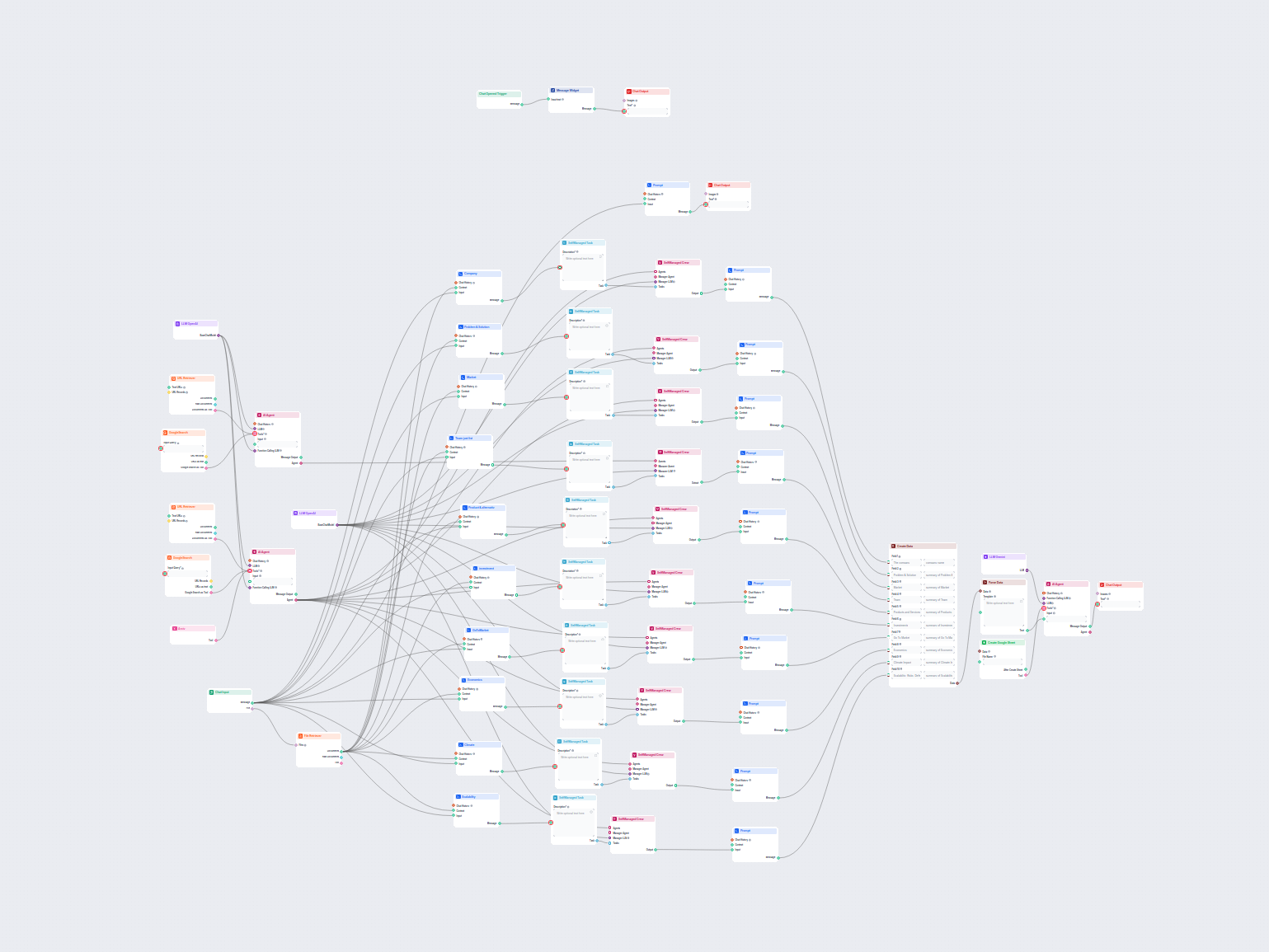

To help you get started quickly, we have prepared several example flow templates that demonstrate how to use the LLM Gemini component effectively. These templates showcase different use cases and best practices, making it easier for you to understand and implement the component in your own projects.

This AI workflow analyzes any company in depth by researching public data and documents, covering market, team, products, investments, and more. It synthesizes ...

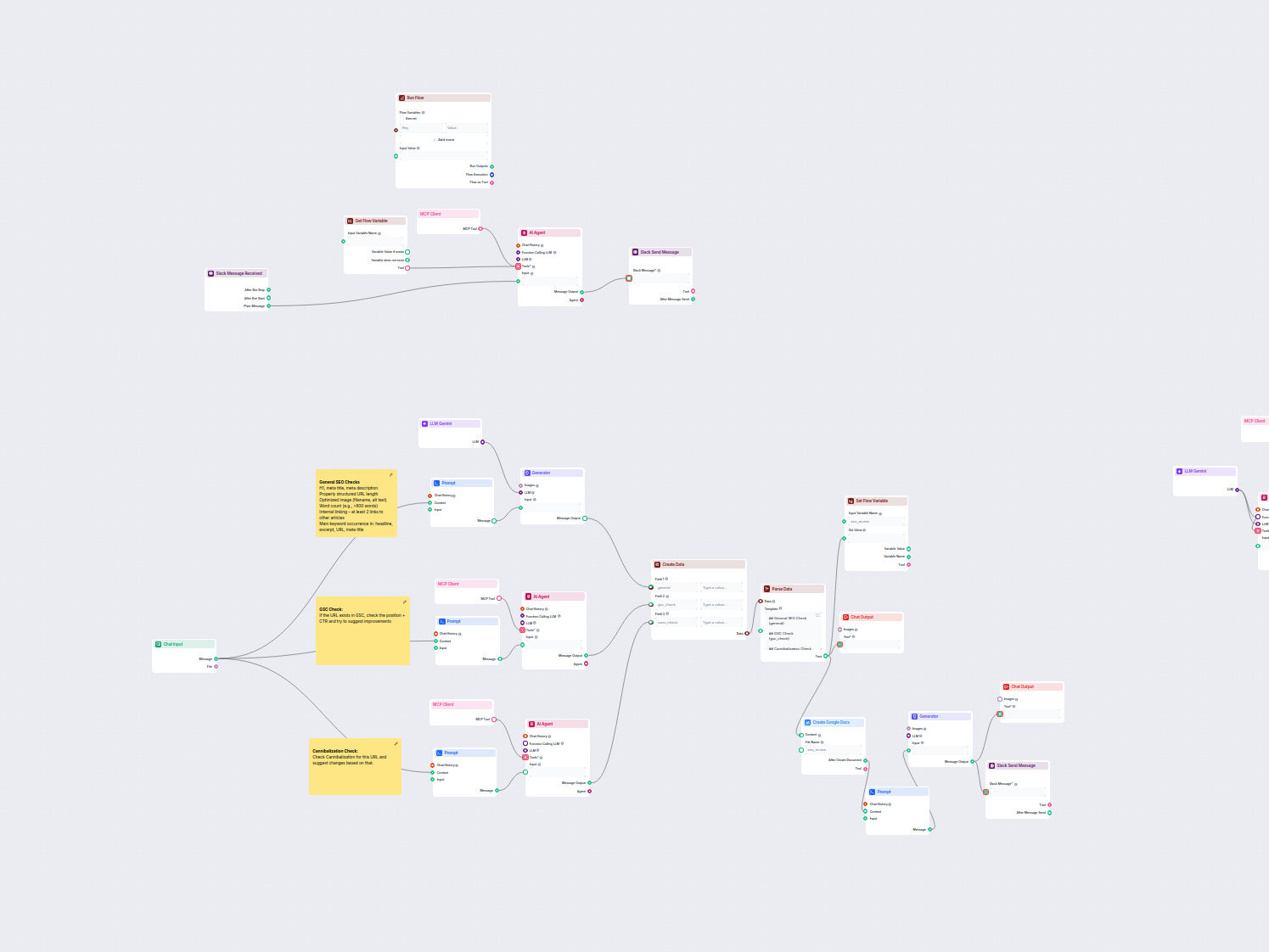

This workflow automates the SEO review and auditing process for website pages. It analyzes page content for SEO best practices, performs Google Search Console a...

This workflow extracts and organizes key information from emails and attached files, utilizes AI to process and structure the data, and outputs the results as a...

LLM Gemini connects Google's Gemini models to your FlowHunt AI flows, letting you choose from the latest Gemini variants for text generation and customize their behavior.

FlowHunt supports Gemini 2.0 Flash Experimental, Gemini 1.5 Flash, Gemini 1.5 Flash-8B, and Gemini 1.5 Pro—each offering unique capabilities for text, image, audio, and video inputs.

Max Tokens limits the response length, while Temperature controls creativity: lower values give focused answers, and higher values allow more variety. Both can be set per model in FlowHunt.

No, using LLM components is optional. All AI flows come with ChatGPT-4o by default, but adding LLM Gemini lets you switch to Google models and fine-tune their settings.
