Agentic RAG: The Evolution of Intelligent Retrieval-Augmented Generation

Discover how Agentic RAG transforms traditional retrieval-augmented generation by enabling AI agents to make intelligent decisions, reason through complex probl...

Retrieval-Augmented Generation (RAG) is an AI framework that combines traditional information retrieval systems with generative large language models (LLMs). By drawing on external knowledge, the model can generate text that is more accurate, current, and contextually relevant.

Retrieval-Augmented Generation (RAG) is an AI architecture in which a generative large language model (LLM) is paired with an external retrieval system. At query time, the retriever fetches the most relevant chunks of text from a knowledge source — a vector index, database, or document store — and the LLM uses those chunks as additional context when producing its answer.

The LLM is no longer limited to what it learned during training: its answers reflect whatever is in the connected knowledge base, which can be updated continuously without retraining the model.

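A RAG pipeline has two stages: retrieval, in which the retriever fetches the most relevant chunks from the knowledge source, and generation, in which the LLM produces its answer using those chunks as context.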
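The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration, not FlowHunt's implementation. It assumes a toy in-memory knowledge base and uses bag-of-words cosine similarity as a stand-in for an embedding-based vector index. The names `retrieve`, `build_prompt`, and `generate` are hypothetical, and `generate` is a placeholder where a real LLM call would go.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Stage 1 (retrieval): rank chunks by similarity to the query, keep the top k."""
    q = Counter(tokenize(query))
    ranked = sorted(
        chunks, key=lambda c: cosine(q, Counter(tokenize(c))), reverse=True
    )
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Stage 2 (generation), part one: place the retrieved chunks before the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


def generate(prompt: str) -> str:
    """Stage 2 (generation), part two: hypothetical placeholder for a real LLM call."""
    return f"[LLM answer based on a {len(prompt)}-character prompt]"


# Toy knowledge base (invented sample data, for illustration only).
knowledge_base = [
    "The support desk is open Monday to Friday from 9:00 to 17:00.",
    "Refunds are processed within 14 days of the return request.",
    "The premium plan includes priority email support.",
]

question = "How long do refunds take?"
top_chunks = retrieve(question, knowledge_base, k=1)
print(generate(build_prompt(question, top_chunks)))
```

Because retrieval runs at query time, appending a new chunk to `knowledge_base` makes it available to the very next question; no retraining step is involved. A production system would typically replace the word-count similarity with embeddings and a vector index.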

RAG is the right choice whenever the answer depends on data that changes over time or that wasn’t in the LLM’s training set.

For an in-depth comparison with Cache-Augmented Generation (CAG), the alternative that preloads a static knowledge set into the model’s context instead of retrieving at query time, see our blog post RAG vs CAG: which augmentation strategy fits your project.

For RAG with autonomous tool use and multi-step reasoning, see Agentic RAG.

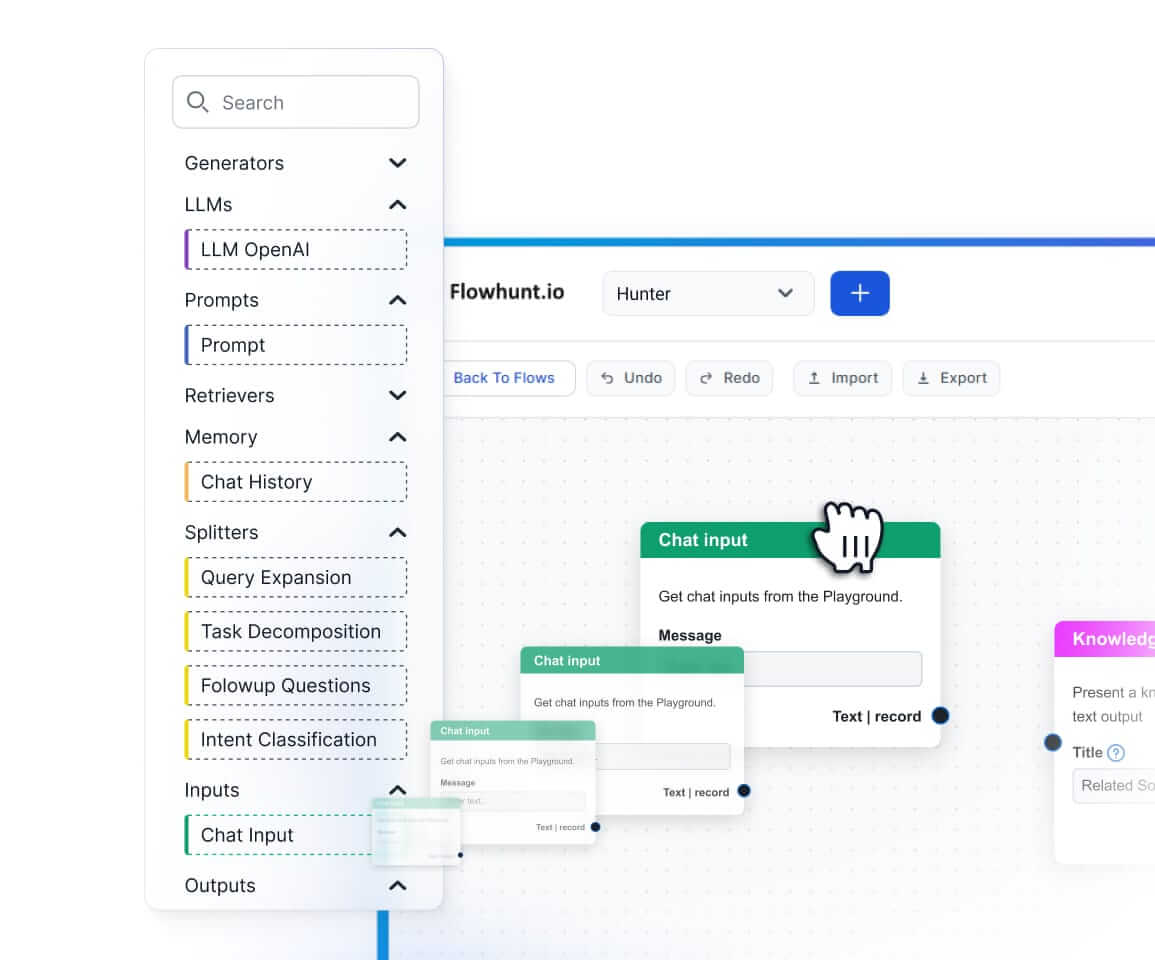

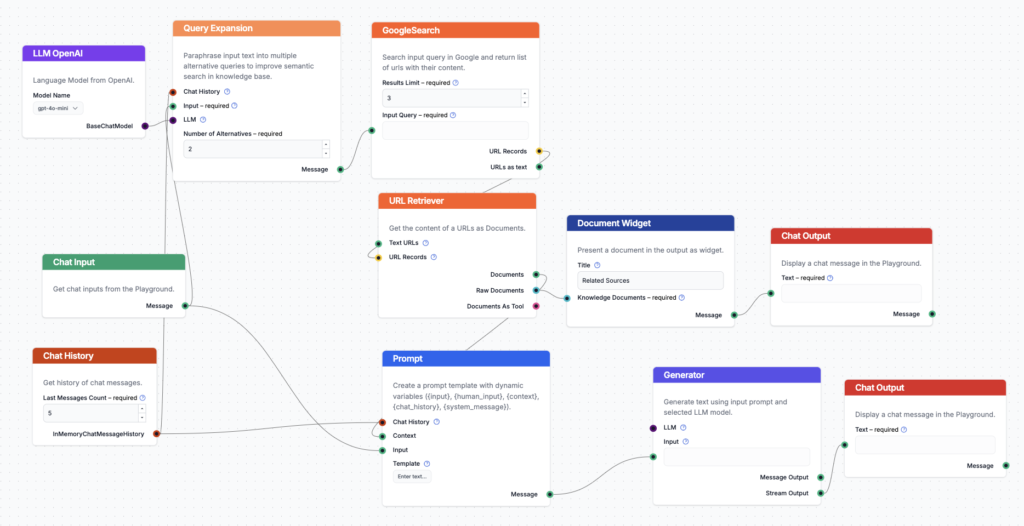

With FlowHunt you can index knowledge from any source on the internet — your website, PDFs, Google Search, Reddit, Wikipedia — and use it to power content generation or customer-support chatbots without writing retrieval code.


