
LLM Mistral on FlowHunt lets you flexibly integrate advanced Mistral AI models for smooth text generation in chatbots and AI tools.

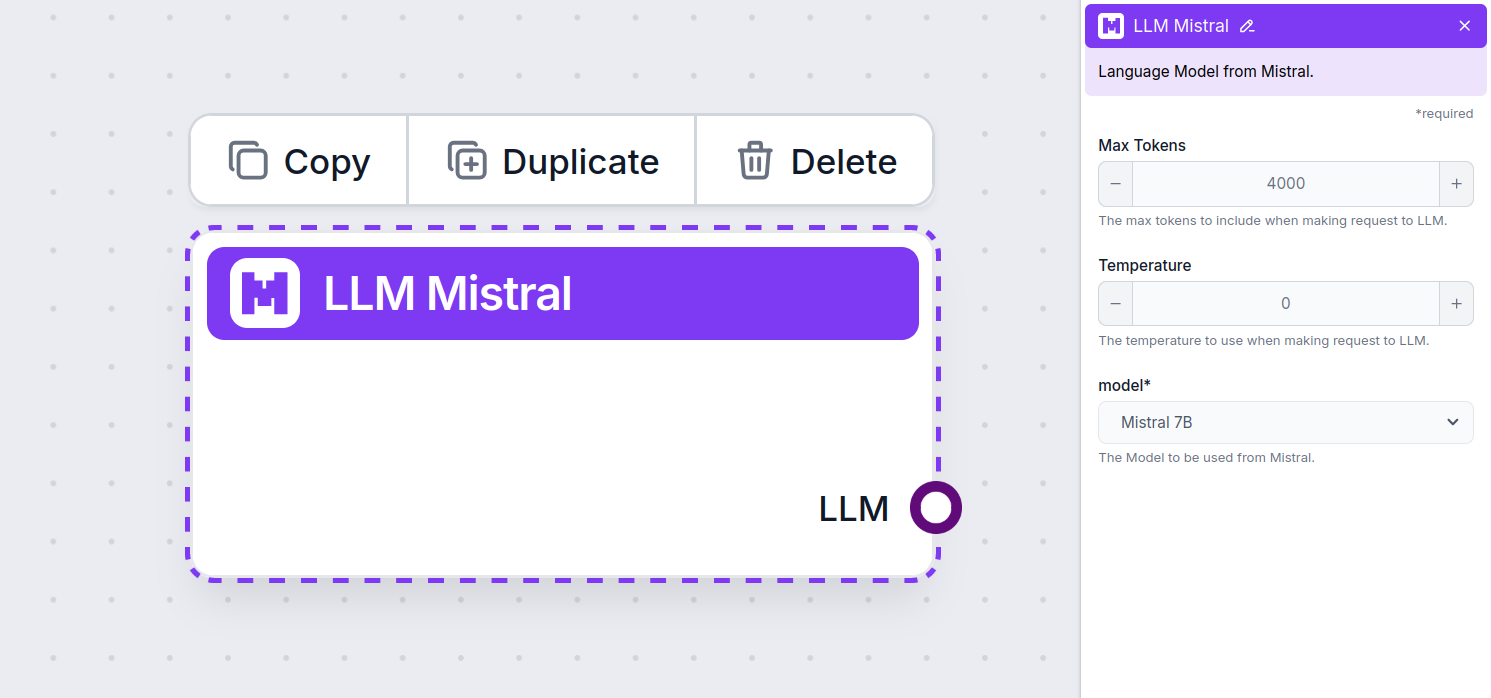

Component description

The LLM Mistral component connects Mistral models to your flow. Generators and Agents do the actual text generation. LLM components let you control which model they use. All components come with ChatGPT-4o by default. Connect this component if you want to change the model or gain more control over its settings.

Remember that connecting an LLM component is optional. All components that use an LLM default to ChatGPT-4o. LLM components let you change the model and control its settings.

Tokens are the individual units of text that the model processes and generates. Tokenization varies between models. A single token can be a whole word, a subword, or a single character. Models are usually priced per million tokens.
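The following minimal Python sketch shows how per-million pricing works by estimating the cost of a single request. It assumes separate rates for input and output tokens, which is how many providers charge. The function name `request_cost` is hypothetical, and so are the rates in the example. They are not actual Mistral or FlowHunt prices.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_million: float,
                 output_price_per_million: float) -> float:
    """Estimate the cost of one request for a model priced per million tokens."""
    # Convert each token count to millions of tokens, then apply its rate.
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical rates, not real Mistral or FlowHunt pricing.
cost = request_cost(3_000, 1_000,
                    input_price_per_million=2.0,
                    output_price_per_million=6.0)
print(f"${cost:.4f}")
```

Suppose a request uses 3,000 input tokens at 2.0 per million and 1,000 output tokens at 6.0 per million. Each part then costs 0.006, for a total of 0.012.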

The max tokens setting limits the total number of tokens processed in a single interaction or request. This keeps responses within reasonable bounds. The default limit is 4,000 tokens. That size is well suited to summarizing documents and to combining several sources into one answer.
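The sketch below shows how a total token limit constrains a request that combines several sources. It assumes the token count of each source chunk is already known. It also assumes part of the 4,000-token budget is reserved for the answer. The names `fit_to_budget` and `reserved_for_answer` are hypothetical, and FlowHunt may handle the limit differently.

```python
def fit_to_budget(chunk_token_counts: list[int], reserved_for_answer: int,
                  max_tokens: int = 4_000) -> list[int]:
    """Return the indices of source chunks that fit in one request alongside the answer."""
    budget = max_tokens - reserved_for_answer
    selected: list[int] = []
    used = 0
    for index, count in enumerate(chunk_token_counts):
        # Stop at the first chunk that would push the request over the limit.
        if used + count > budget:
            break
        selected.append(index)
        used += count
    return selected


print(fit_to_budget([1_200, 900, 1_500, 800], reserved_for_answer=500))
```

Take chunks of 1,200, 900, 1,500, and 800 tokens, with 500 tokens reserved. The budget is 3,500, so only the first two chunks (2,100 tokens) fit. Adding the third would bring the total to 3,600.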

Temperature controls the variability of answers, ranging from 0 to 1.

A low temperature such as 0.1 makes responses very much to the point, but they may be repetitive and lack detail.

A high temperature of 1 allows maximum creativity, but it risks irrelevant or even hallucinated responses.

For example, the recommended temperature for a customer service bot is between 0.2 and 0.5. This range keeps answers relevant and on script while still allowing natural variation in phrasing.
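A common way to apply temperature is to divide the model's raw scores (logits) by the temperature before the softmax step that turns them into probabilities. The sketch below shows this standard technique in general form. It is not confirmed to be how Mistral or FlowHunt implement the setting, and the scores are made up. The formula is undefined at exactly 0, so the sketch accepts only positive values.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into next-token probabilities at a given temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Lower temperatures stretch the gaps between scores; higher ones shrink them.
    scaled = [z / temperature for z in logits]
    # Subtract the largest value before exponentiating, for numerical stability.
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


scores = [2.0, 1.0, 0.5]  # made-up scores for three candidate tokens
for t in (0.1, 0.5, 1.0):
    probs = softmax_with_temperature(scores, t)
    print(t, [round(p, 3) for p in probs])
```

At 0.1, nearly all the probability goes to the highest-scoring token, which is why low temperatures give focused but repetitive answers. At 1.0, the other tokens keep a sizable share, so the output varies more.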

This is the model picker, where you'll find all the supported Mistral models. We currently support Mistral 7B, Mixtral (8x7B), and Mistral Large.
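This sketch gathers the component's settings into one hypothetical Python dataclass as a rough mental model. It checks each value against the limits described on this page:

- a supported model name
- a positive max tokens value, defaulting to 4,000
- a temperature between 0 and 1

FlowHunt is configured in its visual editor, so `MistralSettings` and `SUPPORTED_MODELS` are illustrative names, not a FlowHunt API.

```python
from dataclasses import dataclass

# Model names as listed on this page; FlowHunt's internal identifiers may differ.
SUPPORTED_MODELS = {"Mistral 7B", "Mixtral (8x7B)", "Mistral Large"}


@dataclass
class MistralSettings:
    """Hypothetical mirror of the LLM Mistral component's settings."""
    model: str
    temperature: float
    max_tokens: int = 4_000  # default limit stated on this page

    def __post_init__(self) -> None:
        # Reject values outside the ranges the component describes.
        if self.model not in SUPPORTED_MODELS:
            raise ValueError(f"unsupported model: {self.model!r}")
        if self.max_tokens <= 0:
            raise ValueError("max_tokens must be positive")
        if not 0.0 <= self.temperature <= 1.0:
            raise ValueError("temperature must be between 0 and 1")


# A customer service bot, with a temperature inside the suggested 0.2 to 0.5 range.
support_bot = MistralSettings(model="Mixtral (8x7B)", temperature=0.3)
print(support_bot)
```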

How To Add The LLM Mistral To Your Flow

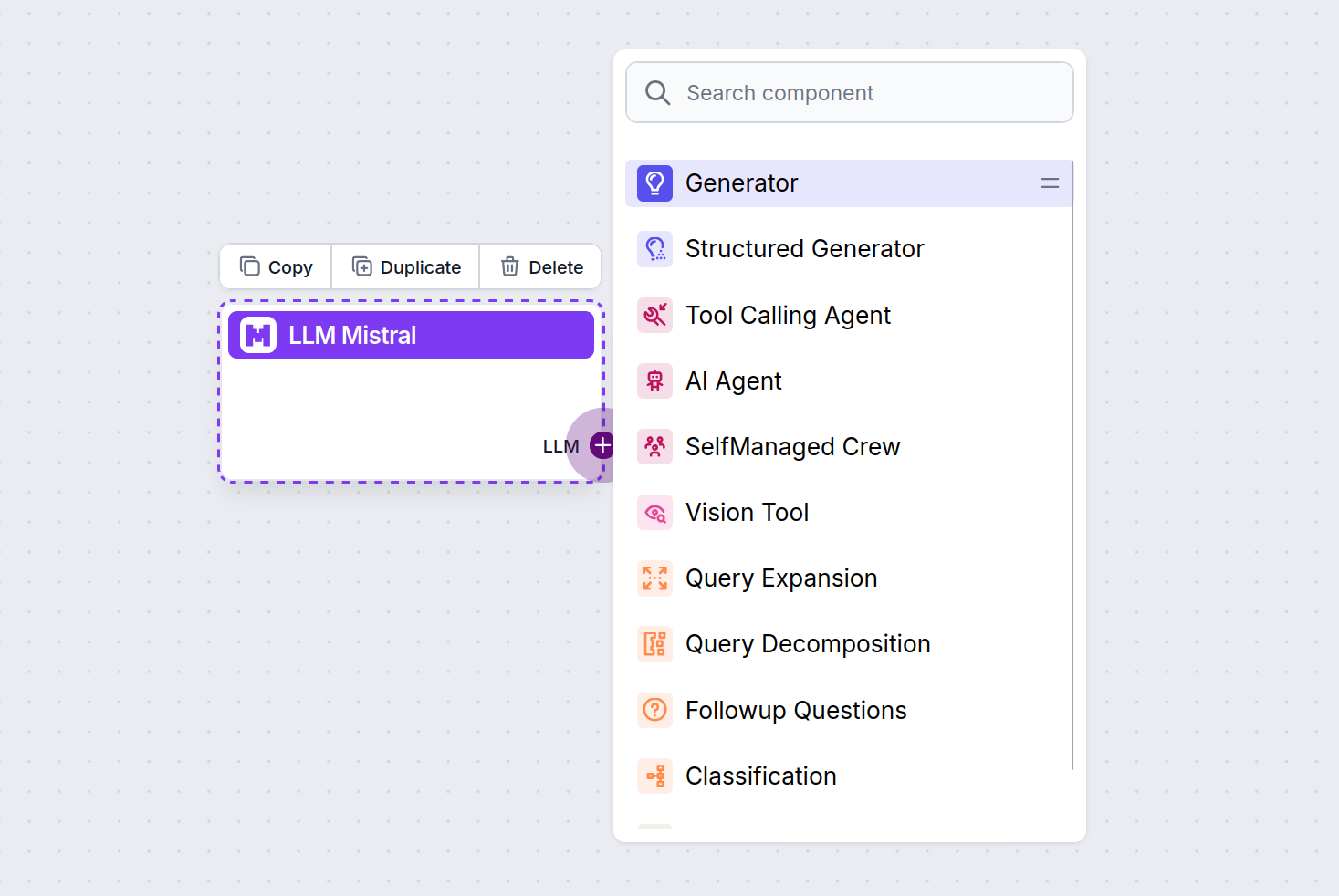

You’ll notice that all LLM components only have an output handle. Input doesn’t pass through the component, as it only represents the model, while the actual generation happens in AI Agents and Generators.
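The code below shows why the LLM component needs only an output. The component carries a model choice and its settings, and the component it connects to does the generating. The classes are hypothetical and only illustrate this relationship. They also show the fallback to ChatGPT-4o when no LLM component is connected.

```python
from dataclasses import dataclass
from typing import Optional

DEFAULT_MODEL = "ChatGPT-4o"  # default for components that use an LLM, per this page


@dataclass(frozen=True)
class LLMComponent:
    """Represents a model choice only: it has an output but no input and generates nothing."""
    model: str
    temperature: float
    max_tokens: int = 4_000


class AIAgent:
    """Hypothetical stand-in for a component with an LLM input handle."""

    def __init__(self, llm: Optional[LLMComponent] = None) -> None:
        # The LLM connection is optional.
        self.llm = llm

    def model_name(self) -> str:
        # Fall back to the default model when nothing is connected.
        return self.llm.model if self.llm is not None else DEFAULT_MODEL

    def respond(self, user_message: str) -> str:
        # Real generation would happen here, inside the agent, using the chosen model.
        # This sketch only reports which model would be used.
        return f"[{self.model_name()}] would answer: {user_message}"


default_agent = AIAgent()
mistral_agent = AIAgent(llm=LLMComponent(model="Mistral 7B", temperature=0.3))
print(default_agent.respond("Hello"))  # prints "[ChatGPT-4o] would answer: Hello"
print(mistral_agent.respond("Hello"))  # prints "[Mistral 7B] would answer: Hello"
```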

The LLM handle is always purple. The LLM input handle is found on any component that uses AI to generate text or process data. You can see the options by clicking the handle:

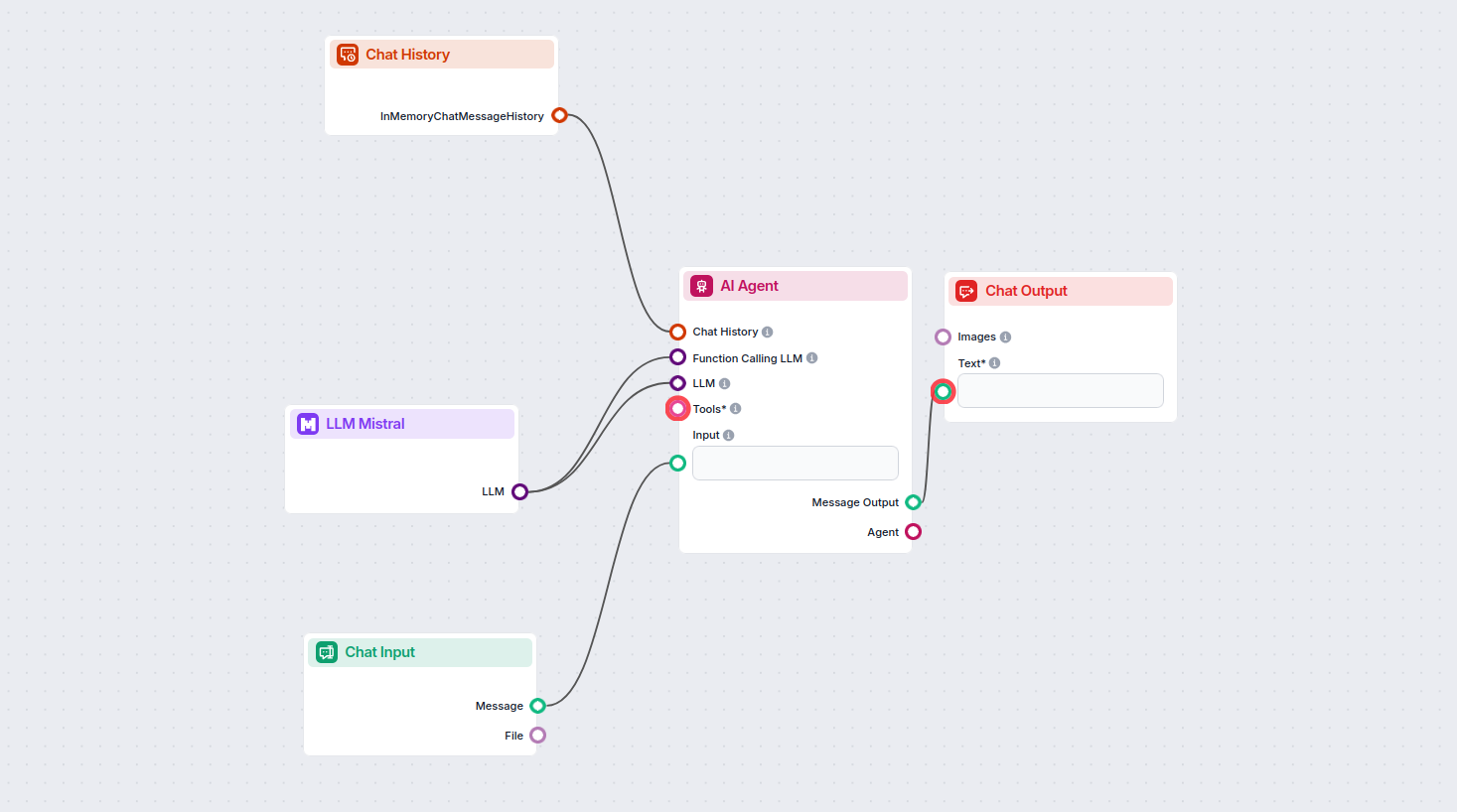

This lets you build all sorts of tools. Let's see the component in action. Here's a simple AI Agent chatbot Flow that uses the Mistral 7B model to generate responses. You can think of it as a basic Mistral chatbot.

This simple Chatbot Flow includes:

The LLM Mistral component lets you connect Mistral AI models to your FlowHunt projects, enabling advanced text generation for your chatbots and AI agents. With it, you can switch models, control settings, and integrate models such as Mistral 7B, Mixtral (8x7B), and Mistral Large.

FlowHunt supports Mistral 7B, Mixtral (8x7B), and Mistral Large. Each model offers a different level of performance and number of parameters to suit different text generation needs.

You can adjust settings such as max tokens and temperature, and choose among the supported Mistral models. These controls set response length, creativity, and model behavior in your flows.

Connecting an LLM component is optional. By default, FlowHunt components use ChatGPT-4o. Use the LLM Mistral component when you want more control or need a specific Mistral model.

Start building smarter chatbots and AI tools by integrating Mistral's powerful language models with FlowHunt's no-code platform.
