
Bridge your AI assistant with Wikidata’s structured knowledge using FlowHunt’s Wikidata MCP Server integration, which enables semantic search, metadata extraction, and SPARQL querying.

FlowHunt provides an additional security layer between your internal systems and AI tools, giving you granular control over which tools are accessible from your MCP servers. MCP servers hosted in our infrastructure can be seamlessly integrated with FlowHunt's chatbot as well as popular AI platforms like ChatGPT, Claude, and various AI editors.

The Wikidata MCP Server is a server implementation of the Model Context Protocol (MCP), designed to interface directly with the Wikidata API. It provides a bridge between AI assistants and the vast structured knowledge in Wikidata, allowing developers and AI agents to seamlessly search for entity and property identifiers, extract metadata (such as labels and descriptions), and execute SPARQL queries. By exposing these capabilities as MCP tools, the server enables tasks like semantic search, knowledge extraction, and contextual enrichment in development workflows where external structured data is needed. This enhances AI-driven applications by allowing them to retrieve, query, and reason about up-to-date information from Wikidata.
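To make these capabilities more concrete, the sketch below builds the kind of requests that identifier search and SPARQL querying ultimately translate to. It is not the server's own code: the helper names `build_search_url` and `build_sparql_url` are hypothetical, and the sketch only assumes the public `wbsearchentities` action of the Wikidata API and the public SPARQL endpoint at query.wikidata.org. No network call is made.

```python
from urllib.parse import urlencode

WIKIDATA_API = "https://www.wikidata.org/w/api.php"
WIKIDATA_SPARQL = "https://query.wikidata.org/sparql"


def build_search_url(term: str, kind: str = "item", language: str = "en") -> str:
    """Return a wbsearchentities URL; kind is "item" (Q-ids) or "property" (P-ids)."""
    if kind not in ("item", "property"):
        raise ValueError("kind must be 'item' or 'property'")
    params = {
        "action": "wbsearchentities",
        "search": term,
        "type": kind,
        "language": language,
        "format": "json",
    }
    return f"{WIKIDATA_API}?{urlencode(params)}"


def build_sparql_url(query: str) -> str:
    """Return a GET URL that asks the SPARQL endpoint for JSON results."""
    return f"{WIKIDATA_SPARQL}?{urlencode({'query': query, 'format': 'json'})}"


if __name__ == "__main__":
    # Entity search, property search, then a query that uses both kinds of identifier:
    # P31 is "instance of" and Q146 is "house cat".
    print(build_search_url("Douglas Adams"))
    print(build_search_url("instance of", kind="property"))
    print(build_sparql_url("SELECT ?item WHERE { ?item wdt:P31 wd:Q146 } LIMIT 5"))
```

An agent would typically chain these steps: search for the entity and property identifiers first, then plug them into a SPARQL query.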

No prompt templates are mentioned in the repository or documentation.

No explicit MCP resources are described in the repository or documentation.

Add the Wikidata MCP Server to your client's mcpServers configuration using a JSON snippet like the one below:

    "mcpServers": {
      "wikidata-mcp": {
        "command": "npx",
        "args": ["@zzaebok/mcp-wikidata@latest"]
      }
    }
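If the client already has other servers configured, the entry has to be merged in rather than pasted over the existing block. The following is a minimal sketch of that merge, assuming the configuration has been loaded into a Python dict; the function name `add_wikidata_server` and the `other-server` entry are hypothetical, and only the `command` and `args` values come from the snippet above.

```python
import json
from copy import deepcopy

# Server entry copied from the configuration snippet above.
WIKIDATA_ENTRY = {
    "command": "npx",
    "args": ["@zzaebok/mcp-wikidata@latest"],
}


def add_wikidata_server(config: dict, name: str = "wikidata-mcp") -> dict:
    """Return a copy of config with the Wikidata entry added under "mcpServers"."""
    merged = deepcopy(config)  # leave the caller's dict untouched
    servers = merged.setdefault("mcpServers", {})
    servers[name] = deepcopy(WIKIDATA_ENTRY)
    return merged


if __name__ == "__main__":
    # Hypothetical existing configuration with one unrelated server.
    existing = {"mcpServers": {"other-server": {"command": "node", "args": ["server.js"]}}}
    print(json.dumps(add_wikidata_server(existing), indent=2))
```

Returning a copy keeps the original configuration available in case the result needs to be reviewed before it is written back.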

Securing API Keys (if needed):

The repository does not document an API key requirement. If your setup does need one, pass it through the server's env block rather than hardcoding it elsewhere. Treat the names and values below as placeholders:

    {
      "wikidata-mcp": {
        "env": {
          "WIKIDATA_API_KEY": "your-api-key"
        },
        "inputs": {
          "some_input": "value"
        }
      }
    }
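If a key does turn out to be needed, one way to keep it out of the configuration file is to build the env block from the process environment at setup time. This is a minimal sketch under that assumption: `WIKIDATA_API_KEY` is the placeholder name used in the API key snippet above, and the helper names `env_block` and `server_entry` are hypothetical.

```python
import os


def env_block(var_name: str = "WIKIDATA_API_KEY", environ=None) -> dict:
    """Build the "env" mapping from the environment, or {} if the variable is unset."""
    source = os.environ if environ is None else environ
    value = source.get(var_name)
    return {"env": {var_name: value}} if value else {}


def server_entry(environ=None) -> dict:
    """Return the wikidata-mcp entry, adding "env" only when a key is available."""
    entry = {"command": "npx", "args": ["@zzaebok/mcp-wikidata@latest"]}
    entry.update(env_block(environ=environ))
    return {"wikidata-mcp": entry}


if __name__ == "__main__":
    # With no key exported, the entry simply omits the env block.
    print(server_entry())
```

Omitting the env block when the variable is unset matches the observation that the server works without a key.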


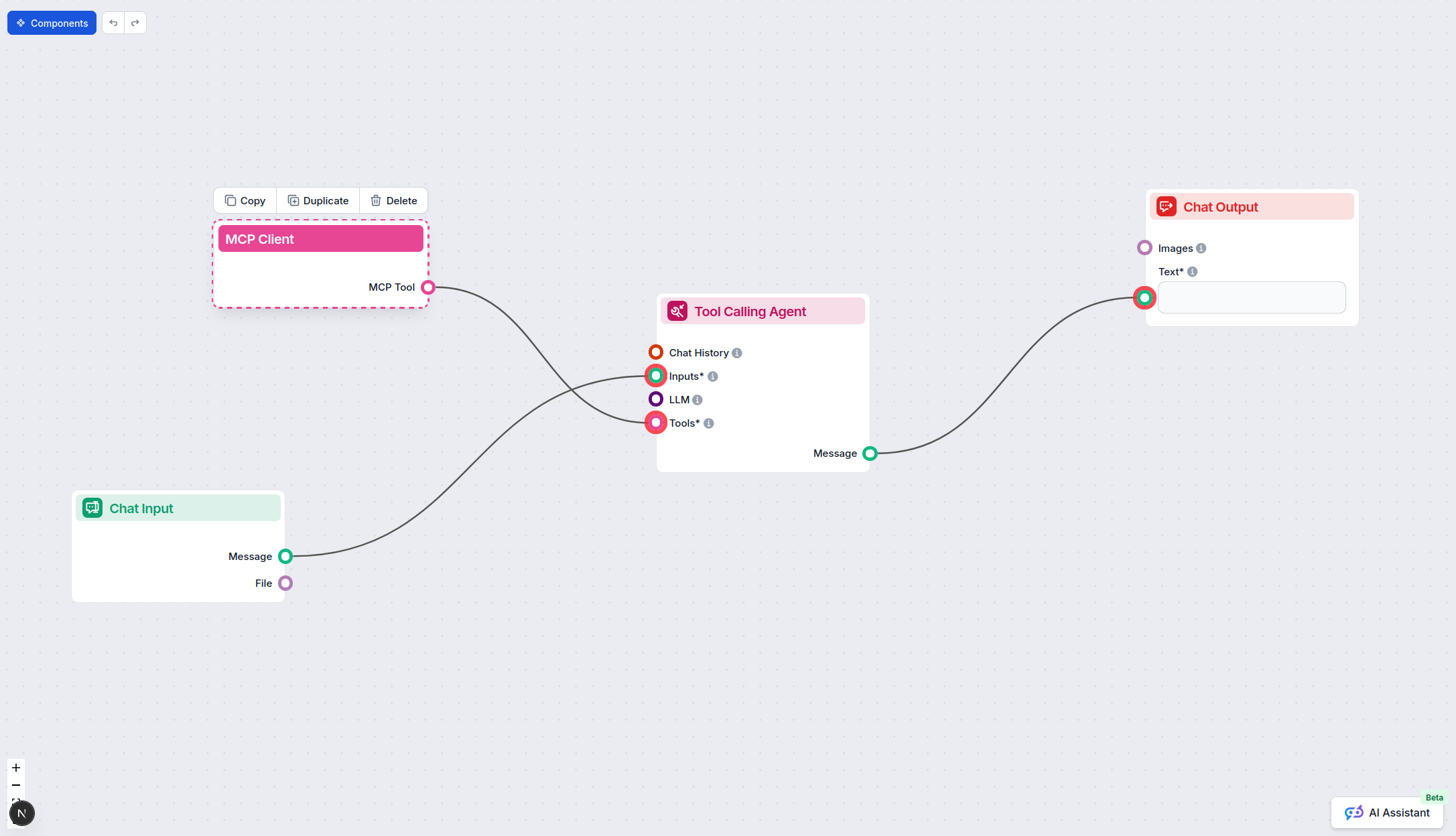

Using MCP in FlowHunt

To integrate MCP servers into your FlowHunt workflow, start by adding the MCP component to your flow and connecting it to your AI agent.

Click on the MCP component to open the configuration panel. In the system MCP configuration section, insert your MCP server details using this JSON format:

    {
      "wikidata-mcp": {
        "transport": "streamable_http",
        "url": "https://yourmcpserver.example/pathtothemcp/url"
      }
    }

Once configured, the AI agent can use this MCP server as a tool, with access to all of its functions. Remember to change “wikidata-mcp” to the actual name of your MCP server and to replace the URL with your own MCP server URL.
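Because the name and URL are the two values that must be changed, it can help to generate the entry instead of editing it by hand. The sketch below is one way to do that, assuming only the JSON shape shown above; the function name `flowhunt_mcp_entry` is hypothetical, and the URL check is a basic sanity test, not something FlowHunt is known to require.

```python
import json
from urllib.parse import urlparse


def flowhunt_mcp_entry(name: str, url: str) -> dict:
    """Build a single-server entry in the shape shown above, with basic checks."""
    if not name:
        raise ValueError("server name must not be empty")
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.netloc:
        raise ValueError(f"not an absolute HTTP(S) URL: {url!r}")
    return {name: {"transport": "streamable_http", "url": url}}


if __name__ == "__main__":
    # Placeholder URL from the example above; replace it with your own server URL.
    entry = flowhunt_mcp_entry(
        "wikidata-mcp", "https://yourmcpserver.example/pathtothemcp/url"
    )
    print(json.dumps(entry, indent=2))
```

The printed JSON can be pasted into the system MCP configuration section.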

| Section | Availability | Details/Notes |
|---|---|---|
| Overview | ✅ | Overview available in README.md |
| List of Prompts | ⛔ | No prompt templates found |
| List of Resources | ⛔ | No explicit resources listed |
| List of Tools | ✅ | Tools detailed in README.md |
| Securing API Keys | ⛔ | No explicit API key requirement found |
| Sampling Support (less important in evaluation) | ⛔ | Not mentioned |

The Wikidata MCP Server is a simple but effective implementation, providing several useful tools for interacting with Wikidata via MCP. However, it lacks documentation on prompt templates, resources, and sampling/roots support, which limits its flexibility for more advanced or standardized MCP integrations. The presence of a license, clear tooling, and active updates make it a solid starting point for MCP use cases focused on Wikidata.

| Criterion | Value |
|---|---|
| Has a LICENSE | ✅ (MIT) |
| Has at least one tool | ✅ |
| Number of Forks | 5 |
| Number of Stars | 18 |

MCP Server Rating: 6/10

Solid core functionality, but lacking in standard MCP resource/prompt support and advanced features. Good for direct Wikidata integration use cases.

Enhance your AI’s reasoning and data capabilities by adding Wikidata as a structured knowledge source in your FlowHunt workflows.
