LLM Context

Accelerate your AI-assisted development by integrating FlowHunt’s LLM Context. Seamlessly inject relevant code and document context into your favorite chat inte...

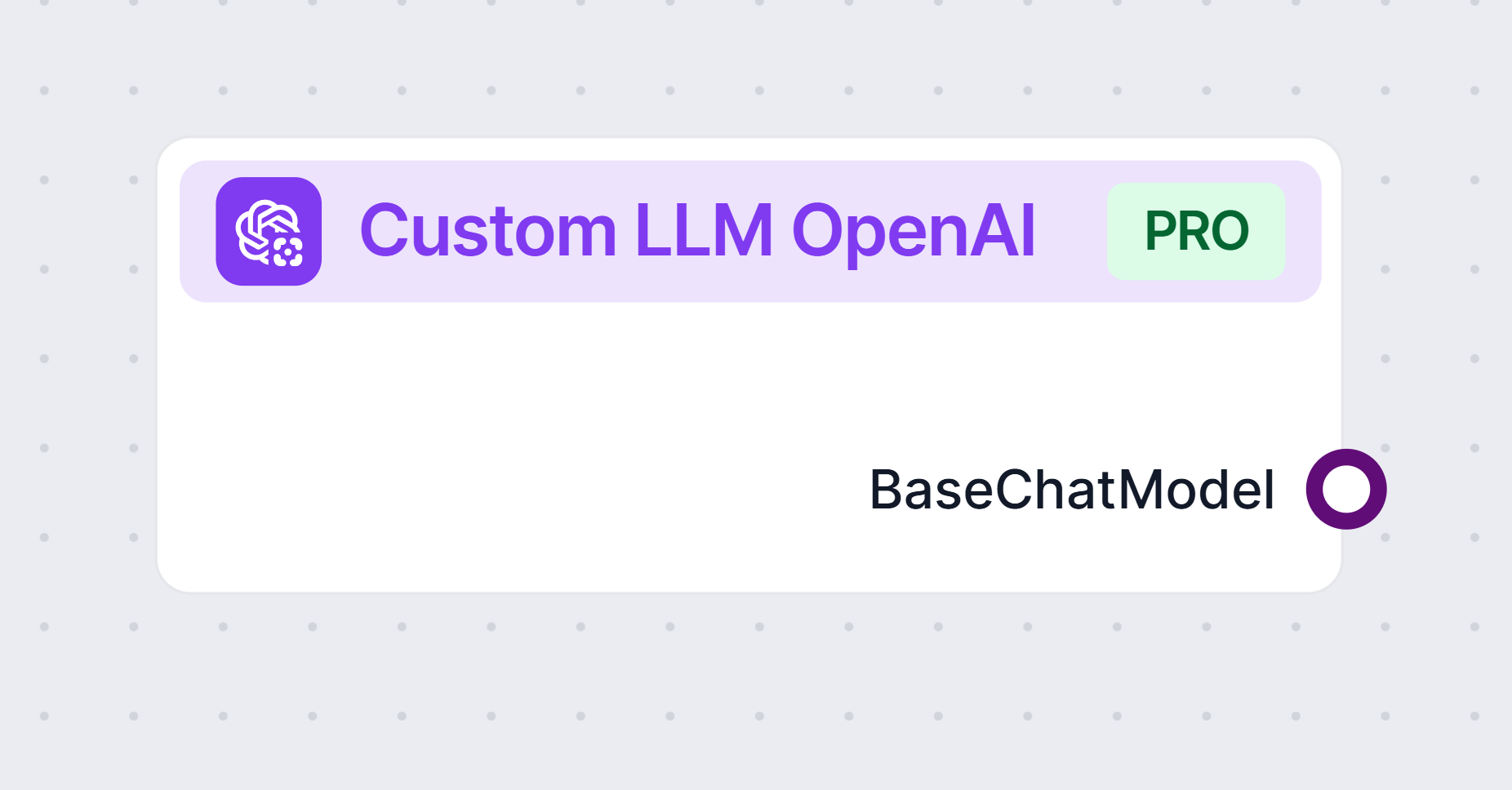

The Custom OpenAI LLM component lets you connect and configure your own OpenAI-compatible language models for flexible, advanced conversational AI flows.

Component description

The Custom OpenAI LLM component provides a flexible interface to large language models that are compatible with the OpenAI API. This covers models from OpenAI itself as well as from alternative providers such as JinaChat, LocalAI, and Prem. The component is highly configurable, which makes it suitable for AI workflows that need natural language processing.

This component acts as a bridge between your AI workflow and language models that follow the OpenAI API standard. By allowing you to specify the model provider, API endpoint, and other parameters, it enables you to generate or process text, chat, or other language-based outputs within your workflow. Whether you need to summarize content, answer questions, generate creative text, or perform other NLP tasks, this component can be tailored to your needs.
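As a rough illustration of what following the OpenAI API standard means in practice, the sketch below builds (but does not send) a chat completion request using only the Python standard library. The `/chat/completions` path and Bearer authorization follow OpenAI's public API. The helper name `build_chat_request`, the default model, the localhost URL, and the placeholder key are assumptions made for illustration and do not describe FlowHunt's internals.

```python
import json
import urllib.request

OPENAI_DEFAULT_BASE = "https://api.openai.com/v1"


def build_chat_request(prompt, api_key, model="gpt-3.5-turbo",
                       api_base="", max_tokens=3000, temperature=0.7):
    """Build (without sending) an OpenAI-style chat completion request.

    Hypothetical helper: it mirrors the component's parameters but is
    not FlowHunt's actual implementation.
    """
    # A blank base falls back to OpenAI's own endpoint.
    base = (api_base or OPENAI_DEFAULT_BASE).rstrip("/")
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
        "temperature": temperature,
    }
    return urllib.request.Request(
        url=f"{base}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Same code, different provider: only the base URL changes.
# The localhost URL is an assumed example of a self-hosted endpoint.
req = build_chat_request("Summarize this text.", api_key="sk-placeholder",
                         api_base="http://localhost:8080/v1")
print(req.full_url)
```

Because every compatible provider accepts the same request shape, switching providers comes down to changing the base URL, the key, and the model name.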

You can control the behavior of the component through several parameters:

| Parameter | Type | Required | Default | Description |
|---|---|---|---|---|
| Max Tokens | int | No | 3000 | Limits the maximum length of the generated text output. |
| Model Name | string | No | (empty) | Specify the exact model to use (e.g., gpt-3.5-turbo). |
| OpenAI API Base | string | No | (empty) | Allows you to set a custom API endpoint (e.g., for JinaChat, LocalAI, or Prem). Defaults to OpenAI if blank. |
| API Key | string | Yes | (empty) | Your secret API key for accessing the chosen language model provider. |
| Temperature | float | No | 0.7 | Controls the creativity of output. Lower values mean more deterministic results. Range: 0 to 1. |
| Use Cache | bool | No | true | Enable/disable caching of queries to improve efficiency and reduce costs. |
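The defaults and constraints in the table can be summarized as a small settings object. This is only a sketch: the class name `CustomLLMSettings` and its field names are hypothetical, and the check that Max Tokens is positive is an assumption rather than something the table states.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomLLMSettings:
    """Hypothetical container mirroring the parameter table."""
    api_key: str                 # required
    model_name: str = ""         # empty: no model specified
    openai_api_base: str = ""    # blank: use OpenAI's endpoint
    max_tokens: int = 3000
    temperature: float = 0.7
    use_cache: bool = True

    def __post_init__(self):
        if not self.api_key:
            raise ValueError("API Key is required")
        if not 0.0 <= self.temperature <= 1.0:
            raise ValueError("Temperature must be between 0 and 1")
        # Assumed sanity check; the table gives no lower bound.
        if self.max_tokens <= 0:
            raise ValueError("Max Tokens must be positive")


# Only the API key must be supplied; everything else has a default.
settings = CustomLLMSettings(api_key="sk-placeholder", temperature=0.2)
print(settings.max_tokens, settings.use_cache)
```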

Note: All these configuration options are advanced settings, giving you fine-grained control over the model’s behavior and integration.
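How FlowHunt implements Use Cache is not described here. One common approach, sketched below under that caveat, keys the cache on every parameter that affects the output, so an identical query skips the model call. The names `cache_key`, `cached_call`, and `fake_model`, along with the in-memory dictionary, are hypothetical stand-ins.

```python
import hashlib
import json

_cache = {}  # in-memory store; assumed for illustration only


def cache_key(model, prompt, temperature, max_tokens):
    """Hash every parameter that can change the model's answer."""
    raw = json.dumps([model, prompt, temperature, max_tokens])
    return hashlib.sha256(raw.encode("utf-8")).hexdigest()


def cached_call(call_model, model, prompt, temperature=0.7,
                max_tokens=3000, use_cache=True):
    """Return a cached answer when available, else call the model."""
    if not use_cache:
        return call_model(prompt)
    key = cache_key(model, prompt, temperature, max_tokens)
    if key not in _cache:
        _cache[key] = call_model(prompt)
    return _cache[key]


calls = []


def fake_model(prompt):
    """Stand-in for a real (billable) model request."""
    calls.append(prompt)
    return f"echo: {prompt}"


cached_call(fake_model, "gpt-3.5-turbo", "Hello")
cached_call(fake_model, "gpt-3.5-turbo", "Hello")  # served from the cache
print(len(calls))
```

With caching on, the second identical query never reaches the model, which is where the efficiency and cost savings come from.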

Inputs:

There are no input handles for this component.

Outputs:

BaseChatModel object, which can be used in subsequent components in your workflow for further processing or interaction.

| Feature | Description |
|---|---|
| Provider Support | OpenAI, JinaChat, LocalAI, Prem, or any OpenAI API-compatible service |
| Output Type | BaseChatModel |
| API Endpoint | Configurable |
| Security | API Key required (kept secret) |
| Usability | Advanced settings for power users, but defaults work for most applications |

This component is ideal if you want to integrate flexible, robust, and configurable LLM capabilities into your AI workflows, whether you use OpenAI directly or an alternative provider.

Connect your own language models and give your AI workflows a boost. Try the Custom OpenAI LLM component in FlowHunt today.

Versnel je AI-ondersteunde ontwikkeling door FlowHunt’s LLM Context te integreren. Injecteer naadloos relevante code en documentcontext in je favoriete chatinte...

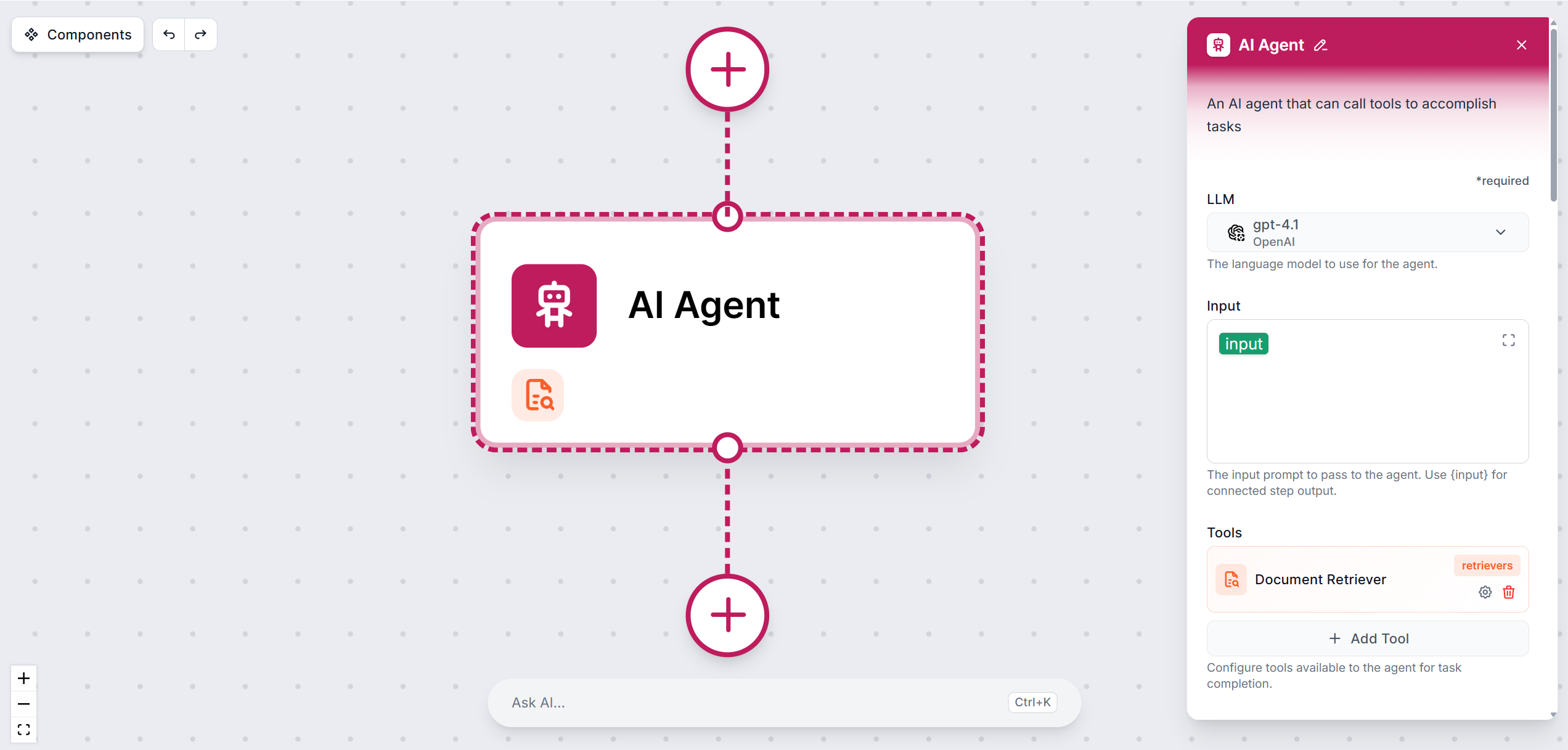

Master the AI Agent component in FlowHunt workflows. Learn how to configure system messages, connect tools, select models, and agent performance...