AI Software Development Training

Part 1 – Harness Engineering Foundations

You will learn:

- Why babysitting an AI editor does not scale

- Harness engineering: humans steer, agents execute

- Bootstrapping a repo with the CodeFactory CLI

- Detecting stack, risk tiers and architectural boundaries

- Writing CLAUDE.md as the agent control plane

- Versioning prompts and guards as code

- Pre-commit hooks, risk policy gates and protected files

Part 2 – Automated Development in GitHub Actions

You will learn:

- Issue triage, planner and implementer agents

- Read-only review agents with structured verdicts

- Remediation loops and auto-revert of protected files

- Risk-gated CI pipelines with SHA discipline

- Doc gardening and weekly harness metrics

- Running the full issue → PR → merge loop live

- Adapting the harnesses to your own codebase

Showcase Your Expertise With Our Certificate!

Stop babysitting the AI editor

Most developers today use AI the wrong way. They sit in Cursor or Copilot Chat, accept a suggestion, scroll, accept another, undo, retry, paste an error back into the chat, and call it a day. It feels productive, but it is manual work wearing an AI costume. The human is still the bottleneck. The agent is still guessing. Nothing is repeatable, nothing is reviewable, and nothing scales past one developer and one branch.

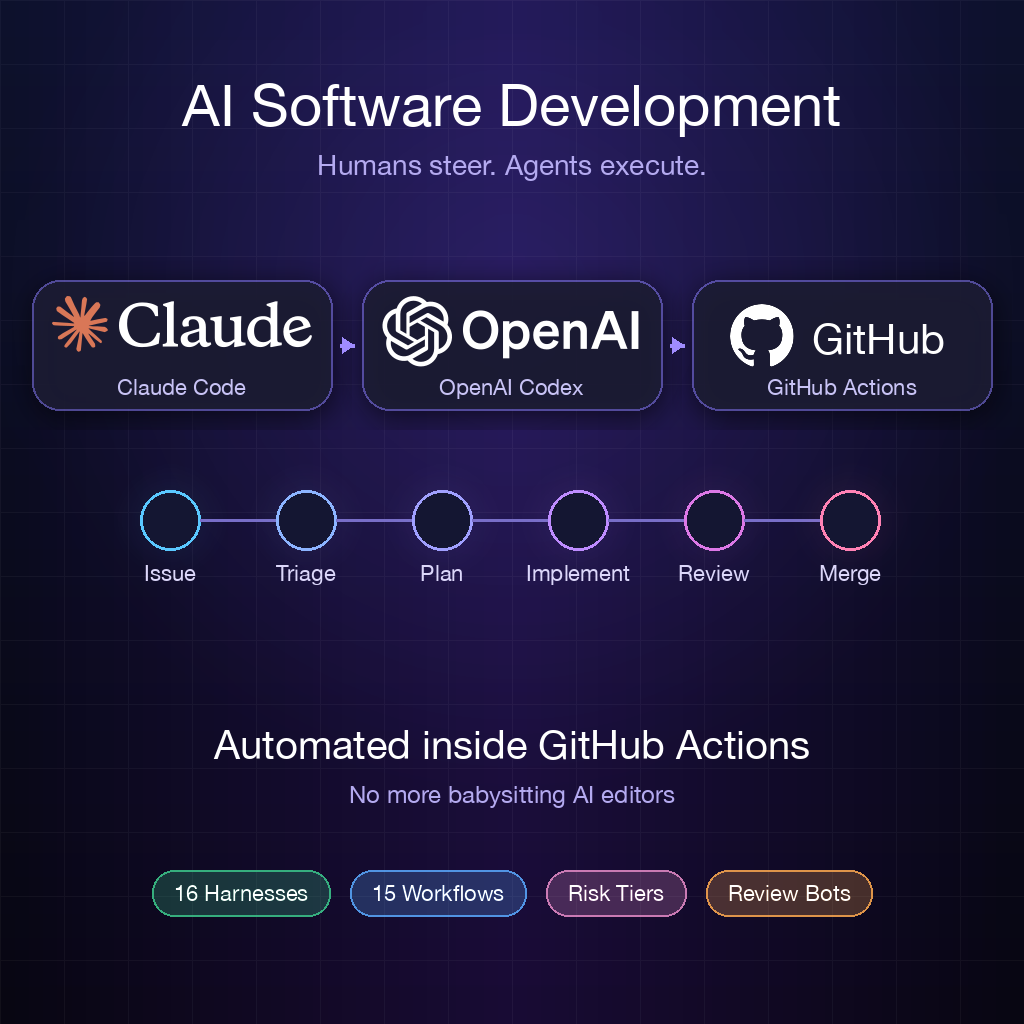

This training flips the model. Your team will learn to move AI coding out of the editor and into GitHub Actions, where agents run in ephemeral runners, guarded by versioned prompts and automated quality gates. The developer opens an issue, reviews a pull request and clicks merge. Everything in between — triage, planning, implementation, code review, remediation — happens automatically, on commodity CI infrastructure.

The CodeFactory harness toolkit

We teach on top of CodeFactory, an open-source CLI that bootstraps a complete agent-safety harness into any existing repository. Run one command, codefactory init, and your repo gains 16 harnesses and 14+ GitHub Actions workflows tailored to your stack:

- A risk contract (harness.config.json) that classifies every file into Tier 1, 2 or 3 and enforces the right level of scrutiny

- Agent instructions (CLAUDE.md) that describe conventions, dependency rules and protected files

- An issue triage agent that evaluates clarity, reproducibility and scope before any code is written

- An issue planner that reads the codebase read-only and posts a structured implementation plan

- An issue implementer that creates a branch, implements the change, runs baseline validation and opens a PR

- A review agent that runs with read-only tools and emits an APPROVE / REQUEST_CHANGES / COMMENT verdict classified by a second lightweight model

- A remediation loop that feeds review verdicts back to the implementer for up to three auto-fix cycles before escalating to a human

- Doc gardening, structural tests, harness smoke tests and weekly metrics workflows that keep the harness itself healthy
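To make the idea of a risk contract concrete, here is a minimal TypeScript sketch of tier classification. The rule shape, the example paths and the names TierRule, globToRegExp and classifyTier are hypothetical and chosen for illustration only; the actual harness.config.json schema that CodeFactory generates may look quite different, and the tier numbers below are arbitrary examples.

```typescript
// Hypothetical rule shape; NOT the real harness.config.json schema.
type Tier = 1 | 2 | 3;

interface TierRule {
  // Simplified glob: "*" matches within one path segment, "**" across segments.
  pattern: string;
  tier: Tier;
}

// Convert a simplified glob into an anchored regular expression.
function globToRegExp(glob: string): RegExp {
  const source = glob
    .replace(/[.+?^${}()|[\]\\]/g, "\\$&") // escape regex metacharacters except "*"
    .replace(/\*\*/g, "\u0000") // protect "**" before handling single "*"
    .replace(/\*/g, "[^/]*")
    .replace(/\u0000/g, ".*");
  return new RegExp(`^${source}$`);
}

// First matching rule wins; files matching no rule fall back to defaultTier.
function classifyTier(path: string, rules: TierRule[], defaultTier: Tier): Tier {
  for (const rule of rules) {
    if (globToRegExp(rule.pattern).test(path)) return rule.tier;
  }
  return defaultTier;
}

// Example rules with assumed paths and arbitrary tier assignments.
const rules: TierRule[] = [
  { pattern: ".github/workflows/**", tier: 3 },
  { pattern: "packages/engine/**", tier: 2 },
  { pattern: "docs/*.md", tier: 1 },
];
```

A risk gate built this way could classify each changed file in a pull request and pick the scrutiny level from the results; how tiers map to concrete checks is a policy decision the sketch leaves open.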

Everything lives in the repository. No external dashboards, no vendor lock-in, no hidden state. Editing a prompt is a normal pull request.

Real production example: sport-affiliate

We walk through QualityUnit/sport-affiliate, a real production monorepo (three Next.js sites, a shared engine and a Python data pipeline) running the full CodeFactory harness. You will read the actual workflow files, prompts and guard scripts that drive it:

- 15 GitHub Actions workflows orchestrating the complete issue → PR → merge loop

- Four customised prompts in .codefactory/prompts/ (issue-triage.md, issue-planner.md, issue-implementer.md, review-agent.md)

- TypeScript guard scripts (scripts/*-guard.ts) that pre-flight every agent run and decide whether it should even start

- A four-stage fail-fast CI pipeline that skips full Next.js builds (25 minutes each) in favour of type-check + lint + structural tests

- SHA discipline: every downstream job checks out the exact SHA reported by the risk gate so an agent cannot race-push mid-pipeline

- Protected files (.github/workflows/*, harness.config.json, CLAUDE.md, lock files, deployment configs) that are auto-reverted if an agent touches them

- The review prompt loaded from origin/main, not the PR branch, so agent-authored PRs cannot tamper with their own reviewer
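The protected-files rule from the list above can be sketched as a small check over the files an agent changed. This is an illustrative sketch, not the sport-affiliate guard code: the names PROTECTED_PATTERNS, isProtected and splitChanges are hypothetical, the matcher only understands exact paths and a trailing "/*", and package-lock.json is an assumed example of a lock file.

```typescript
// Patterns follow the list above; the lock file name is an assumed example.
const PROTECTED_PATTERNS: string[] = [
  ".github/workflows/*",
  "harness.config.json",
  "CLAUDE.md",
  "package-lock.json", // assumption: the repo's lock file may be named differently
];

// True if the path equals a pattern, or sits directly inside a "dir/*" pattern.
function isProtected(path: string, patterns: string[]): boolean {
  return patterns.some((pattern) => {
    if (pattern.endsWith("/*")) {
      const dir = pattern.slice(0, -1); // keep the trailing slash
      const rest = path.slice(dir.length);
      return path.startsWith(dir) && rest.length > 0 && !rest.includes("/");
    }
    return path === pattern;
  });
}

// Split an agent's changed files into those to revert and those to keep.
function splitChanges(
  changed: string[],
  patterns: string[],
): { toRevert: string[]; allowed: string[] } {
  const toRevert: string[] = [];
  const allowed: string[] = [];
  for (const path of changed) {
    (isProtected(path, patterns) ? toRevert : allowed).push(path);
  }
  return { toRevert, allowed };
}
```

A workflow step could then restore each path in toRevert from the base branch, for example with git checkout origin/main -- followed by the path. How the real harness performs the revert is not shown here.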

The end-to-end developer experience looks like this: a human opens an issue. The triage agent labels it, asks clarifying questions if needed, and hands it to the planner. The planner posts an implementation plan as a comment. The implementer creates the issue-N branch, implements the change, runs quality gates and opens a PR. The review agent reviews the PR. If changes are requested, the implementer is dispatched again in review-fix mode, for up to three cycles, before the PR is escalated to a human. In the normal flow, the only human touch points are opening the issue and approving the final merge.
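The review and remediation part of that flow can be sketched as a bounded loop. The sketch below is a simplified, synchronous model under stated assumptions: runRemediationLoop, LoopResult and the review and fix callbacks are hypothetical names, and treating a COMMENT verdict as non-blocking is an assumption rather than documented harness behaviour. In the real setup these steps run as separate GitHub Actions workflows, not as one function.

```typescript
// Verdict values as named in the review agent description.
type Verdict = "APPROVE" | "REQUEST_CHANGES" | "COMMENT";

interface LoopResult {
  outcome: "ready_for_human_merge" | "escalated_to_human";
  fixCycles: number; // number of auto-fix cycles that actually ran
}

const MAX_FIX_CYCLES = 3; // "up to three auto-fix cycles"

// review() stands in for the review agent, fix() for the implementer
// running in review-fix mode. Both are hypothetical callbacks.
function runRemediationLoop(review: () => Verdict, fix: () => void): LoopResult {
  let fixCycles = 0;
  while (true) {
    const verdict = review();
    // Assumption: only REQUEST_CHANGES blocks; COMMENT is treated as non-blocking.
    if (verdict !== "REQUEST_CHANGES") {
      return { outcome: "ready_for_human_merge", fixCycles };
    }
    // The fix budget is spent and the reviewer still objects: hand over to a human.
    if (fixCycles >= MAX_FIX_CYCLES) {
      return { outcome: "escalated_to_human", fixCycles };
    }
    fix();
    fixCycles += 1;
  }
}
```

The hard cap is the important design choice: without it, an implementer and a reviewer that disagree could trade commits indefinitely and burn CI minutes with no human in the loop.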

What your team will take home

By the end of the training your developers will be able to bootstrap this exact setup in their own repositories, write and tune their own agent prompts, define risk tiers that match their architecture, and measure whether the harness is actually working through Mean-Time-To-Harness and SLO metrics. They will leave with a running harness on one of your real repositories — not a toy example.

Join the next cohort

Claim Your Spot Today!

The future won’t wait—contact us now and book your AI software development training to start automating your engineering workflow.