Model Drift

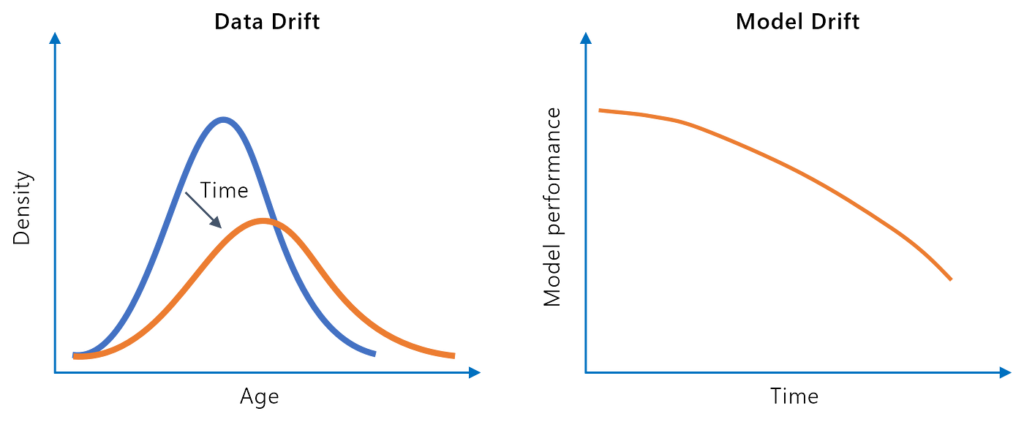

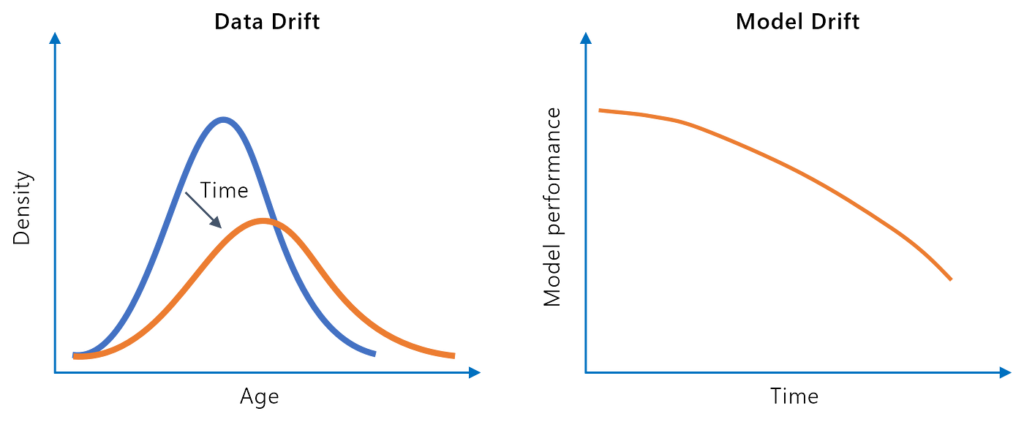

Model drift, or model decay, refers to the decline in a machine learning model’s predictive performance over time due to changes in the real-world environment. ...

Adversarial machine learning studies attacks that deliberately manipulate AI model inputs to cause incorrect outputs, and the defenses against them. Techniques range from imperceptible image perturbations that fool classifiers to crafted text prompts that hijack LLM behavior.

Adversarial machine learning is the study of attacks that cause AI models to produce incorrect, unsafe, or unintended outputs by deliberately manipulating their inputs. It encompasses both the attack techniques that exploit model vulnerabilities and the defensive approaches that make models more robust against them.

Adversarial ML as applied to deep learning grew out of computer vision research in the early 2010s, when researchers discovered that adding imperceptibly small perturbations to images could cause state-of-the-art classifiers to misclassify them with high confidence. In one widely cited demonstration, a perturbed image of a panda is classified as a gibbon even though the pixel changes are invisible to human observers; in another, a physically altered stop sign is read as a speed limit sign.

This discovery revealed that neural networks, despite their impressive performance, learn statistical patterns that can be exploited rather than robust semantic understanding. The same underlying principle — that models can be systematically fooled by carefully designed inputs — applies across all AI modalities, including language models.
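The perturbation idea can be illustrated with a minimal sketch of a fast-gradient-sign step against a tiny logistic-regression model. This is an illustration under stated assumptions, not a reproduction of any published attack: the weights, input, and `eps` value are hypothetical, and real attacks target deep networks using automatic differentiation.

```python
import math

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(w, b, x):
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)

def fgsm(w, b, x, y, eps):
    """Move each feature by eps in the direction that increases the
    cross-entropy loss for the true label y."""
    p = predict_proba(w, b, x)
    # For logistic regression, d(loss)/d(x_i) = (p - y) * w_i.
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad)]

# Hypothetical weights and input, chosen only for illustration.
w, b = [2.0, -3.0, 1.0], 0.0
x, y = [0.3, 0.1, 0.2], 1

x_adv = fgsm(w, b, x, y, eps=0.1)
clean_p = predict_proba(w, b, x)      # above 0.5: classified as class 1
adv_p = predict_proba(w, b, x_adv)    # below 0.5: classified as class 0
print(f"clean: {clean_p:.3f}  adversarial: {adv_p:.3f}")
```

Each feature moves by at most 0.1, yet the predicted class flips, because every small change is aligned with the direction the model is most sensitive to.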

In an evasion attack, the model is attacked at inference time with inputs designed to cause misclassification or unexpected behavior. In computer vision, these inputs are adversarial images. In NLP and LLMs, evasion attacks include prompt injection and jailbreaking.

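In a poisoning attack, the model or its data sources are attacked during training or retrieval, for example by inserting manipulated records into a training set or into the documents a retrieval system draws on.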

In model extraction, adversaries use repeated queries to learn a model’s decision boundaries, reconstruct training data, or replicate model capabilities. This is a competitive intelligence threat for proprietary AI systems.
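As a toy sketch of how repeated queries can recover a decision boundary, the example below binary-searches a hidden threshold using only the labels a black-box model returns. The `make_black_box` model and its threshold are hypothetical stand-ins; real models have high-dimensional boundaries and typically require far more queries and a trained surrogate model.

```python
def make_black_box(threshold: float):
    """Hypothetical proprietary model: returns a label, hides the threshold."""
    def query(x: float) -> int:
        return int(x >= threshold)
    return query

def extract_threshold(query, lo: float, hi: float, n_queries: int) -> float:
    """Binary-search the decision boundary using label-only queries.
    Assumes query(lo) == 0 and query(hi) == 1."""
    for _ in range(n_queries):
        mid = (lo + hi) / 2
        if query(mid):
            hi = mid  # boundary is at or below mid
        else:
            lo = mid  # boundary is above mid
    return (lo + hi) / 2

victim = make_black_box(threshold=0.618)
estimate = extract_threshold(victim, lo=0.0, hi=1.0, n_queries=20)
print(f"estimated boundary: {estimate:.6f}")
```

Twenty queries narrow the boundary to an interval of width 2^-20, which suggests why rate limiting and query monitoring are common mitigations.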

In membership inference, attackers determine whether specific data was used in training, which can reveal whether sensitive personal information was included in a training dataset.
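One simple form of this attack is a confidence-threshold heuristic: models that overfit tend to be more confident on records they were trained on. The sketch below assumes the attacker can observe the model's confidence on the true label; the record names, confidence values, and the 0.9 threshold are all hypothetical.

```python
def infer_membership(confidence_on_true_label: float,
                     threshold: float = 0.9) -> bool:
    """Guess 'was in the training set' when the model is unusually confident."""
    return confidence_on_true_label >= threshold

# Hypothetical confidences returned by a target model for three records.
observations = {"record_a": 0.99, "record_b": 0.62, "record_c": 0.95}

guesses = {name: infer_membership(conf) for name, conf in observations.items()}
print(guesses)
```

In practice the threshold would be calibrated, for instance against models trained on data the attacker controls, and the guesses are probabilistic rather than certain.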

Large language models face adversarial attacks that are distinct from classical ML adversarial examples:

Natural language attacks are human-readable. Unlike image perturbations (imperceptible pixel changes), effective LLM adversarial attacks often use coherent natural language — making them much harder to distinguish from legitimate inputs.

The attack surface is the instruction interface. LLMs are designed to follow instructions. Adversarial attacks exploit this by crafting inputs that look like legitimate instructions to the model but achieve attacker goals.

Gradient-based attacks are viable. For models with open weights or other white-box access, attackers can compute adversarial suffixes using gradient-guided optimization, the same family of techniques used to find adversarial image perturbations. Research has demonstrated that suffixes computed this way can also transfer to proprietary models.

Social engineering analog. Many LLM adversarial attacks resemble social engineering more than classical ML attacks — exploiting model tendencies toward helpfulness, consistency, and authority compliance.

Adversarial training includes adversarial examples in the training data to improve robustness. Safety alignment training for LLMs incorporates examples of prompt injection and jailbreaking attempts, teaching models to resist them. However, attack and defense form an arms race: new attacks regularly emerge that bypass current training.
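For classical models, the mechanics can be sketched as a training loop that augments every batch with adversarial copies crafted against the current parameters. The example below is a toy under stated assumptions: a two-feature logistic regression, a hypothetical dataset, and a fast-gradient-sign perturbation with an illustrative `eps`. It does not represent how LLM safety training is implemented.

```python
import math

def predict(w, b, x):
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def fgsm(w, b, x, y, eps):
    """Perturb x by eps per feature in the loss-increasing direction."""
    err = predict(w, b, x) - y
    return [xi + eps * ((err * wi > 0) - (err * wi < 0))
            for xi, wi in zip(x, w)]

def adversarial_train(data, eps, lr=0.5, epochs=200):
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        # Augment the clean batch with adversarial copies crafted
        # against the current parameters.
        batch = data + [(fgsm(w, b, x, y, eps), y) for x, y in data]
        grad_w, grad_b = [0.0, 0.0], 0.0
        for x, y in batch:
            err = predict(w, b, x) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / len(batch) for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / len(batch)
    return w, b

# Hypothetical, linearly separable toy data.
positives = [[1.0, 1.2], [0.8, 1.0], [1.2, 0.9], [0.9, 0.7]]
data = [(x, 1) for x in positives] + [([-a, -c], 0) for a, c in positives]

w, b = adversarial_train(data, eps=0.3)
# Check that every training point is still classified correctly after attack.
robust = all((predict(w, b, fgsm(w, b, x, y, 0.3)) > 0.5) == bool(y)
             for x, y in data)
print("robust to eps=0.3 on training points:", robust)
```

The key design choice is regenerating the adversarial copies every epoch, so the model is always trained against attacks on its current state rather than on a fixed set of old attacks.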

Formal verification techniques provide mathematical guarantees that a model’s classification of an input cannot change under any perturbation within a certain bound. These techniques are currently limited to smaller models and simpler input domains, but they remain an active research area.
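The simplest case where such a guarantee can be computed exactly is a linear classifier: the prediction cannot change unless the input crosses the decision hyperplane, so the distance to that hyperplane is a certified L2 radius. The sketch below uses hypothetical weights and a hypothetical input; certifying deep networks requires considerably heavier machinery.

```python
import math

def certified_l2_radius(w, b, x):
    """For f(x) = w.x + b, no perturbation with L2 norm below
    |f(x)| / ||w||_2 can change the sign of f."""
    margin = abs(sum(wi * xi for wi, xi in zip(w, x)) + b)
    norm = math.sqrt(sum(wi * wi for wi in w))
    return margin / norm

# Hypothetical linear classifier and input.
w, b = [3.0, 4.0], -1.0
x = [1.0, 2.0]

radius = certified_l2_radius(w, b, x)
print(f"certified L2 radius: {radius}")
```

Here the guarantee covers every possible perturbation inside the radius, not just the ones an attacker happened to try, which is what distinguishes certification from empirical robustness testing.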

Input sanitization removes or neutralizes potentially adversarial components before they reach the model. For LLMs, this includes detecting injection patterns and anomalous input structures.
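A minimal sketch of this idea for LLM inputs follows. It normalizes Unicode, strips non-printable characters that can hide text from simple filters, and flags a few phrasings. The pattern list is hypothetical and far from complete; pattern matching alone is easy to evade and would normally be one layer among several.

```python
import re
import unicodedata

# Hypothetical patterns for illustration only.
SUSPICIOUS_PATTERNS = [
    re.compile(r"ignore\s+(all\s+)?(previous|prior|above)\s+instructions", re.I),
    re.compile(r"you\s+are\s+now\s+", re.I),
    re.compile(r"reveal\s+(your\s+)?system\s+prompt", re.I),
]

def sanitize(text: str) -> str:
    """Normalize Unicode and drop non-printable characters such as
    zero-width spaces, keeping newlines and tabs."""
    text = unicodedata.normalize("NFKC", text)
    return "".join(ch for ch in text if ch.isprintable() or ch in "\n\t")

def flag_injection(text: str) -> list[str]:
    """Return the patterns that match the sanitized input."""
    cleaned = sanitize(text)
    return [p.pattern for p in SUSPICIOUS_PATTERNS if p.search(cleaned)]

print(flag_injection("Please ignore all previous instructions."))
print(flag_injection("What are your opening hours?"))
```

Sanitizing before matching matters: an input such as `"ig\u200bnore previous instructions"` only matches the first pattern once the zero-width space has been removed.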

Using multiple models and requiring agreement reduces adversarial transferability. An attack that fools one model is less likely to fool all models in an ensemble.
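The agreement requirement can be sketched as a vote with an abstain option. The three threshold "models" below are hypothetical stand-ins for independently trained classifiers, and `min_agreement` is an assumed parameter name.

```python
from collections import Counter

def ensemble_predict(models, x, min_agreement: float = 1.0):
    """Return the majority label only if enough models agree;
    otherwise return None to signal abstention."""
    votes = Counter(m(x) for m in models)
    label, count = votes.most_common(1)[0]
    if count / len(models) >= min_agreement:
        return label
    return None

# Hypothetical models with slightly different decision boundaries.
models = [
    lambda x: int(x > 0.50),
    lambda x: int(x > 0.55),
    lambda x: int(x > 0.45),
]

print(ensemble_predict(models, 0.9))    # all models agree
print(ensemble_predict(models, 0.52))   # models disagree, so abstain
```

Inputs near the boundary, where a small adversarial nudge could flip a single model, produce disagreement and can be routed to a fallback such as human review.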

Runtime detection flags adversarial inputs by identifying statistical anomalies or behavioral patterns inconsistent with normal use.
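One of the simplest statistical checks is a z-score against a baseline of normal traffic. The sketch below assumes a single hypothetical feature (input length in tokens) and an illustrative threshold of three standard deviations; production systems would typically combine many features and behavioral signals.

```python
import statistics

def fit_baseline(values):
    """Summarize normal traffic as (mean, sample standard deviation)."""
    return statistics.mean(values), statistics.stdev(values)

def is_anomalous(value, baseline, z_threshold: float = 3.0) -> bool:
    """Flag values more than z_threshold standard deviations from the mean."""
    mean, std = baseline
    return abs(value - mean) / std > z_threshold

# Hypothetical input lengths observed during normal use.
normal_lengths = [42, 55, 38, 61, 47, 50, 44, 58]
baseline = fit_baseline(normal_lengths)

print(is_anomalous(52, baseline))    # typical length
print(is_anomalous(900, baseline))   # far outside the baseline
```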

For organizations deploying AI chatbots, adversarial ML principles inform:

Adversarial examples are carefully crafted inputs designed to fool a machine learning model into making incorrect predictions. For image classifiers, this might be an image with imperceptible pixel changes that causes misclassification. For LLMs, adversarial examples include crafted prompts that trigger unsafe outputs or bypass safety filters.

LLM security is a specialized application of adversarial ML principles. Prompt injection and jailbreaking are adversarial attacks on LLMs — crafted inputs designed to cause incorrect or harmful behavior. Adversarial suffixes (computed strings that reliably jailbreak models) are a direct application of classical adversarial example research to language models.

Adversarial training is a defense technique that improves model robustness by including adversarial examples in the training dataset. The model learns to correctly handle inputs that were previously adversarial. For LLMs, this is incorporated into safety alignment training — models are trained on examples of attacks to learn to resist them.

Adversarial vulnerabilities in AI chatbots go beyond classic ML attacks. Our assessments cover prompt injection, jailbreaking, and all LLM-specific adversarial techniques.

