DesktopCommander MCP Server

DesktopCommander MCP Server empowers AI assistants like Claude with direct desktop automation, providing secure terminal control, file system search, and diff-b...

FlowHunt provides an additional security layer between your internal systems and AI tools, giving you granular control over which tools are accessible from your MCP servers. MCP servers hosted in our infrastructure can be seamlessly integrated with FlowHunt's chatbot as well as popular AI platforms like ChatGPT, Claude, and various AI editors.

The mcp-server-commands MCP (Model Context Protocol) Server lets AI assistants execute local or system commands securely. It exposes an interface for running shell commands, so AI clients can access external data, interact with the file system, perform diagnostics, or automate workflows directly from their environment. The server processes command requests from LLMs and returns the output, both STDOUT and STDERR, for further analysis or action. This supports development tasks such as listing directories, viewing system information, or running scripts, expanding what AI assistants can do for developers and power users.
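To make the request-and-response behavior concrete, here is a minimal Python sketch of what a command-execution tool of this kind might do internally. It is not the server's actual implementation (mcp-server-commands is an npm package); the function name, the `timeout` parameter, and the returned dictionary shape are assumptions chosen for illustration.

```python
import subprocess
from typing import Optional


def run_command(command: str, stdin: Optional[str] = None, timeout: float = 30.0) -> dict:
    """Run a shell command and capture its output (illustrative sketch only).

    The name, the timeout, and the result shape are hypothetical; they are not
    taken from the mcp-server-commands source.
    """
    completed = subprocess.run(
        command,
        shell=True,           # interpret the string with the system shell
        input=stdin,          # optional text piped to the command's STDIN
        capture_output=True,  # collect STDOUT and STDERR separately
        text=True,            # decode output as text instead of bytes
        timeout=timeout,      # raises subprocess.TimeoutExpired if exceeded
    )
    return {
        "exit_code": completed.returncode,
        "stdout": completed.stdout,
        "stderr": completed.stderr,
    }


if __name__ == "__main__":
    result = run_command('echo "hello world"')
    print(result["stdout"], end="")
```

Returning STDERR alongside STDOUT matters because a failing command often writes its only useful diagnostics to STDERR, and the assistant needs both streams to decide what to do next.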

No explicit resources are listed in the available documentation or code.

run_command: Runs a shell command (e.g., hostname, ls -al, echo "hello world") and returns STDOUT and STDERR as text. It supports an optional stdin parameter for passing input (such as code or file contents) to commands that accept it, which facilitates scripting and file operations.

- Run commands such as hostname or top to fetch system status or environment details directly from within the AI assistant.
- List directories (ls -al), create or read files, and manipulate text files using shell commands.
- Pass code or scripts to commands via stdin, enabling rapid prototyping or automation.

Install the mcp-server-commands package:

    npm install -g mcp-server-commands

Then add the server to your MCP client configuration:

    {
      "mcpServers": {
        "mcp-server-commands": {
          "command": "npx",
          "args": ["mcp-server-commands"]
        }
      }
    }

Install mcp-server-commands globally:

    npm install -g mcp-server-commands

Edit the Claude Desktop configuration file (macOS: ~/Library/Application Support/Claude/claude_desktop_config.json, Windows: %APPDATA%/Claude/claude_desktop_config.json) and add the server:

    {
      "mcpServers": {
        "mcp-server-commands": {
          "command": "npx",
          "args": ["mcp-server-commands"]
        }
      }
    }
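Editing the configuration file by hand risks overwriting servers that are already registered. The following Python sketch shows one way to merge the entry into an existing file instead. The helper name `add_server` and its parameters are hypothetical; only the `mcpServers` structure comes from the configuration shown above.

```python
import json
from pathlib import Path


def add_server(config_path, name="mcp-server-commands", command="npx", args=None):
    """Merge one server entry into an MCP client config file (hypothetical helper)."""
    path = Path(config_path)
    text = path.read_text(encoding="utf-8") if path.exists() else ""
    # Treat a missing or empty file as an empty configuration.
    config = json.loads(text) if text.strip() else {}
    # Keep any servers that are already registered.
    servers = config.setdefault("mcpServers", {})
    servers[name] = {
        "command": command,
        "args": list(args) if args is not None else ["mcp-server-commands"],
    }
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

Calling `add_server(path)` with the path of your client's configuration file adds or updates only the mcp-server-commands entry and leaves other entries untouched. Restarting the client afterwards is usually needed for the change to take effect.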

Install mcp-server-commands:

    npm install -g mcp-server-commands

Then add the server to your MCP configuration:

    {
      "mcpServers": {
        "mcp-server-commands": {
          "command": "npx",
          "args": ["mcp-server-commands"]
        }
      }
    }

    npm install -g mcp-server-commands

Then add the server to your MCP configuration:

    {
      "mcpServers": {
        "mcp-server-commands": {
          "command": "npx",
          "args": ["mcp-server-commands"]
        }
      }
    }

If you need to supply sensitive environment variables (e.g., API keys), use the env and inputs fields in your configuration:

    {
      "mcpServers": {
        "mcp-server-commands": {
          "command": "npx",
          "args": ["mcp-server-commands"],
          "env": {
            "EXAMPLE_API_KEY": "${EXAMPLE_API_KEY}"
          },
          "inputs": {
            "apiKey": "${EXAMPLE_API_KEY}"
          }
        }
      }
    }

Replace EXAMPLE_API_KEY with your actual environment variable name.
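How `${...}` placeholders are expanded depends on the MCP client, so the following Python sketch only illustrates the idea: it resolves placeholders in an `env` block against the current environment and fails early if a referenced variable is not set. The function name `resolve_env` and the placeholder pattern are assumptions, not part of any client's documented behavior.

```python
import os
import re

# Matches placeholders of the form ${VARIABLE_NAME} (assumed syntax).
_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def resolve_env(env_block, environ=None):
    """Expand ${NAME} placeholders in a config env block (illustrative sketch)."""
    environ = os.environ if environ is None else environ
    missing = []

    def substitute(match):
        name = match.group(1)
        if name not in environ:
            missing.append(name)
            return match.group(0)  # leave the placeholder untouched
        return environ[name]

    resolved = {key: _PLACEHOLDER.sub(substitute, value) for key, value in env_block.items()}
    if missing:
        # Fail before launching anything, so a secret is never silently empty.
        raise KeyError("unset environment variables: " + ", ".join(sorted(set(missing))))
    return resolved
```

A check like this can run before the server is started, which keeps the secret itself out of the configuration file while still catching a forgotten `export` early.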

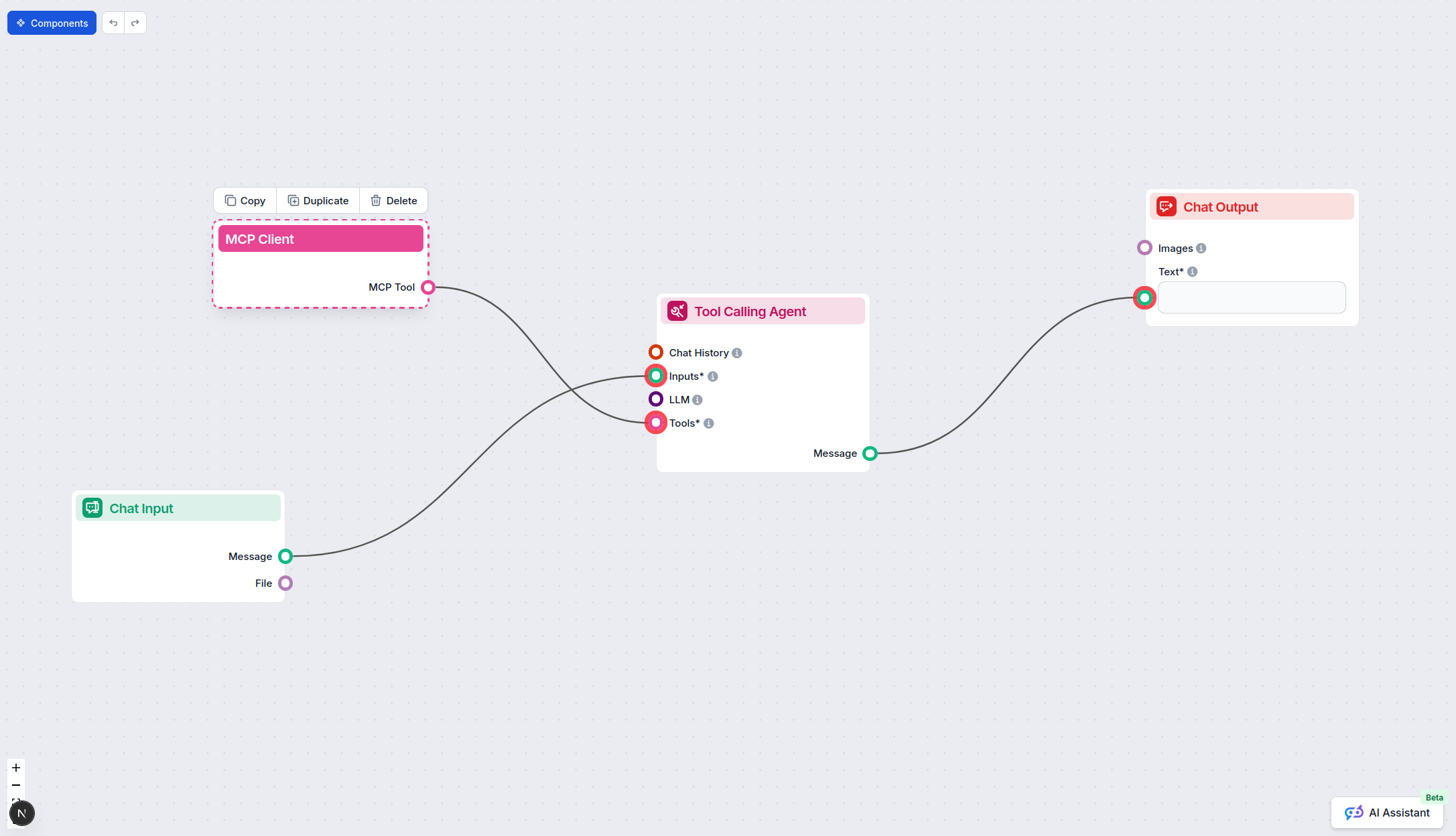

Using MCP in FlowHunt

To integrate MCP servers into your FlowHunt workflow, start by adding the MCP component to your flow and connecting it to your AI agent.

Click on the MCP component to open the configuration panel. In the system MCP configuration section, insert your MCP server details using this JSON format:

    {
      "mcp-server-commands": {
        "transport": "streamable_http",
        "url": "https://yourmcpserver.example/pathtothemcp/url"
      }
    }

Once configured, the AI agent can use this MCP server as a tool, with access to all its functions and capabilities. Remember to change "mcp-server-commands" to the actual name of your MCP server and to replace the URL with your own MCP server URL.
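For orientation, this is roughly what a tool invocation looks like on the wire. MCP uses JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The Python sketch below builds such a request for `run_command`; the helper name is hypothetical, and the argument name `command` is an assumption (the `stdin` parameter is the optional one described for the tool).

```python
import json
from typing import Optional


def build_run_command_request(command: str, stdin: Optional[str] = None, request_id: int = 1) -> str:
    """Build a JSON-RPC 2.0 tools/call request for run_command (illustrative sketch)."""
    # Argument names are assumptions; check the server's tool schema before relying on them.
    arguments = {"command": command}
    if stdin is not None:
        arguments["stdin"] = stdin
    request = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": "run_command", "arguments": arguments},
    }
    return json.dumps(request)


if __name__ == "__main__":
    print(build_run_command_request("hostname"))
```

In practice the AI agent or an MCP client library builds this message for you; the sketch is only meant to show which tool name and arguments travel to the server.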

| Section | Availability | Details/Notes |
|---|---|---|
| Overview | ✅ | Provides shell command execution as a tool for LLMs. |
| List of Prompts | ✅ | run_command |
| List of Resources | ⛔ | No explicit resources listed. |
| List of Tools | ✅ | run_command |
| Securing API Keys | ✅ | Supported via env and inputs in config. |
| Sampling Support (less important in evaluation) | ⛔ | Not mentioned in docs or code. |

Our opinion:

This MCP server is simple but highly effective for its purpose: giving LLMs access to the system shell in a controlled way. It is well-documented, easy to configure, and has clear security warnings. However, its scope is limited (one tool, no explicit resources or prompt templates beyond run_command), and advanced MCP features like Roots and Sampling are not referenced in the documentation or code. Overall, it is well-suited for developers looking for shell access through AI, but lacks broader extensibility.

| Criterion | Result |
|---|---|
| Has a LICENSE | ✅ (MIT) |
| Has at least one tool | ✅ |
| Number of Forks | 27 |
| Number of Stars | 159 |

Give your AI assistants secure, configurable shell access for automation, diagnostics, and file management with mcp-server-commands MCP Server.


The Windows CLI MCP Server bridges AI assistants with Windows command-line interfaces and remote systems via SSH, providing secure, programmable command executi...

The Terminal Controller MCP Server enables secure execution of terminal commands, directory navigation, and file system operations through a standardized interf...
