LLMs.txt: The Complete Guide to Optimizing Your Website for AI Agents

Learn how LLMs.txt files help AI agents navigate your website efficiently, prioritize important content, and improve AI-driven visibility for your business.

Private LLM deployments go stale. Knowledge gaps cause incidents. Compliance teams need audit trails. We offer a complete suite of managed services to keep your model accurate, compliant, and trustworthy — without GPU retraining.

Every service in our catalog operates directly on model weights — no retraining, no retrieval infrastructure required. Updates are lightweight, instant, and fully auditable.

Private LLM deployments go stale: questions about new products, changed prices, or updated policies get wrong answers. RAG adds complexity and latency. Full retraining is expensive. Our subscription service applies targeted knowledge updates directly to your deployed model weights: lightweight, instant, and audit-ready.

Enterprises often don't know what an LLM actually knows about their domain before deploying it, so gaps and errors come to light only when customers complain. Our structured audit scans the model's internal knowledge across your defined topic areas and delivers a clear report before go-live.

Healthcare, finance, and legal companies face strict regulatory requirements around AI accuracy and explainability. Our industry-specific compliance package combines pre-deployment audit, audit trail generation, ongoing knowledge drift monitoring, and incident response under a single enterprise SLA.

Many companies use RAG for knowledge that rarely changes — adding latency, retrieval errors, and infrastructure costs for facts that could simply be in the model itself. Our one-time embedding service places stable company knowledge directly into model weights with no retrieval overhead.

Companies evaluating which open-source model to deploy run expensive, time-consuming benchmarks that test general capability — not domain-specific knowledge. Our comparison service evaluates multiple candidate models against your actual knowledge requirements and delivers a scored recommendation.

When an LLM deployment causes a real problem — wrong medical advice, incorrect legal guidance, defamatory output — you need to explain what happened, why, and what you did to fix it. Our forensic analysis traces the root cause, produces a report suitable for regulatory or legal review, and implements a targeted fix.

SERVICE TIERS

Our services map to three stages of the enterprise LLM lifecycle: evaluation, deployment, and ongoing compliance.

Evaluation: pre-deployment audit and model procurement comparison. For companies evaluating open-source models before committing to a deployment. Per-engagement pricing.

Deployment: model knowledge maintenance subscription and static knowledge embedding. For companies with deployed models that need to stay accurate over time. Monthly subscription.

Ongoing compliance: full compliance package, hallucination forensics, audit trail generation, and incident response. For regulated industries with strict accuracy and explainability requirements. Annual enterprise contract.

Tell us about your model, your industry, and your challenge. We'll identify the right service and provide a scoping proposal within 2 business days.

Whether you're deploying a model for the first time or operating a production LLM that's drifted from the truth, we have a service to match. Tell us what you're dealing with.

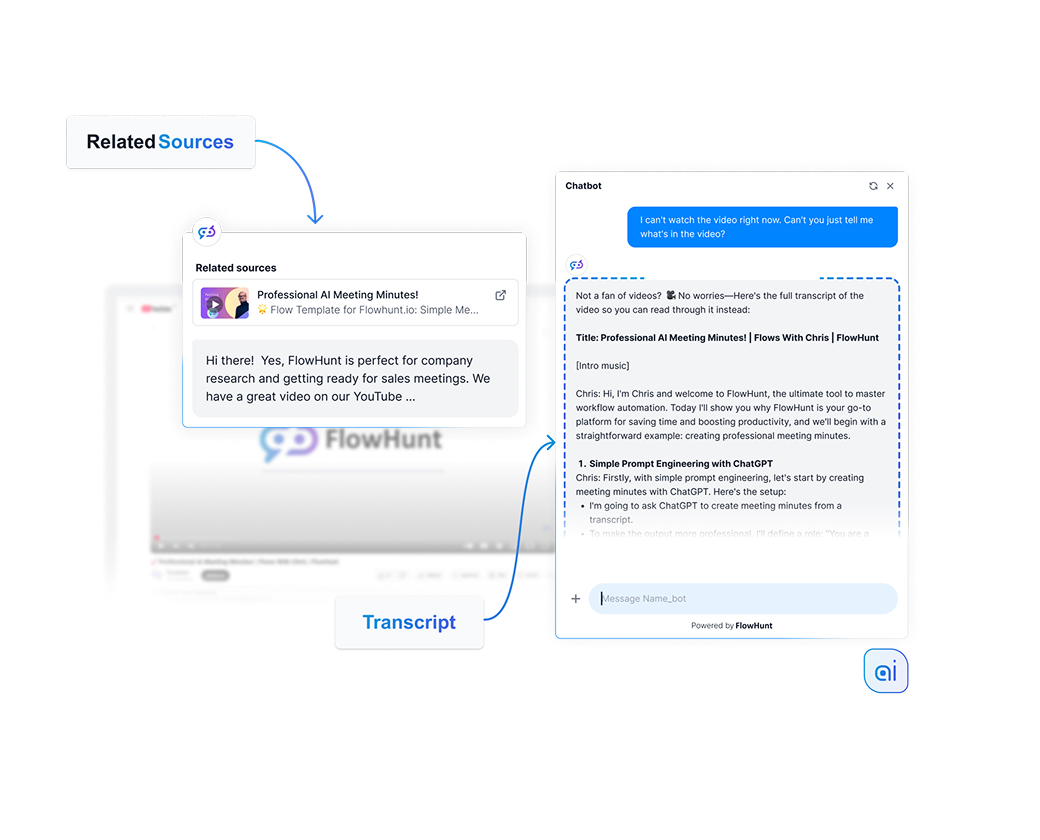
