LSP MCP Server Integration

The LSP MCP Server connects Language Server Protocol (LSP) servers to AI assistants, enabling advanced code analysis, intelligent completion, diagnostics, and e...

A minimal, functional MCP server for Oat++ that enables AI agents to interact with API endpoints, manage files, and automate workflows using standardized tools and prompt templates.

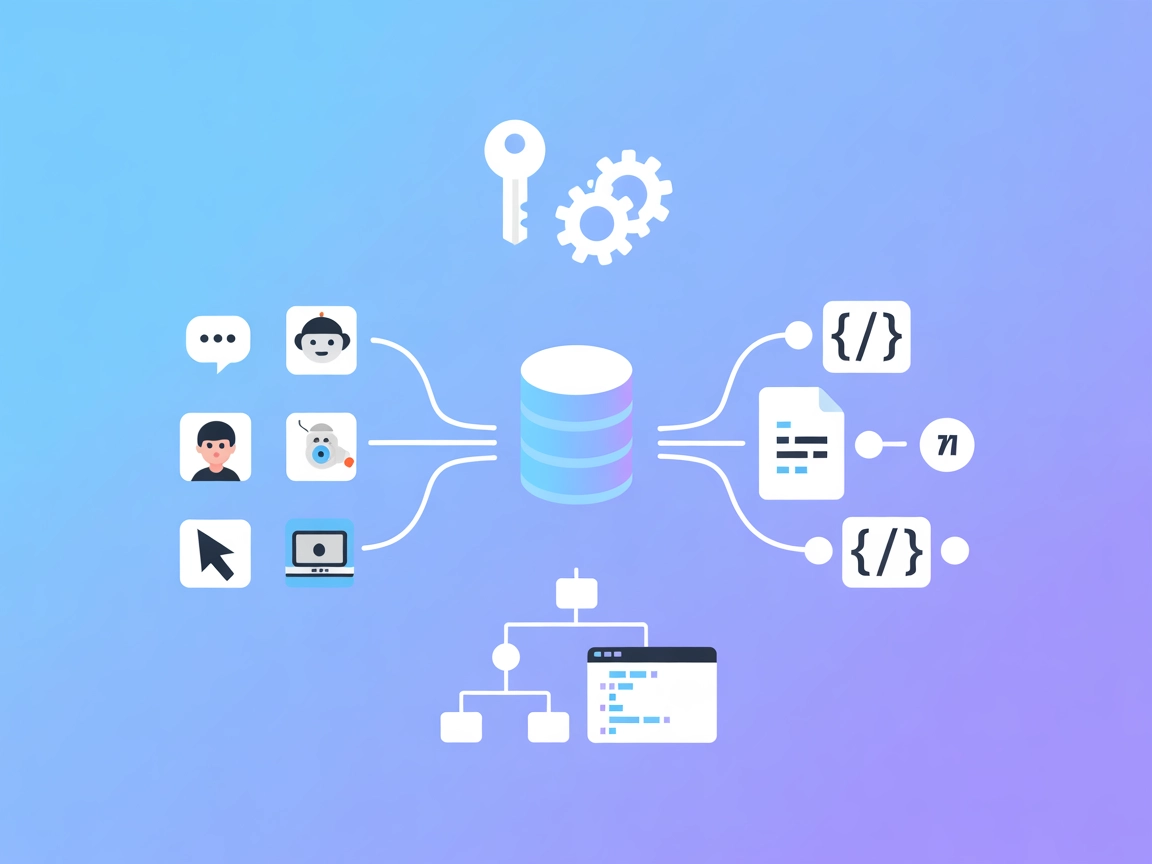

FlowHunt provides an additional security layer between your internal systems and AI tools, giving you granular control over which tools are accessible from your MCP servers. MCP servers hosted in our infrastructure can be seamlessly integrated with FlowHunt's chatbot as well as popular AI platforms like ChatGPT, Claude, and various AI editors.

The oatpp-mcp MCP Server is an implementation of Anthropic’s Model Context Protocol (MCP) for the Oat++ web framework. It acts as a bridge between AI assistants and external APIs or services, enabling seamless integration and interaction. By exposing Oat++ API controllers and resources through the MCP protocol, oatpp-mcp allows AI agents to perform tasks such as querying APIs, managing files, and leveraging server-side tools. This enhances development workflows by enabling large language models (LLMs) and clients to access and manipulate backend data, automate operations, and standardize interactions through reusable prompt templates and workflows. The server can be run over STDIO or HTTP SSE, making it flexible for different deployment environments.
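To make the transport description more concrete, here is a minimal sketch of the messages an MCP client would write to a server over STDIO. It relies only on what the MCP specification defines (JSON-RPC 2.0 envelopes, one message per line, the `initialize`, `notifications/initialized`, and `tools/list` methods). The client name, version, and protocol revision are illustrative assumptions, and nothing here is taken from the oatpp-mcp source.

```python
import json
from itertools import count

_ids = count(1)  # request ids only need to be unique per session


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request, the envelope MCP uses for calls."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def jsonrpc_notification(method):
    """Build a JSON-RPC 2.0 notification (no id, so no response is expected)."""
    return {"jsonrpc": "2.0", "method": method}


def frame_for_stdio(message):
    """Serialize one message per line, with no embedded newlines."""
    return json.dumps(message, separators=(",", ":")) + "\n"


# Handshake first, then discovery of the tools the server exposes.
initialize = jsonrpc_request(
    "initialize",
    {
        "protocolVersion": "2024-11-05",  # assumed revision; use the one your client supports
        "capabilities": {},
        "clientInfo": {"name": "example-client", "version": "0.1.0"},  # hypothetical client
    },
)
initialized = jsonrpc_notification("notifications/initialized")
list_tools = jsonrpc_request("tools/list")

for message in (initialize, initialized, list_tools):
    print(frame_for_stdio(message), end="")
```

A real client would wait for the server's response to `initialize` before sending the notification and the `tools/list` request. Over HTTP SSE the same JSON-RPC messages are exchanged, only the framing differs.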

(No other resources are explicitly listed in the available documentation.)

(No other tools are explicitly listed in the available documentation.)

In your client's configuration file (for example, settings.json), add the server to the mcpServers object:

    {
      "mcpServers": {
        "oatpp-mcp": {
          "command": "oatpp-mcp",
          "args": []
        }
      }
    }
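If the configuration file already lists other servers, the entry has to be merged in rather than pasted over the whole object. The following is a minimal sketch of that merge, assuming the settings have already been loaded into a dictionary. The helper name `add_mcp_server` and the `other-server` entry are hypothetical and serve only as illustration.

```python
import json
from copy import deepcopy


def add_mcp_server(settings, name, command, args=None):
    """Return a copy of `settings` with one server added under "mcpServers"."""
    updated = deepcopy(settings)  # leave the caller's dictionary untouched
    servers = updated.setdefault("mcpServers", {})
    servers[name] = {"command": command, "args": list(args or [])}
    return updated


# Hypothetical existing settings with an unrelated server already present.
existing = {"mcpServers": {"other-server": {"command": "other", "args": []}}}

merged = add_mcp_server(existing, "oatpp-mcp", "oatpp-mcp")
print(json.dumps(merged, indent=2))
```

Working on a copy keeps the original settings available in case the write to disk fails or the result needs to be reviewed first.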

Securing API Keys

    {
      "mcpServers": {
        "oatpp-mcp": {
          "command": "oatpp-mcp",
          "env": {
            "API_KEY": "env:OATPP_API_KEY"
          },
          "inputs": {
            "api_key": "${API_KEY}"
          }
        }
      }
    }
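The example above keeps the secret out of the file by pointing at an environment variable. How a given client expands these references is not documented here, so the sketch below is only one plausible reading: it assumes an `env:NAME` value means "read `NAME` from the process environment" and that `${KEY}` in `inputs` refers to a key of the `env` block. The function name `resolve_server_entry` is hypothetical.

```python
import re

_PLACEHOLDER = re.compile(r"\$\{([A-Za-z_][A-Za-z0-9_]*)\}")


def resolve_server_entry(entry, environ):
    """Resolve "env:NAME" values, then substitute ${KEY} placeholders in "inputs".

    Assumed semantics only; check your client's documentation for the real rules.
    """
    resolved_env = {}
    for key, value in entry.get("env", {}).items():
        if value.startswith("env:"):
            source = value[len("env:"):]
            if source not in environ:
                raise KeyError(f"environment variable {source} is not set")
            resolved_env[key] = environ[source]
        else:
            resolved_env[key] = value  # literal value, passed through unchanged

    def substitute(text):
        # An unknown ${KEY} raises KeyError instead of silently staying unresolved.
        return _PLACEHOLDER.sub(lambda m: resolved_env[m.group(1)], text)

    inputs = {k: substitute(v) for k, v in entry.get("inputs", {}).items()}
    return {**entry, "env": resolved_env, "inputs": inputs}


entry = {
    "command": "oatpp-mcp",
    "env": {"API_KEY": "env:OATPP_API_KEY"},
    "inputs": {"api_key": "${API_KEY}"},
}
# A fake environment is passed in here; in practice this would be os.environ.
resolved = resolve_server_entry(entry, {"OATPP_API_KEY": "example-secret"})
```

Failing loudly on a missing variable is a deliberate choice in this sketch: a server started with an empty key tends to produce confusing authentication errors later.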

    {
      "mcpServers": {
        "oatpp-mcp": {
          "command": "oatpp-mcp",
          "args": []
        }
      }
    }

Securing API Keys

Follow the same pattern as in Windsurf.

    {
      "mcpServers": {
        "oatpp-mcp": {
          "command": "oatpp-mcp",
          "args": []
        }
      }
    }

Securing API Keys

Same as above.

    {
      "mcpServers": {
        "oatpp-mcp": {
          "command": "oatpp-mcp",
          "args": []
        }
      }
    }

Securing API Keys

Same as above.

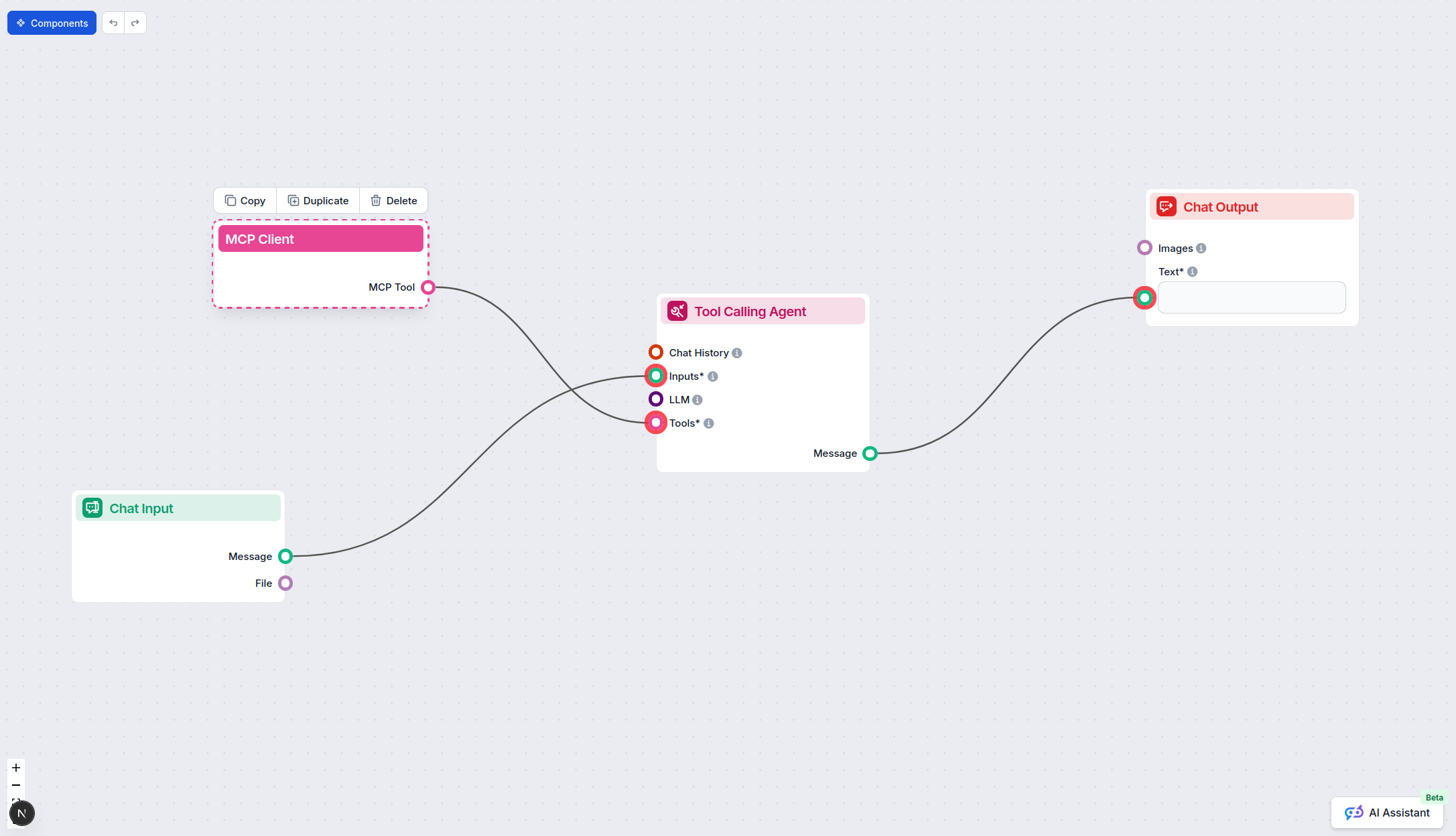

Using MCP in FlowHunt

To integrate MCP servers into your FlowHunt workflow, start by adding the MCP component to your flow and connecting it to your AI agent.

Click on the MCP component to open the configuration panel. In the system MCP configuration section, insert your MCP server details using this JSON format:

    {
      "oatpp-mcp": {
        "transport": "streamable_http",
        "url": "https://yourmcpserver.example/pathtothemcp/url"
      }
    }

Once configured, the AI agent can use this MCP server as a tool, with access to all of its functions and capabilities. Remember to change “oatpp-mcp” to the actual name of your MCP server and to replace the URL with your own MCP server URL.
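Because the placeholder URL is easy to leave in by accident, a quick check before pasting the configuration can help. The sketch below validates the shape shown above. It assumes `streamable_http` is the expected transport value only because that is the one this page shows, and the function name `check_flowhunt_mcp_config` is hypothetical, not part of FlowHunt.

```python
from urllib.parse import urlparse


def check_flowhunt_mcp_config(config):
    """Return a list of problems found in a {name: {transport, url}} mapping."""
    problems = []
    for name, entry in config.items():
        transport = entry.get("transport")
        if transport != "streamable_http":  # assumed value, taken from the example
            problems.append(f"{name}: unexpected transport {transport!r}")
        parsed = urlparse(entry.get("url", ""))
        if parsed.scheme not in ("http", "https") or not parsed.hostname:
            problems.append(f"{name}: url is not an absolute http(s) URL")
        elif parsed.hostname.endswith(".example"):
            # .example is a reserved TLD, so this is still the placeholder.
            problems.append(f"{name}: url still points at the placeholder host")
    return problems


sample = {
    "oatpp-mcp": {
        "transport": "streamable_http",
        "url": "https://yourmcpserver.example/pathtothemcp/url",
    }
}
for problem in check_flowhunt_mcp_config(sample):
    print(problem)
```

Run against the sample from this page, the check reports the placeholder host, which is the reminder to substitute your own server URL.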

| Section | Availability | Details/Notes |
|---|---|---|
| Overview | ✅ | |
| List of Prompts | ✅ | Only “CodeReview” explicitly mentioned |
| List of Resources | ✅ | Only “File” resource explicitly mentioned |
| List of Tools | ✅ | Only “Logger” tool explicitly mentioned |
| Securing API Keys | ✅ | Example provided for securing API keys using environment variables |
| Sampling Support (less important in evaluation) | ⛔ | Not mentioned |

Based on the documentation, oatpp-mcp provides a minimal but functional MCP server implementation that covers the protocol’s basics (prompts, resources, tools, and setup), but there is no evidence of advanced features such as sampling or roots. The documentation is clear on the essentials but limited in scope and detail.

| Criterion | Result |
|---|---|
| Has a LICENSE | ✅ (Apache-2.0) |
| Has at least one tool | ✅ |
| Number of Forks | 3 |
| Number of Stars | 41 |

Our opinion:

oatpp-mcp offers a clean, functional, and compliant MCP implementation for Oat++. While it covers the essentials (with at least one tool, prompt, and resource), it is not feature-rich and lacks documentation or evidence for roots, sampling, or a broader set of primitives. It is a good starting point for Oat++ users but may require extension for advanced workflows.

Rating:

6/10 – Good foundation and protocol compliance, but limited in feature exposure and extensibility based on available documentation.

Integrate oatpp-mcp in your FlowHunt flows to standardize AI agent access to APIs, files, and tools. Start automating backend tasks and streamline code review, logging, and data operations.
