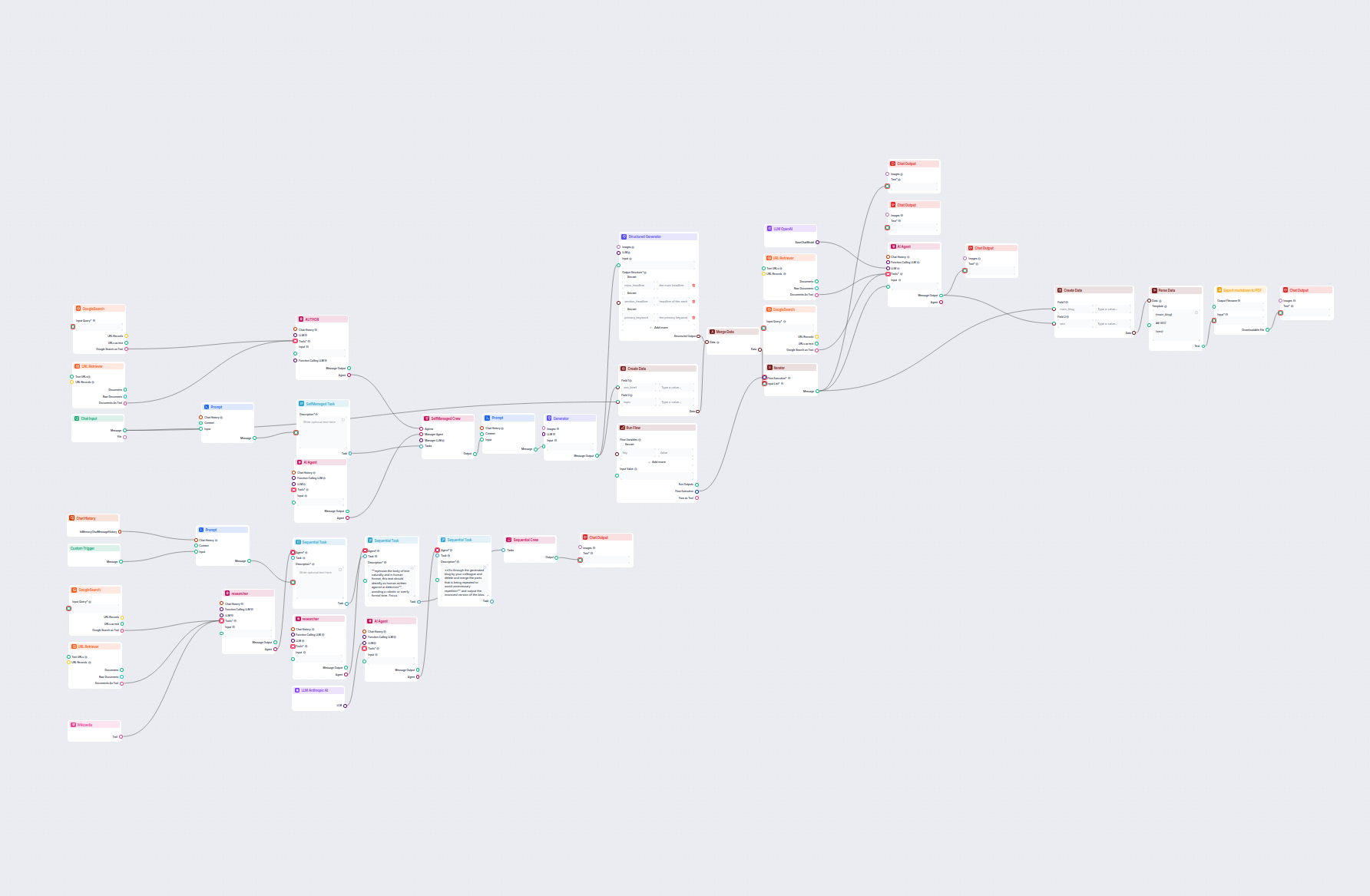

Advanced AI Blog Post Generator

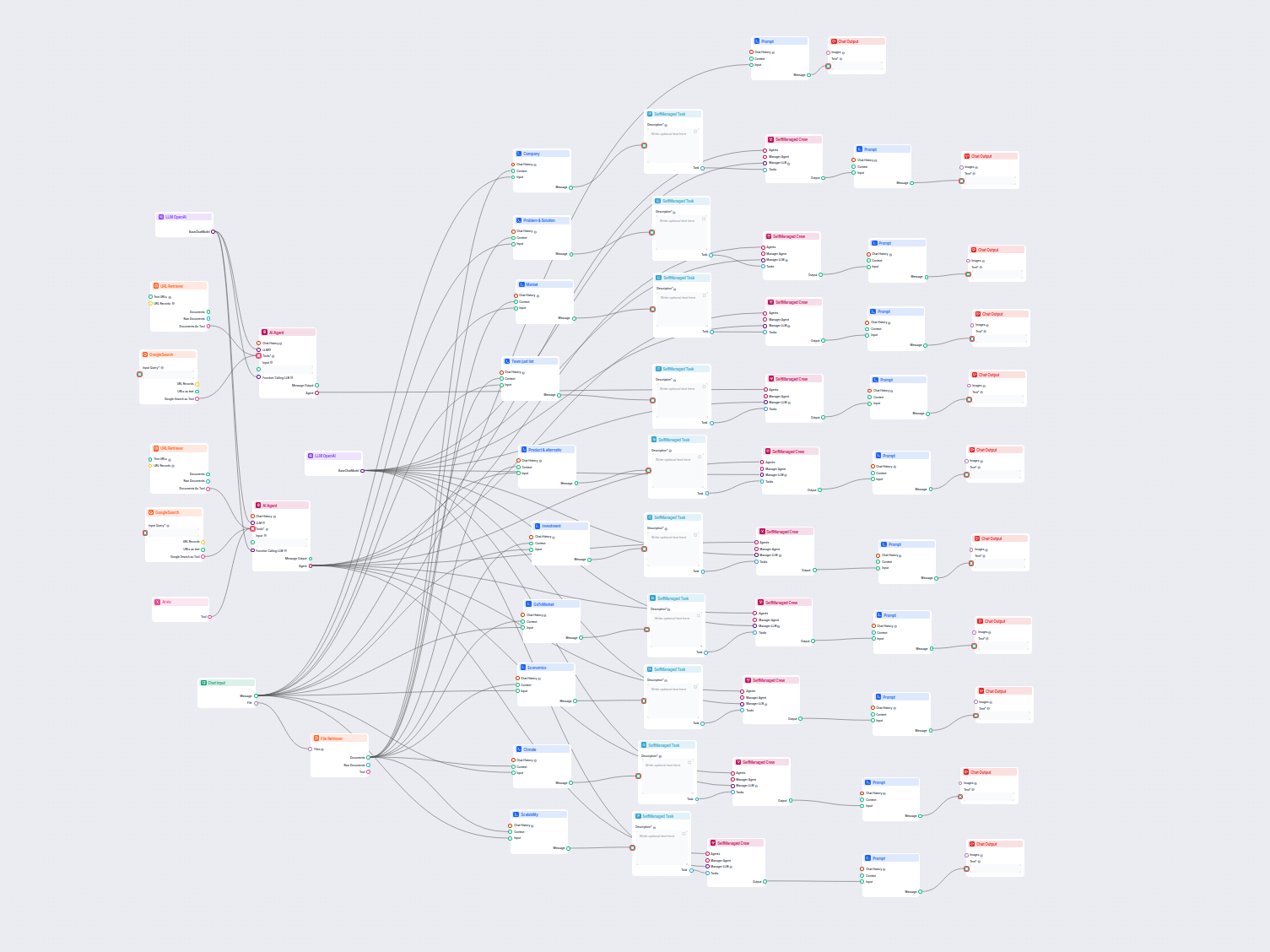

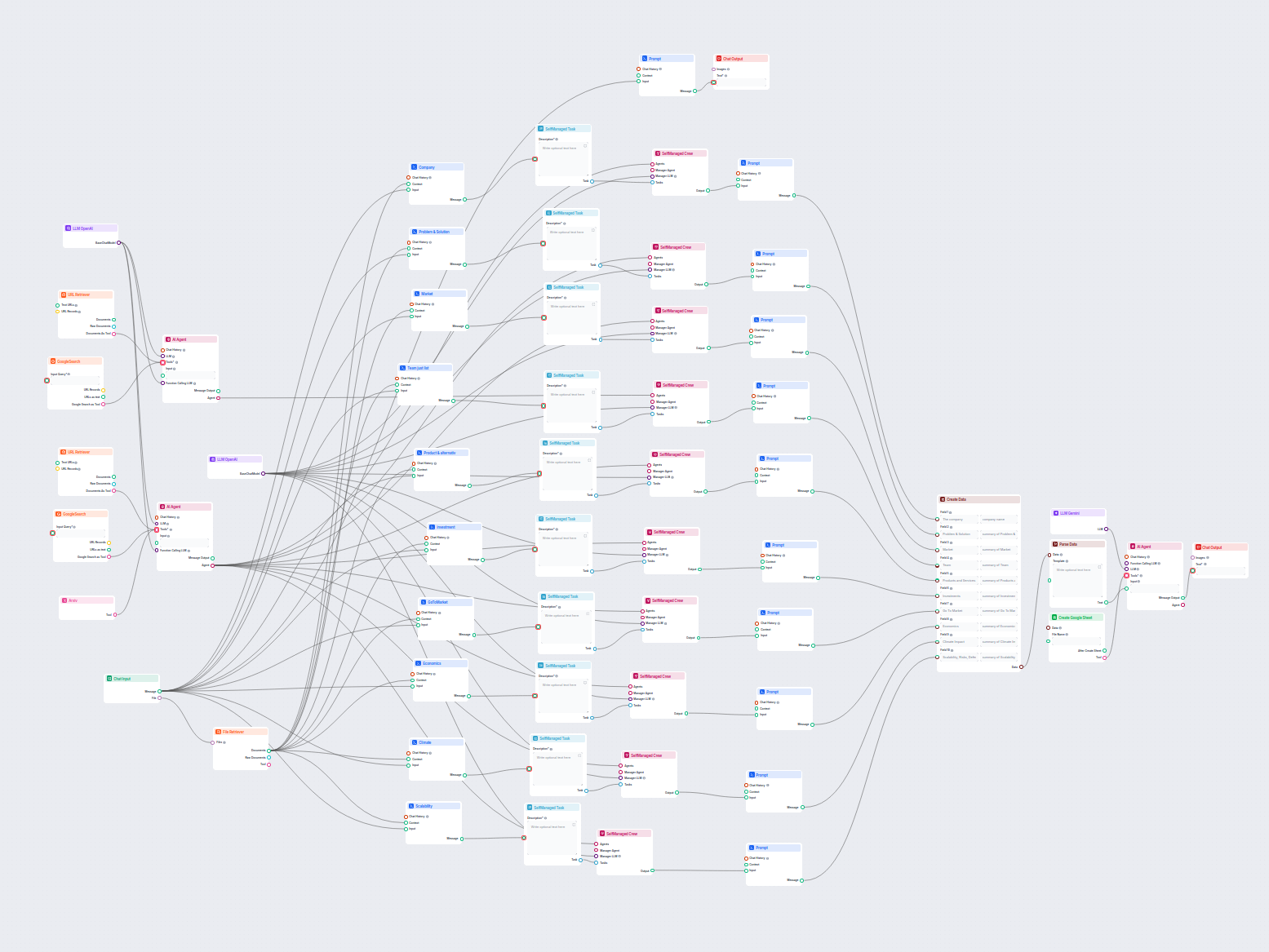

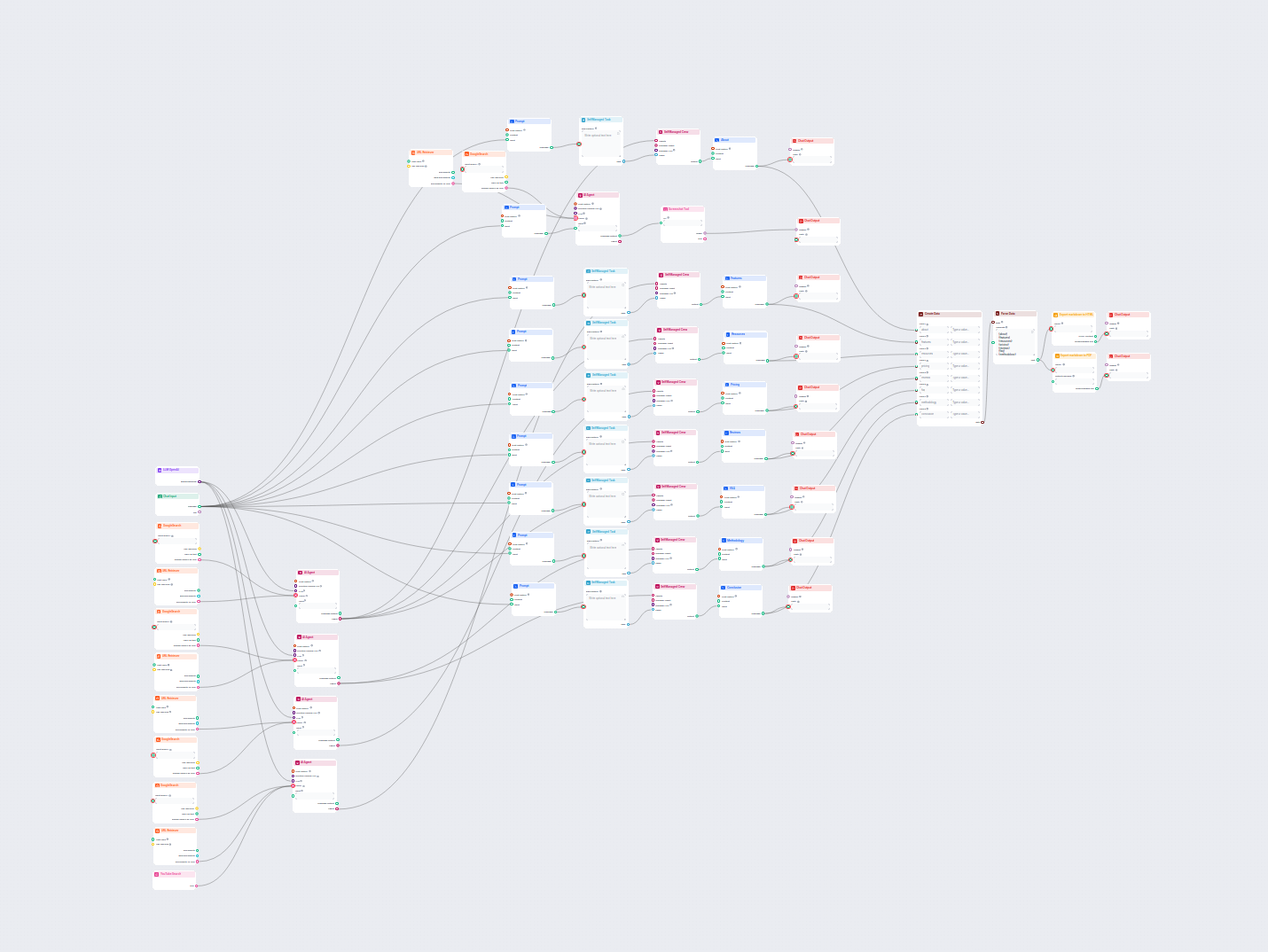

Generate comprehensive, SEO-optimized blog posts with advanced structure and high word count using multiple AI agents. The workflow includes automated research,...

Component description

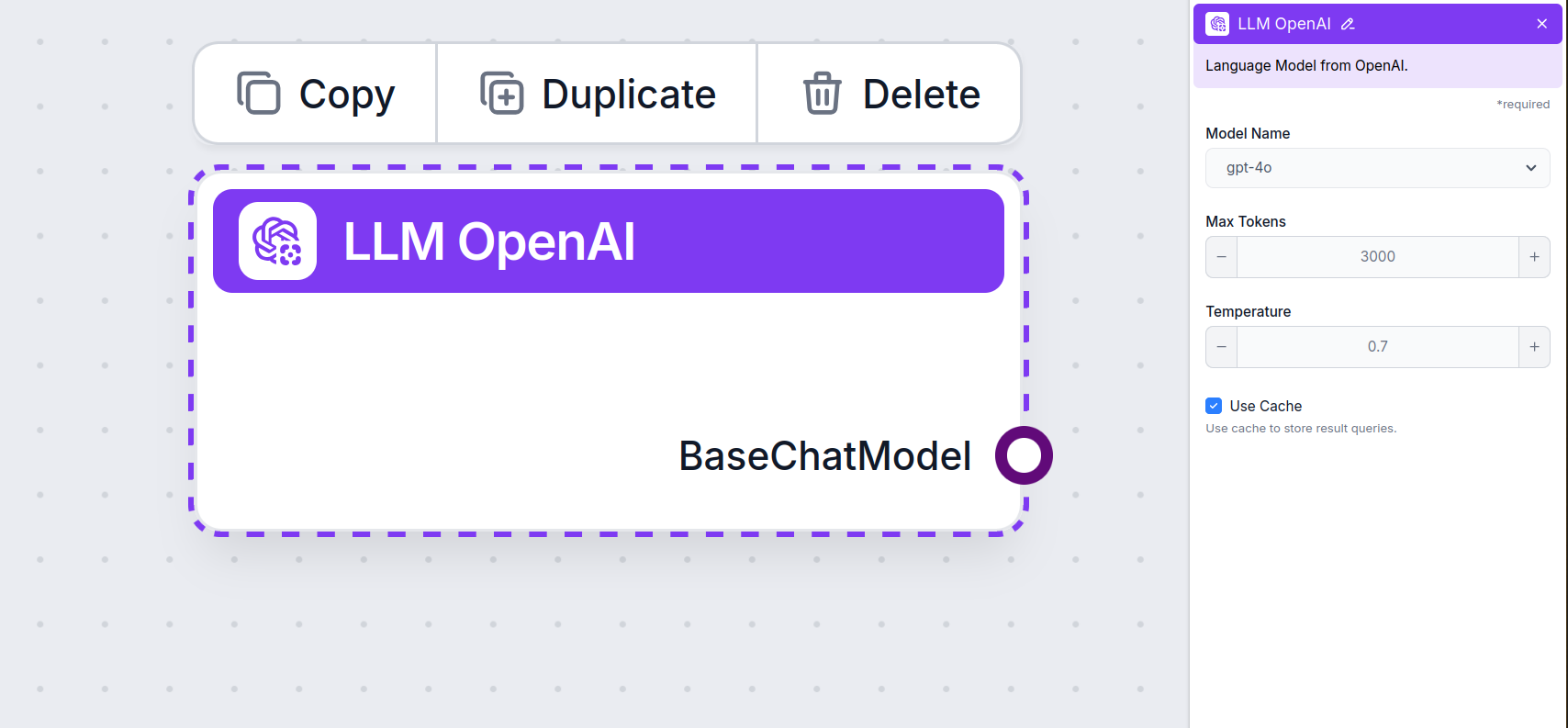

The LLM OpenAI component connects OpenAI's ChatGPT models to your Flow. Generators and Agents do the actual text generation, while LLM components let you control which model they use. All components use ChatGPT-4 by default, so you only need to connect this component if you want to change the model or gain more control over its settings.

This is the model picker. Here, you’ll find all OpenAI models FlowHunt supports. OpenAI offers a range of models that differ in capability and price. For example, the older and less advanced GPT-3.5 costs less than the newest GPT-4o, but the quality and speed of the output will suffer.

OpenAI models available in FlowHunt:

When choosing the right model for the task, consider the quality and speed the task requires. Older models are great for saving money on simple bulk tasks and chatting. If you’re generating content or searching the web, we suggest you opt for a newer, more refined model.

Tokens are the individual units of text the model processes and generates. How text is split into tokens varies between models, and a single token can be a whole word, a subword, or a single character. Models are usually priced per million tokens.
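To make per-million pricing concrete, here is a minimal sketch of the arithmetic. The function name and the prices are hypothetical placeholders, not FlowHunt or OpenAI figures; check the current price list of the model you pick.

```python
def estimate_cost(input_tokens: int, output_tokens: int,
                  input_price_per_million: float,
                  output_price_per_million: float) -> float:
    """Return the estimated cost of one request in the price list's currency."""
    input_cost = input_tokens / 1_000_000 * input_price_per_million
    output_cost = output_tokens / 1_000_000 * output_price_per_million
    return input_cost + output_cost


# Hypothetical prices: 2.50 per million input tokens, 10.00 per million output tokens.
cost = estimate_cost(input_tokens=3_000, output_tokens=1_000,
                     input_price_per_million=2.50,
                     output_price_per_million=10.00)
print(f"Estimated cost: {cost:.4f}")
```

Input and output tokens are kept separate because providers often price them differently. If your model uses a single rate, pass the same price twice.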

The max tokens setting limits the total number of tokens that can be processed in a single interaction or request, which keeps responses within reasonable bounds. The default limit is 4,000 tokens, which is well suited to summarizing documents and combining several sources into one answer.
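One way to picture a token limit is as a budget shared between the material you send and the answer you expect back. The sketch below is an illustration only, not how FlowHunt handles the limit internally. Both helper names are hypothetical, and the four-characters-per-token estimate is a rough rule of thumb for English text, not a real tokenizer.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate (assumption: about 4 characters per token)."""
    return max(1, len(text) // 4)


def select_sources(sources: list[str], max_tokens: int = 4000,
                   reserved_for_answer: int = 1000) -> tuple[list[str], int]:
    """Keep sources, in order, until the input budget would be exceeded."""
    budget = max_tokens - reserved_for_answer
    chosen: list[str] = []
    used = 0
    for source in sources:
        cost = estimate_tokens(source)
        if used + cost > budget:
            break  # the next source no longer fits in the budget
        chosen.append(source)
        used += cost
    return chosen, used


documents = ["a" * 4000, "b" * 4000, "c" * 8000]
chosen, used = select_sources(documents)
print(f"{len(chosen)} sources kept, about {used} input tokens used")
```

With a higher limit, more sources fit into one request, at the price of higher token usage per interaction.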

Temperature controls the variability of answers, ranging from 0 to 1.

A low temperature of 0.1 makes responses focused and to the point, but potentially repetitive and lacking in detail.

A high temperature of 1 allows for maximum creativity in answers but increases the risk of irrelevant or even hallucinated responses.

For example, the recommended temperature for a customer service bot is between 0.2 and 0.5. This level should keep the answers relevant and to the script while allowing for a natural level of variation in responses.
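Temperature is commonly described as a scaling factor applied to the model's raw scores before the next token is sampled. The sketch below shows that general idea with made-up scores for three candidate tokens. It is a simplified illustration under that assumption, not a description of OpenAI's internal implementation, and it only handles temperatures above 0.

```python
import math


def softmax_with_temperature(logits: list[float], temperature: float) -> list[float]:
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature <= 0:
        raise ValueError("this sketch only supports temperature > 0")
    scaled = [score / temperature for score in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


# Made-up scores for three candidate tokens.
logits = [2.0, 1.0, 0.5]
low = softmax_with_temperature(logits, 0.1)
high = softmax_with_temperature(logits, 1.0)
print("temperature 0.1:", [round(p, 3) for p in low])
print("temperature 1.0:", [round(p, 3) for p in high])
```

At 0.1, almost all of the probability lands on the top-scoring token, which is why answers become predictable. At 1, the alternatives keep a meaningful share, which is where the extra variety comes from.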

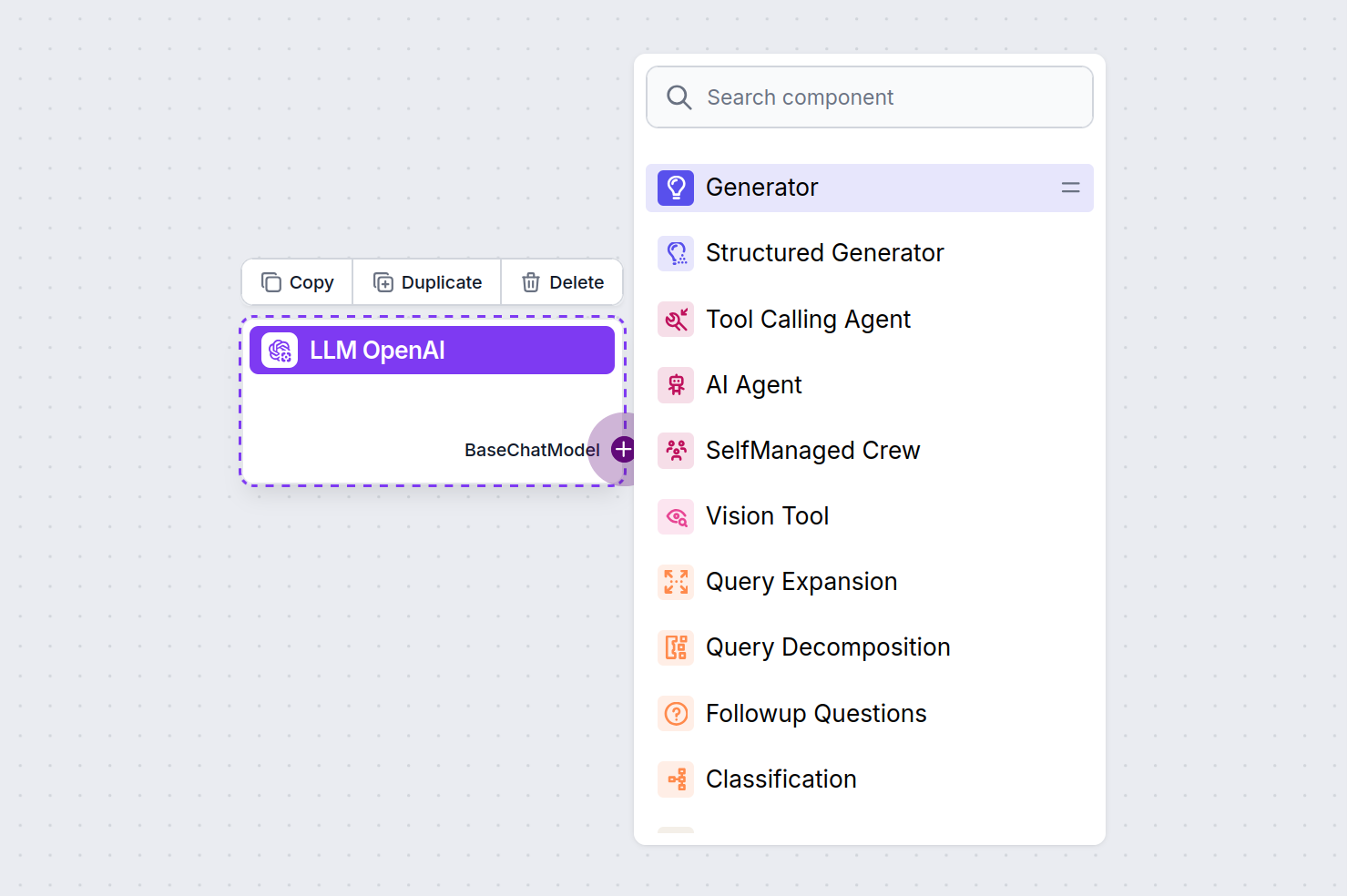

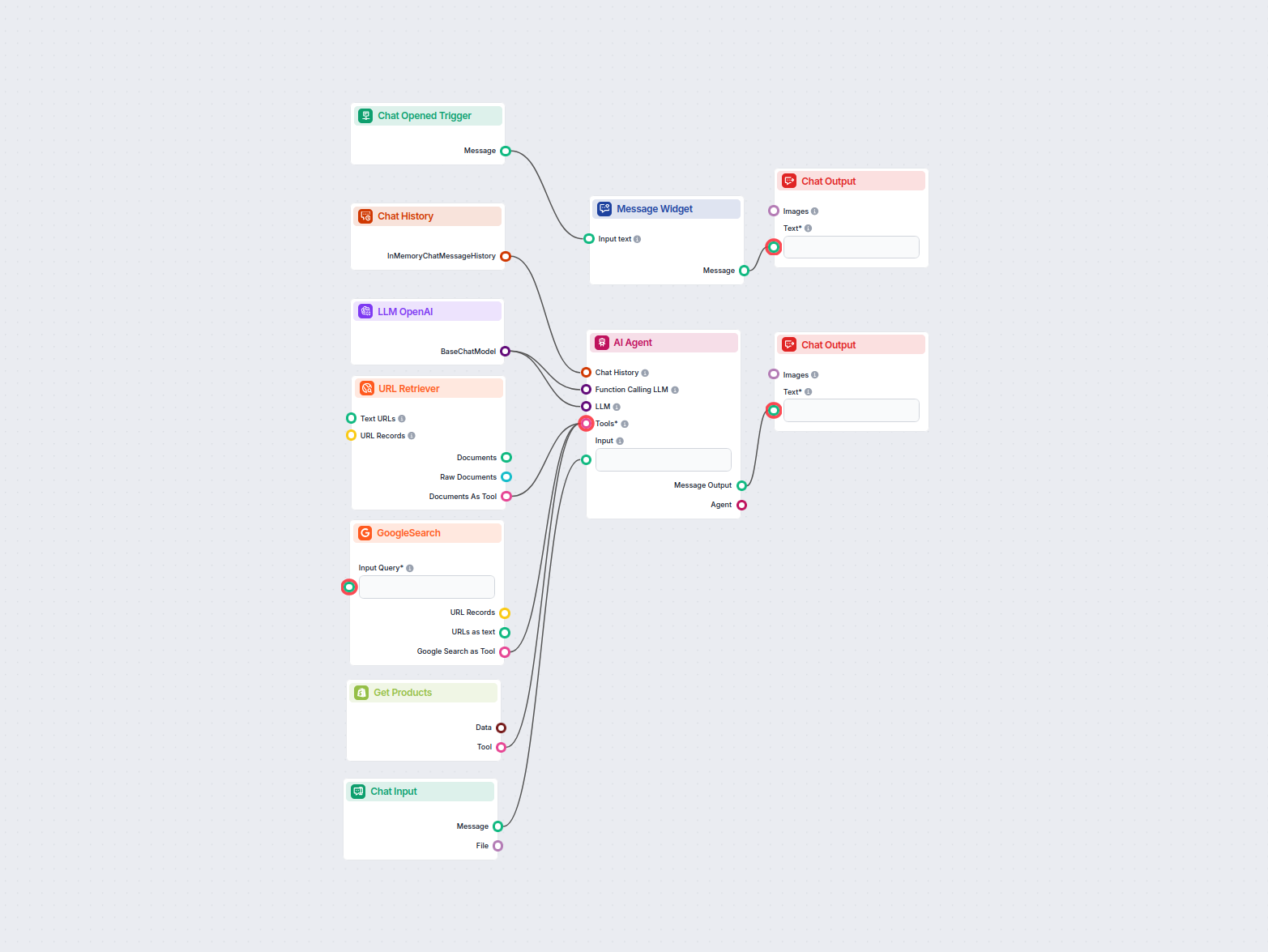

You’ll notice that all LLM components only have an output handle. Input doesn’t pass through the component, as it only represents the model, while the actual generation happens in AI Agents and Generators.
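The split between the model and the component that uses it can be sketched in code. Everything here is hypothetical and only mirrors the idea: `LLMSettings` and `Generator` are illustrative names, not FlowHunt classes, and the default model string and temperature are placeholders. The request is returned instead of being sent, so the sketch runs without an API key.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LLMSettings:
    """Only describes a model; it has no input and generates nothing itself."""
    model: str = "gpt-4o"      # placeholder default model name
    max_tokens: int = 4000     # default limit mentioned in this article
    temperature: float = 0.3   # placeholder default


class Generator:
    """Receives the user input and applies whichever LLM settings are attached."""

    def __init__(self, llm: Optional[LLMSettings] = None) -> None:
        # With no LLM component attached, fall back to the defaults.
        self.llm = llm or LLMSettings()

    def build_request(self, user_input: str) -> dict:
        """Return the request this generator would send to the model."""
        return {
            "model": self.llm.model,
            "max_tokens": self.llm.max_tokens,
            "temperature": self.llm.temperature,
            "messages": [{"role": "user", "content": user_input}],
        }


default_request = Generator().build_request("Hello!")
custom_request = Generator(LLMSettings(model="gpt-3.5-turbo")).build_request("Hello!")
print(default_request["model"], custom_request["model"])
```

The user input only ever reaches the generator. Swapping the settings object changes which model answers without touching the rest of the flow.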

The LLM handle is always purple. The LLM input handle is found on any component that uses AI to generate text or process data. You can see the options by clicking the handle:

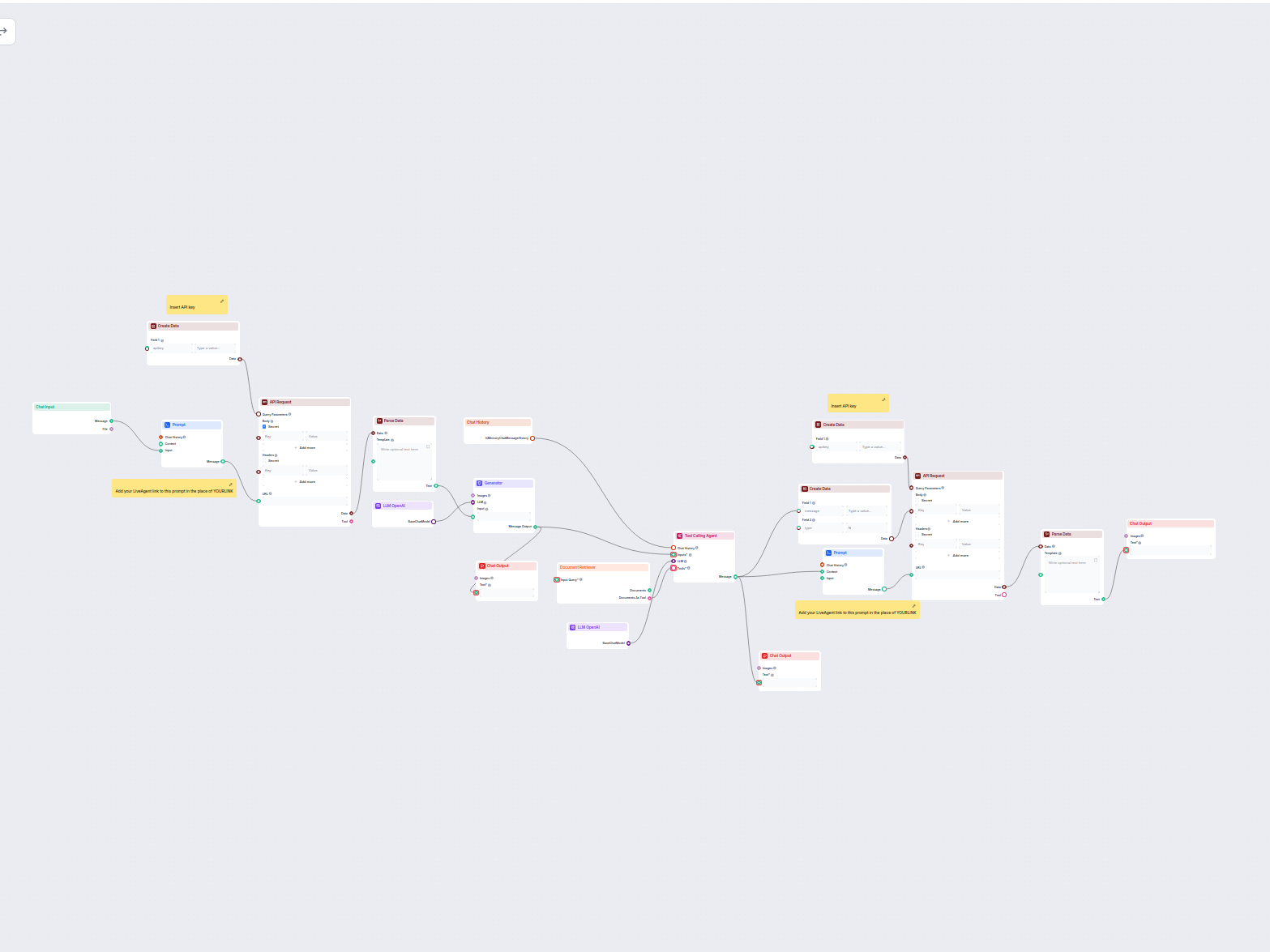

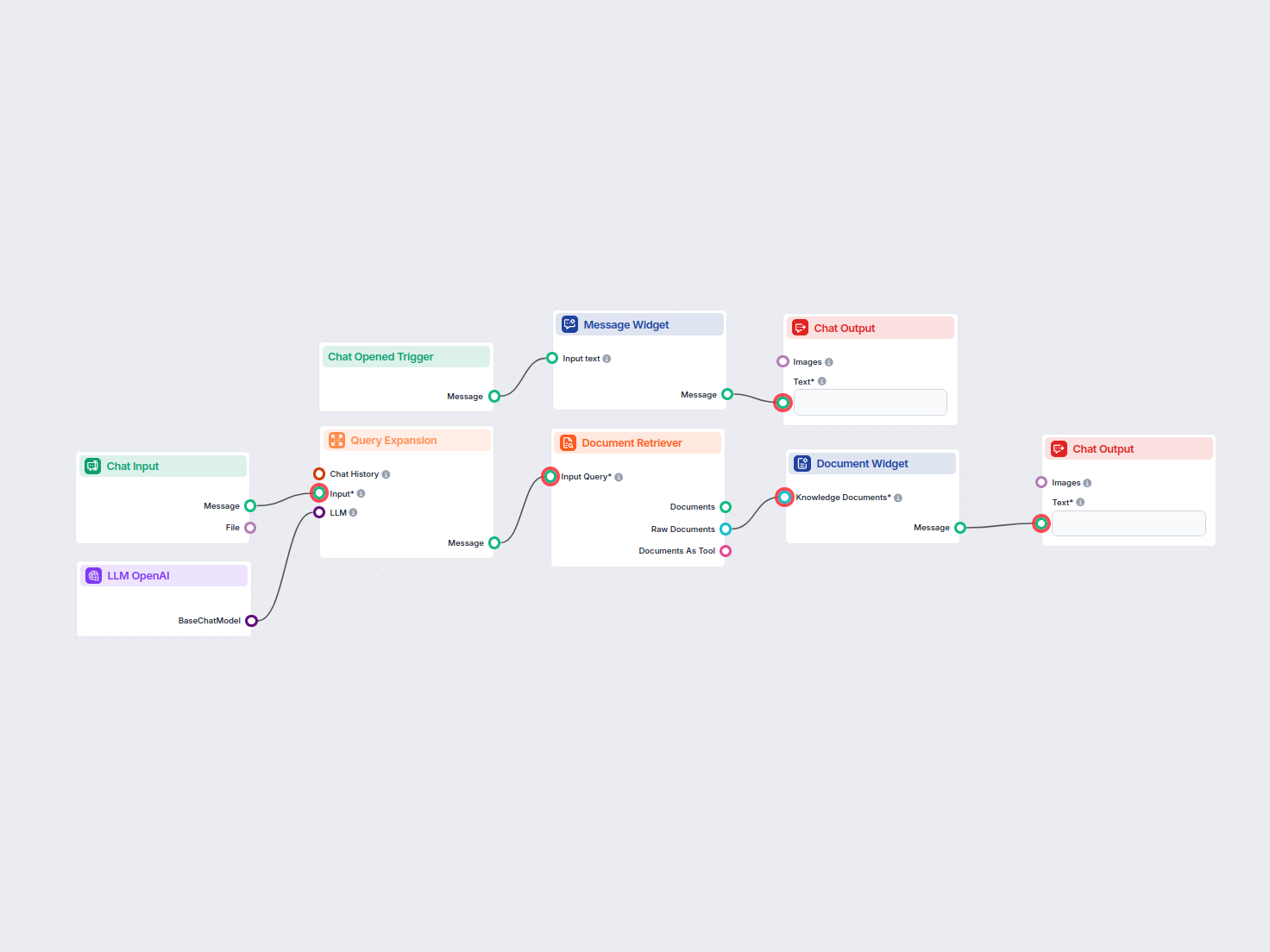

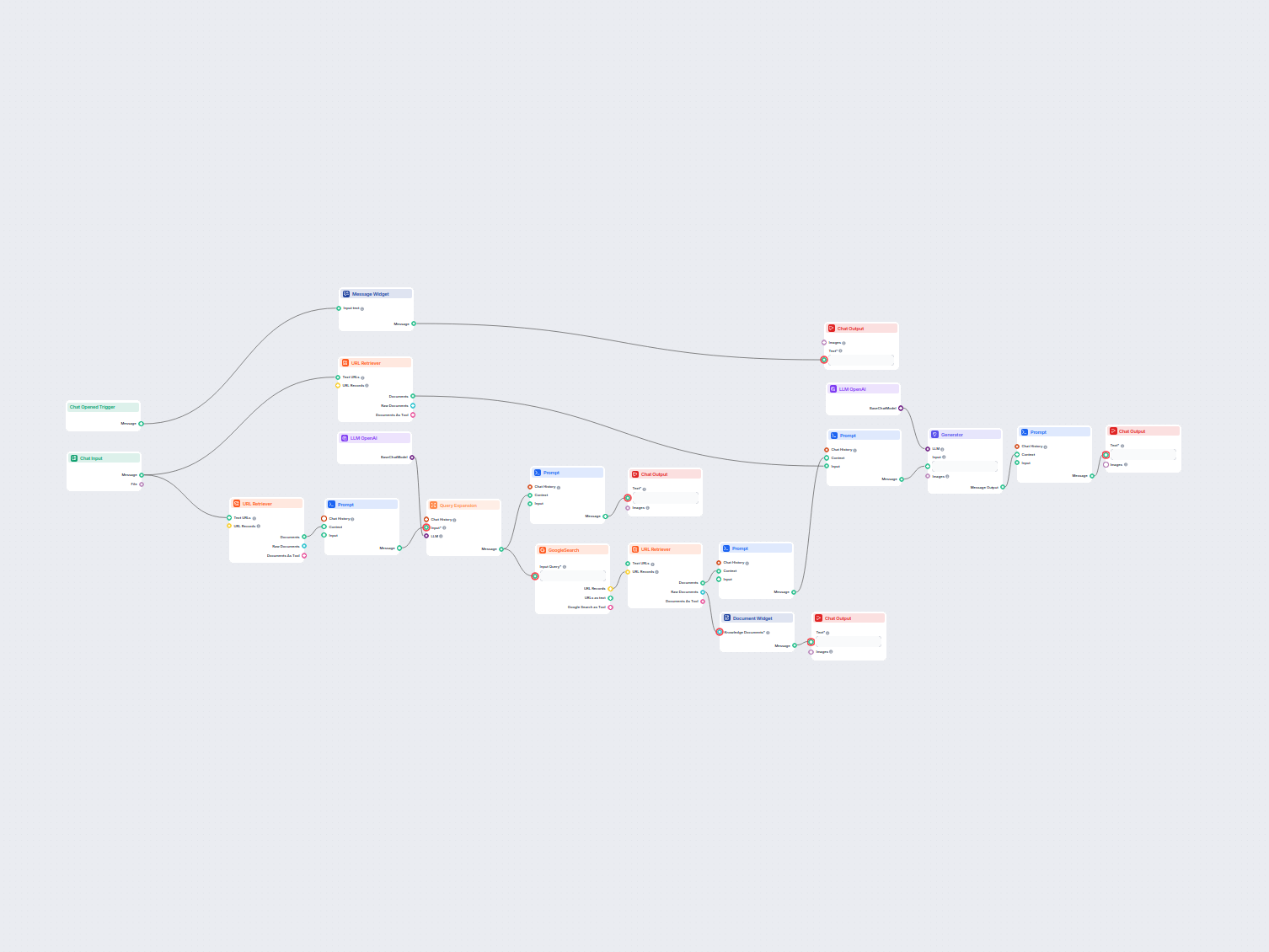

This allows you to create all sorts of tools. Let’s see the component in action. Here’s a simple Agent-powered chatbot Flow using o1 Preview to generate responses. You can think of it as a basic ChatGPT chatbot.

This simple Chatbot Flow includes:

To help you get started quickly, we have prepared several example flow templates that demonstrate how to use the LLM OpenAI component effectively. These templates showcase different use cases and best practices, making it easier for you to understand and implement the component in your own projects.

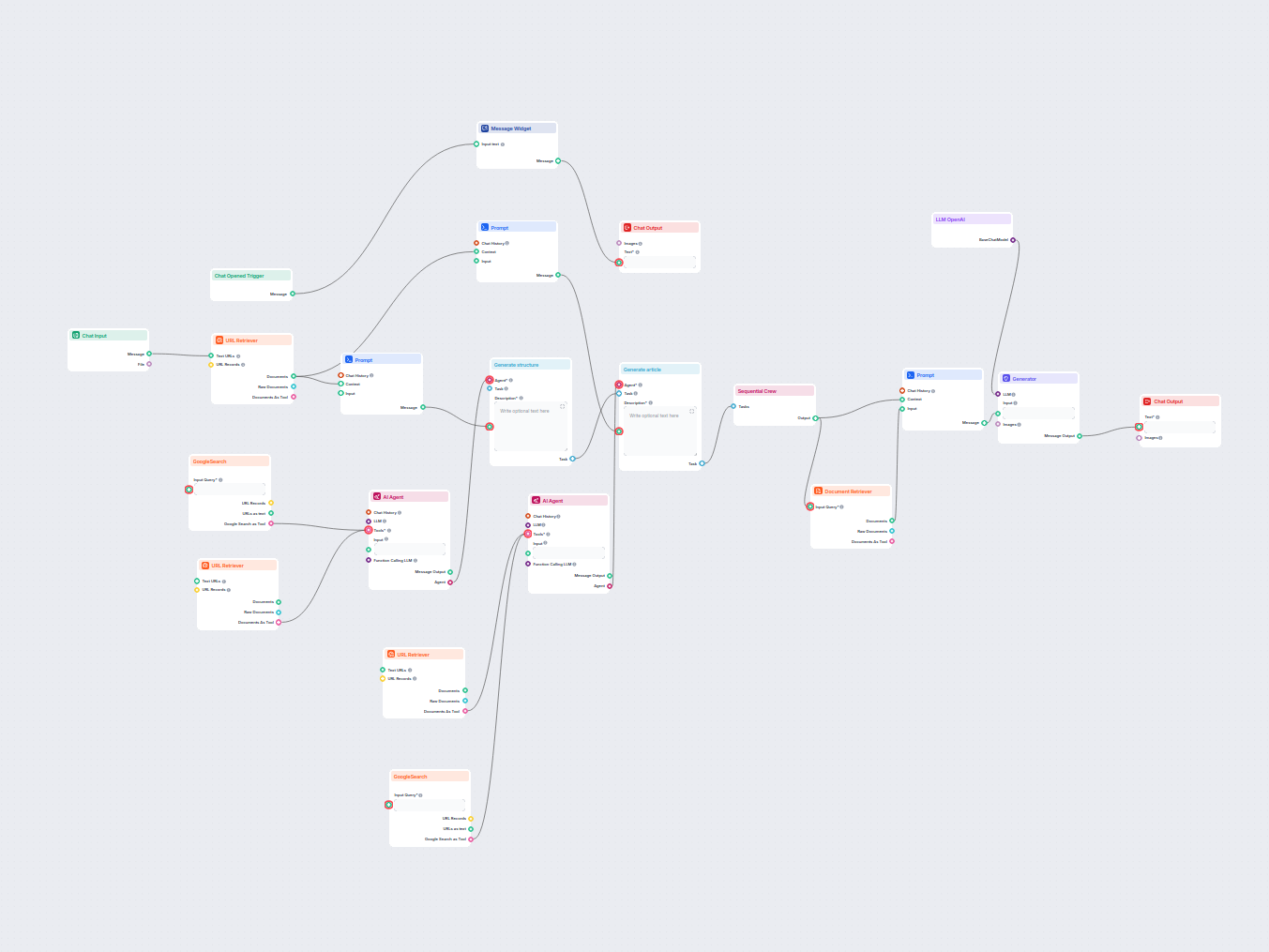

Generate comprehensive, SEO-optimized blog posts with advanced structure and high word count using multiple AI agents. The workflow includes automated research,...

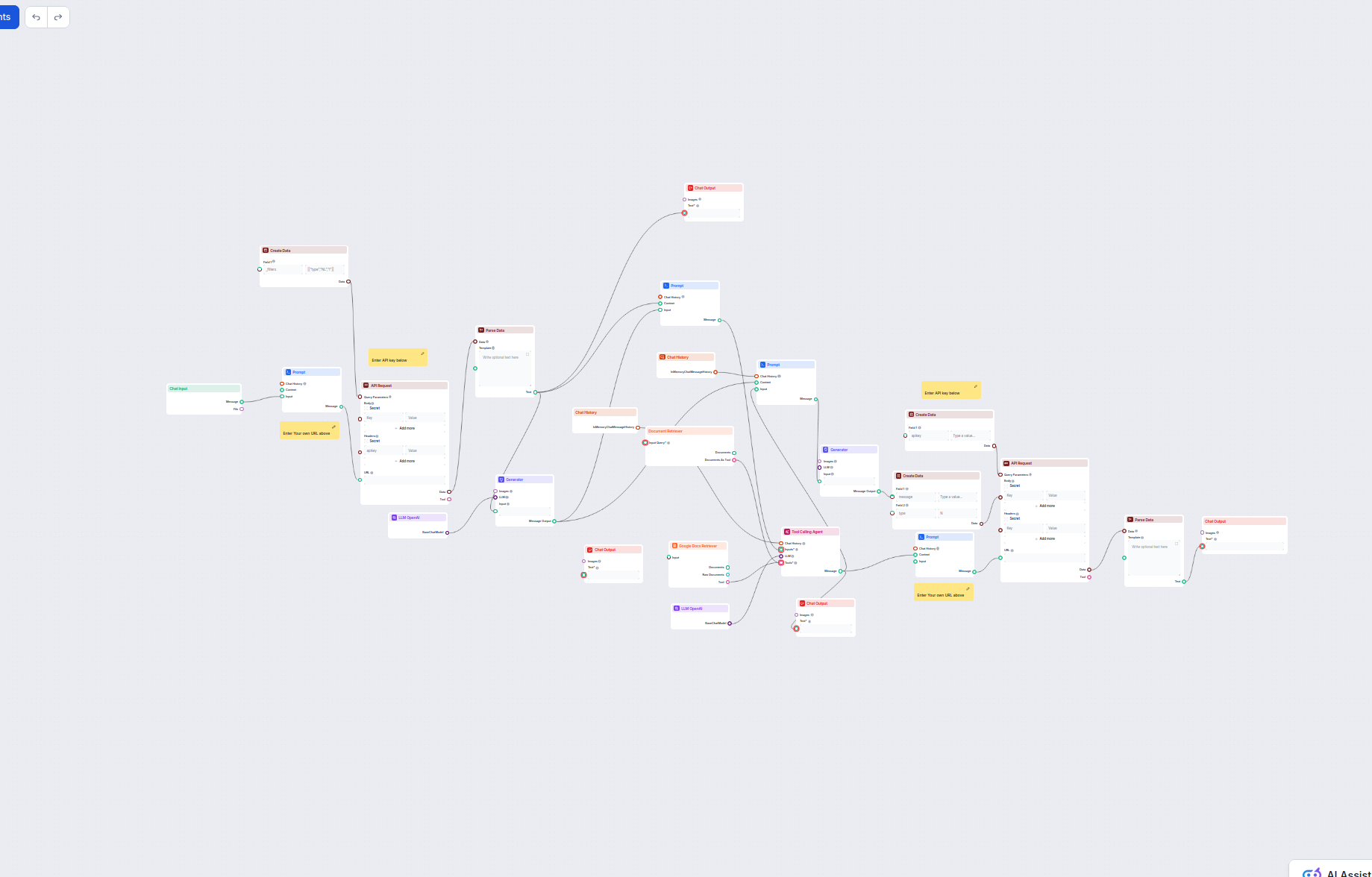

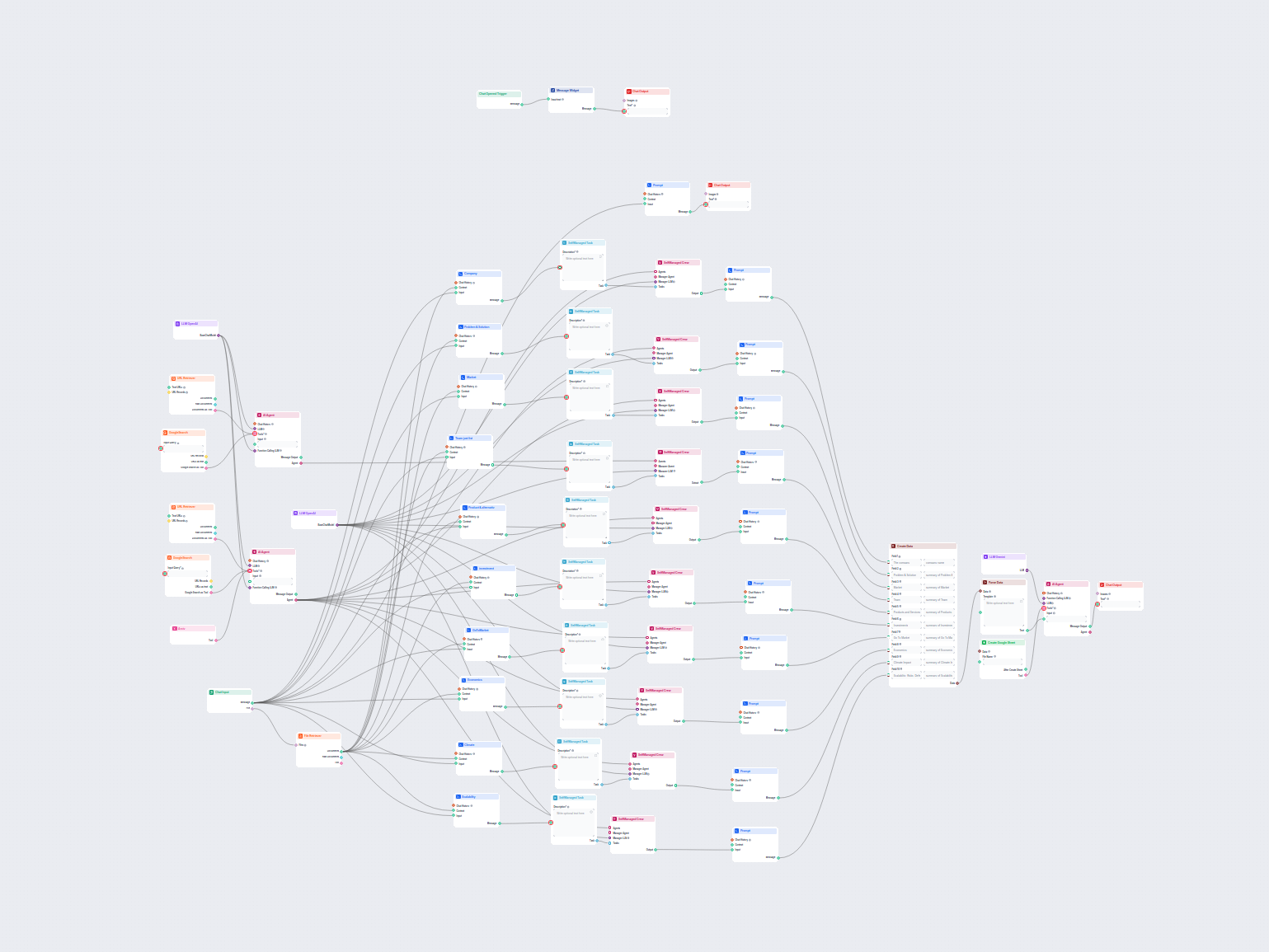

Comprehensive AI-driven workflow for company analysis and market research. Automatically gathers and analyzes data on company background, market position, produ...

This AI-powered workflow delivers a comprehensive, data-driven company analysis. It gathers information on company background, market landscape, team, products,...

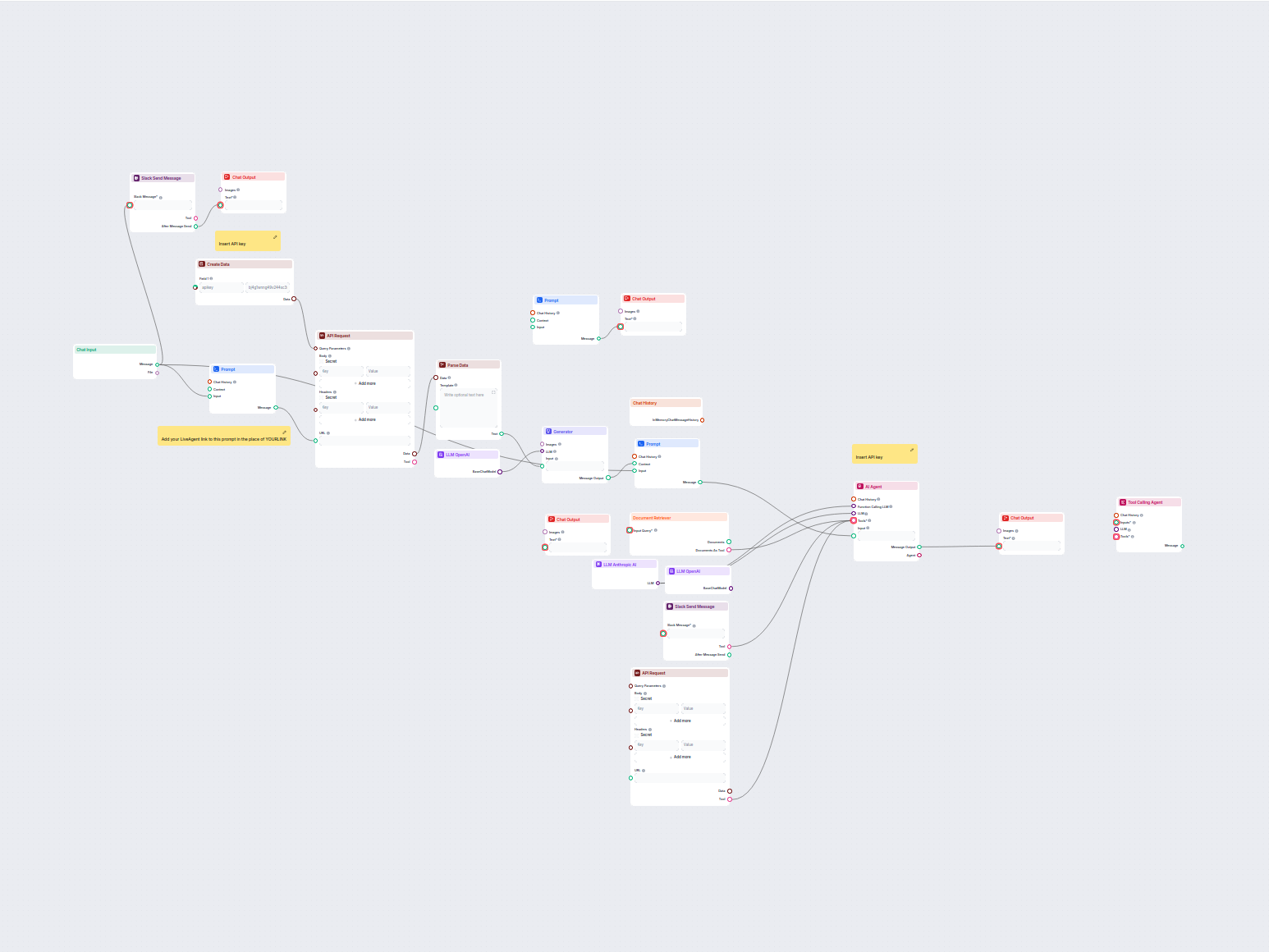

This workflow automates customer support for your company by integrating LiveAgent conversations, extracting relevant conversation data, generating responses us...

This AI-powered workflow automates customer support by combining internal knowledge base search, Google Docs knowledge retrieval, API integration, and advanced ...

This AI-powered workflow automates customer support by connecting user queries to company knowledge sources, external APIs (such as LiveAgent), and a language m...

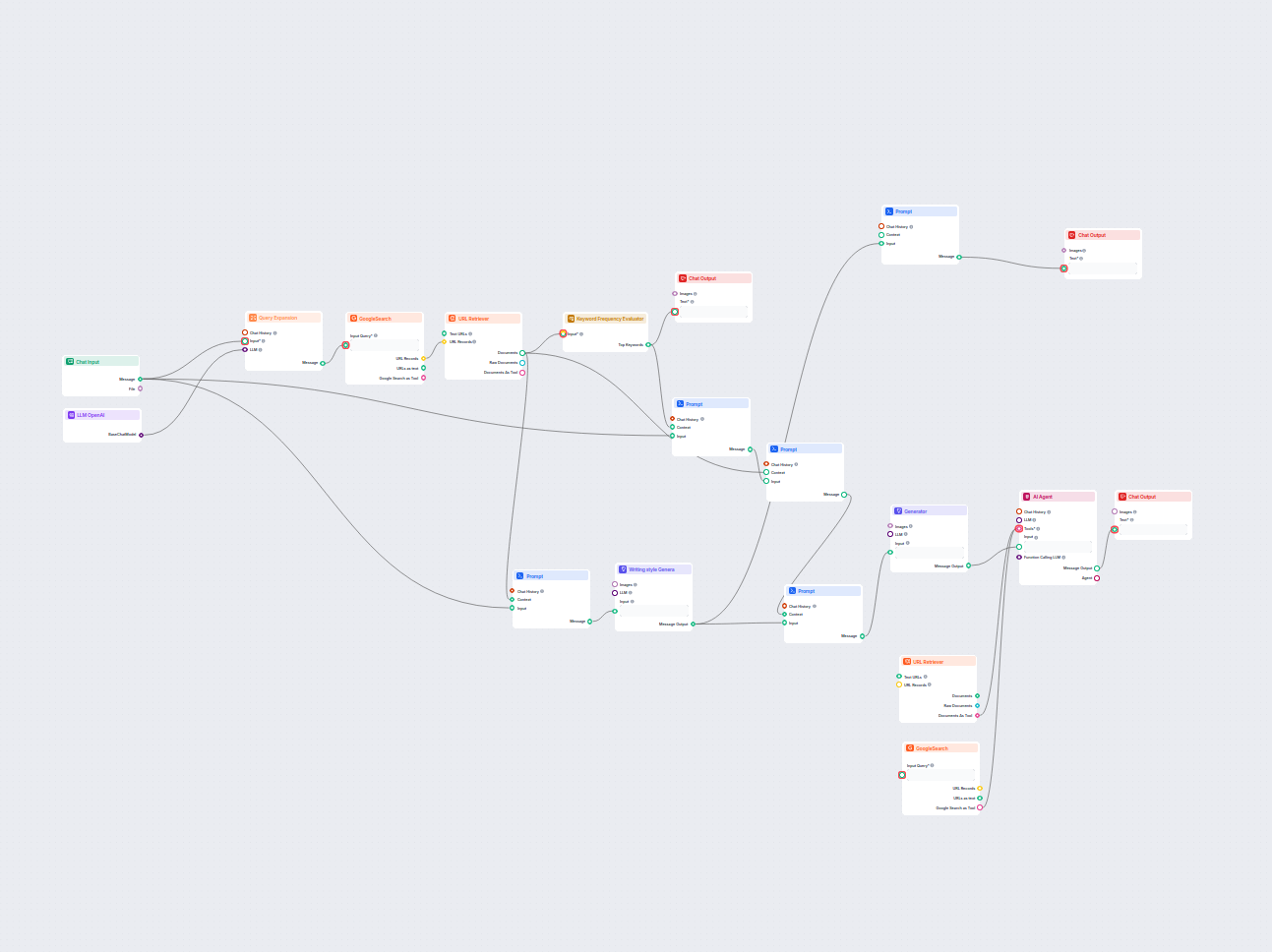

Generate in-depth, SEO-optimized glossary articles by leveraging AI and real-time web research. This flow analyzes the top-ranking content and writing styles, e...

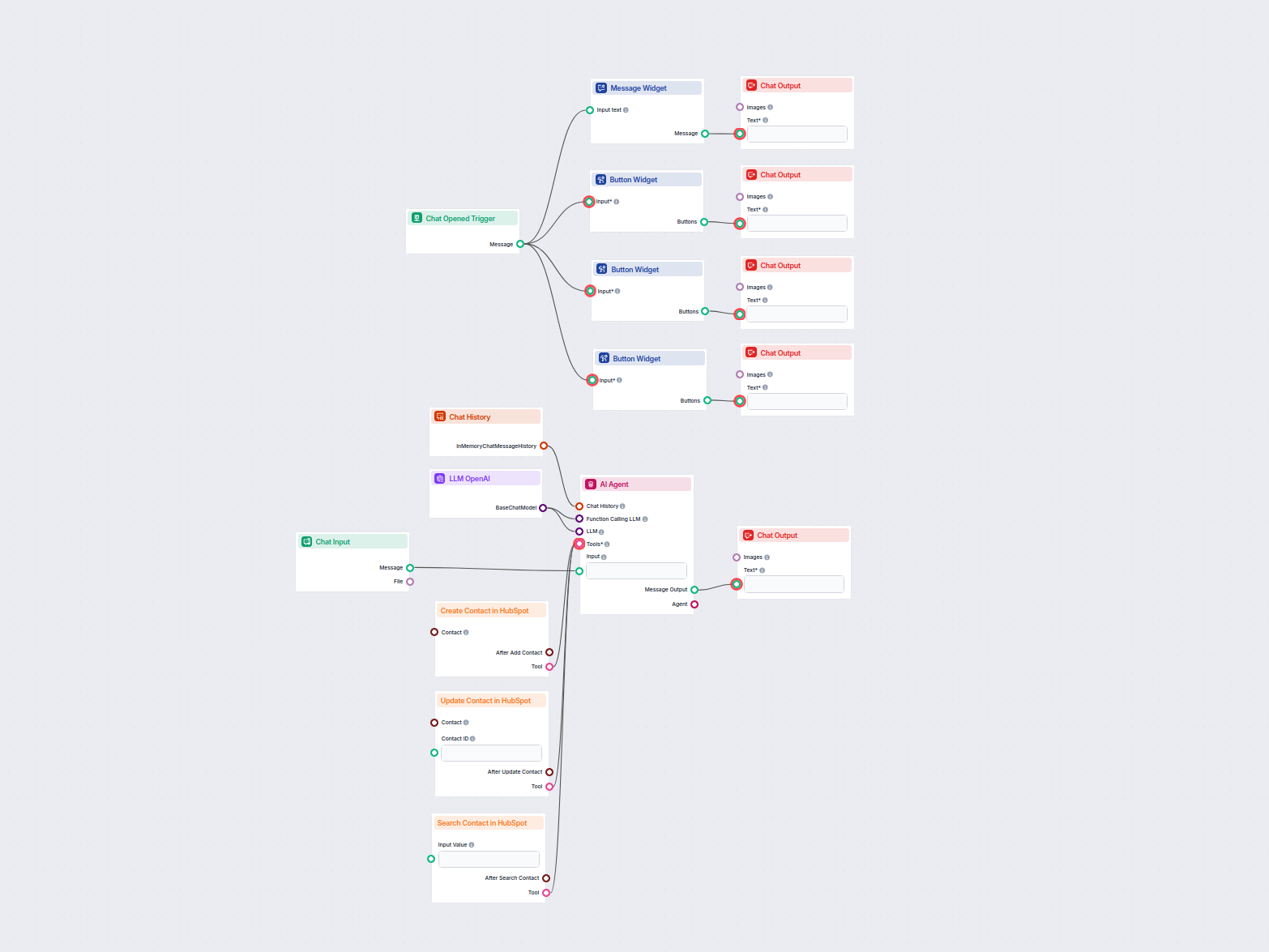

This AI-powered workflow automates contact management in HubSpot CRM. Users can easily search, create, or update contacts through a chat interface powered by an...

Generate unique, SEO-optimized web page titles using AI and live Google search data. Enter your target keywords and receive a high-ranking title suggestion tail...

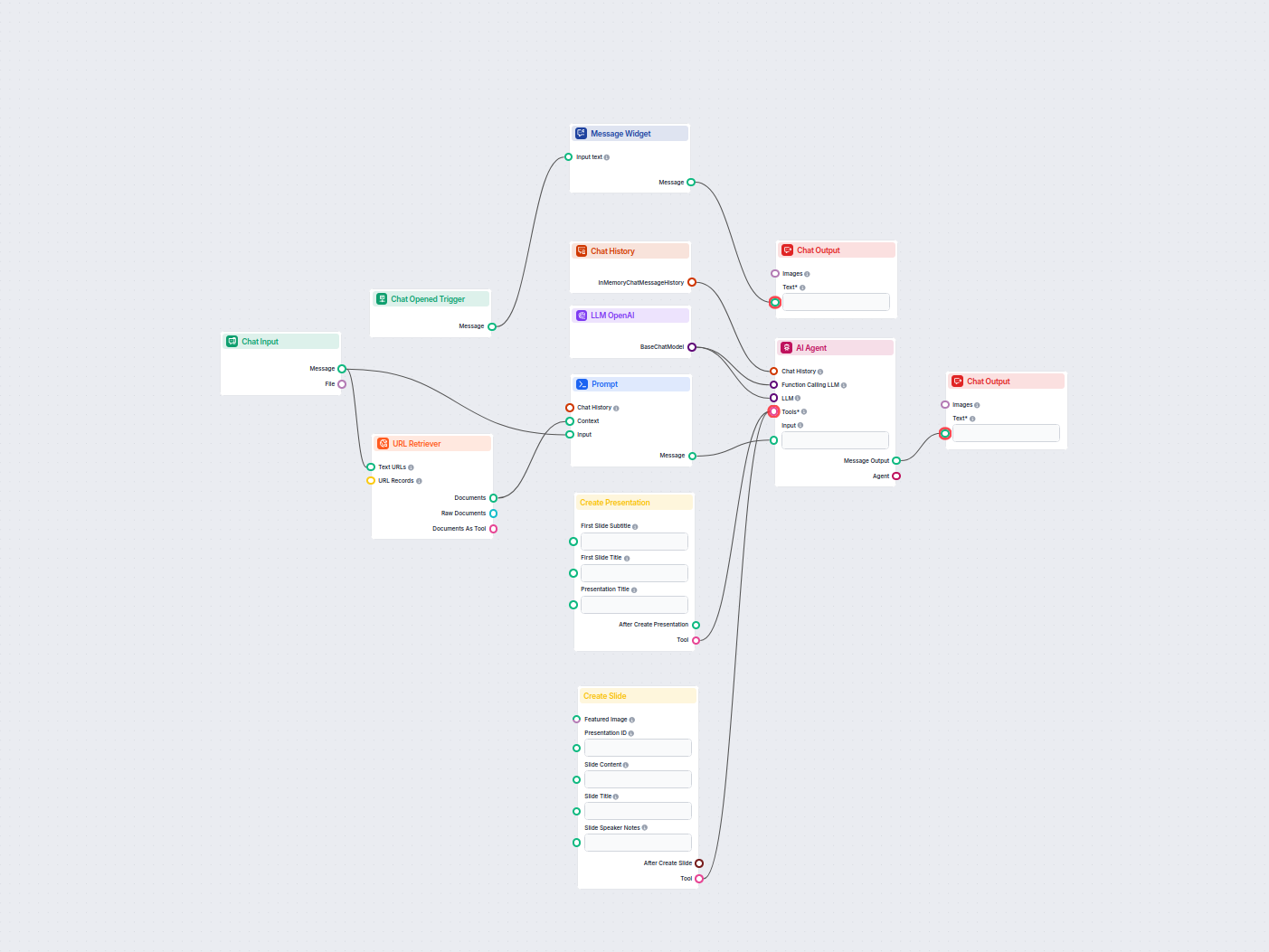

Automatically generate professional pitch decks in Google Slides using AI and live web research. This workflow gathers user input, searches Google for relevant ...

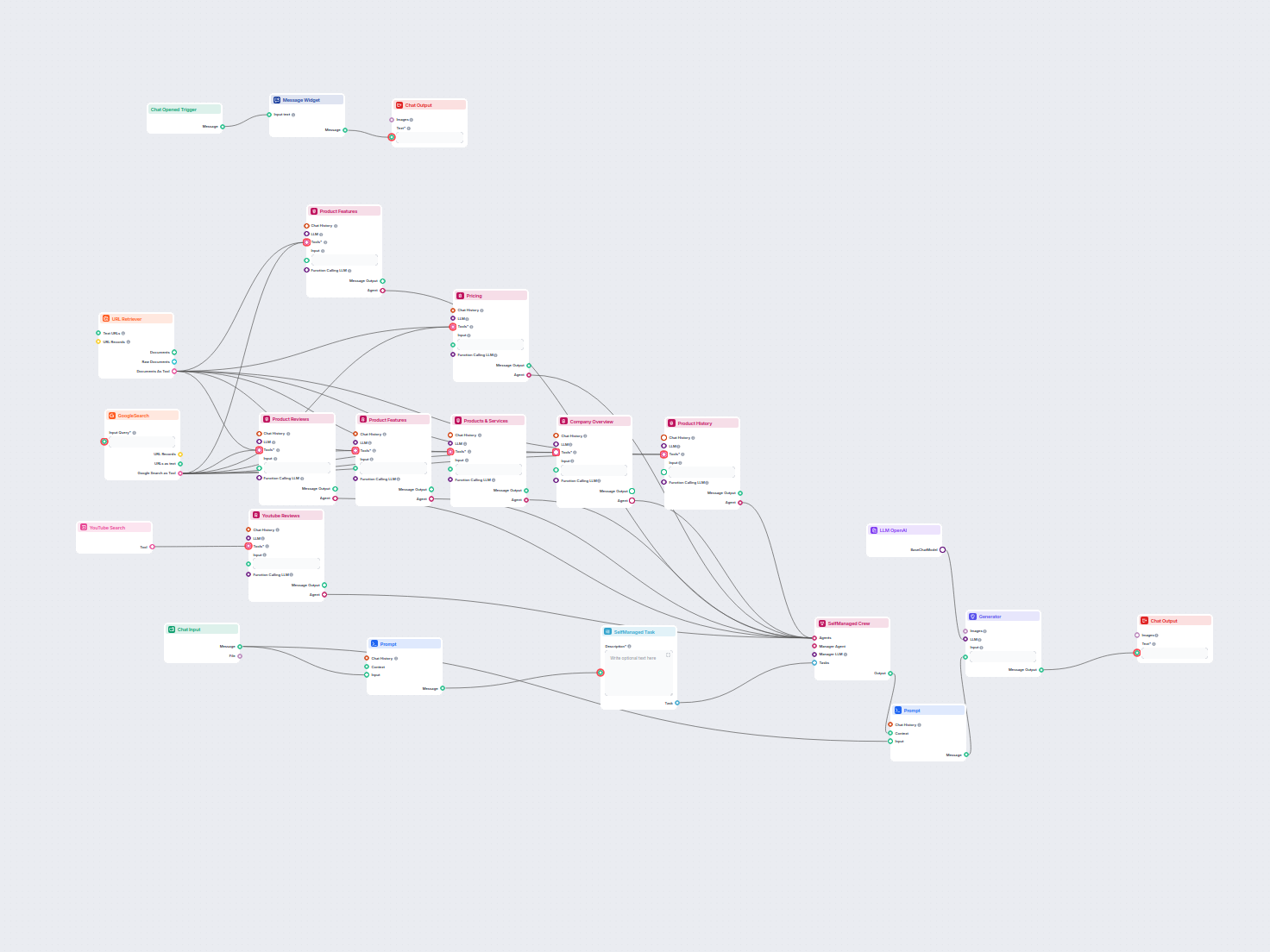

Generate comprehensive product analyses using AI agents that gather and summarize product information, pricing, features, reviews, alternatives, and more from p...

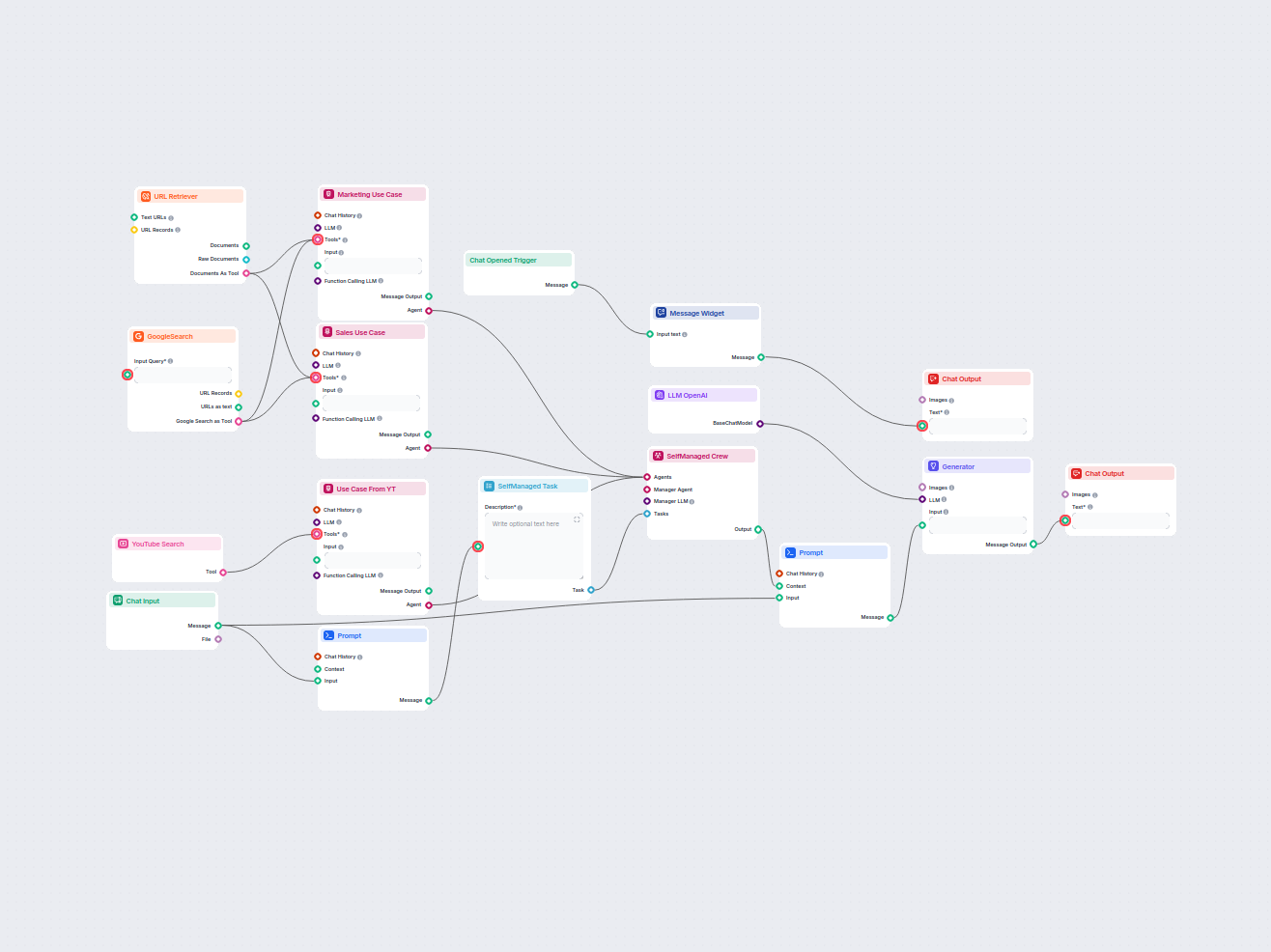

Generate comprehensive, AI-driven reports on software product use cases for marketing and sales. This workflow researches the product across web sources and You...

Generate comprehensive, SEO-optimized product review articles for software tools, including detailed features, pricing, user reviews, resources, and more, with ...

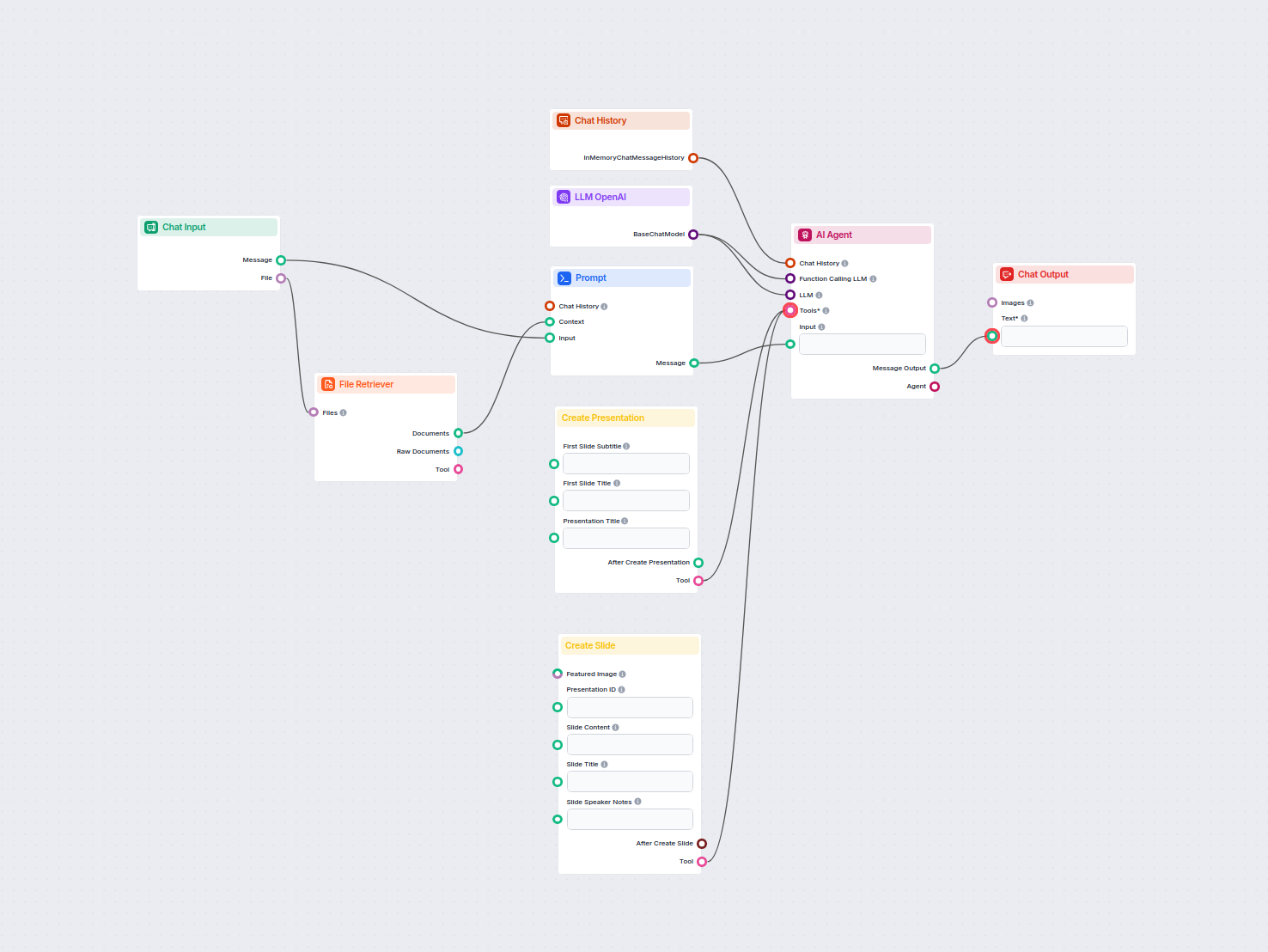

Automate the creation of professional Google Slide presentations from any uploaded document using AI. This workflow extracts document content, generates structu...

This AI workflow analyzes any company in depth by researching public data and documents, covering market, team, products, investments, and more. It synthesizes ...

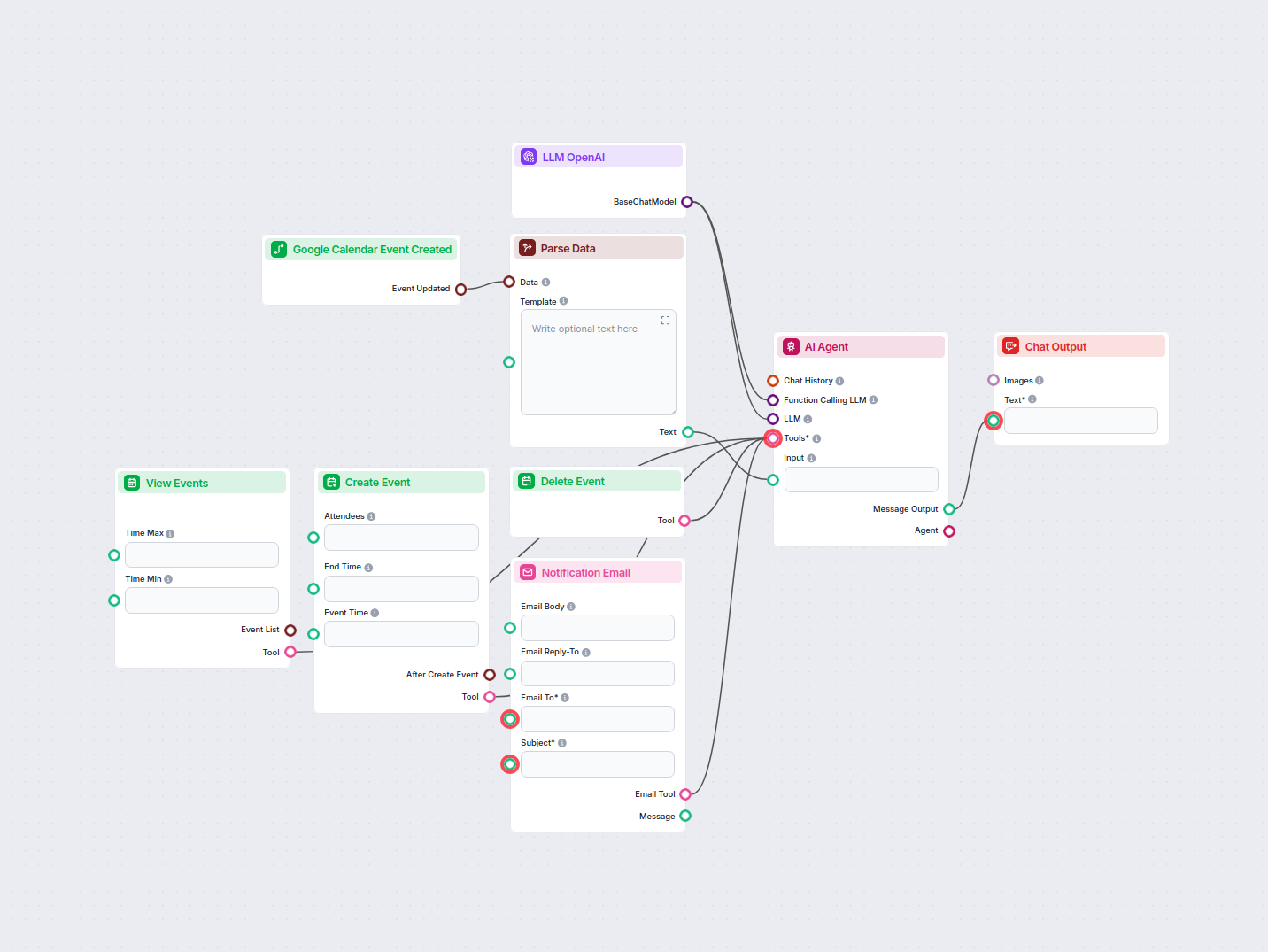

Automate and streamline vacation request approvals in Google Calendar using an AI agent. This workflow detects new vacation requests, evaluates them against com...

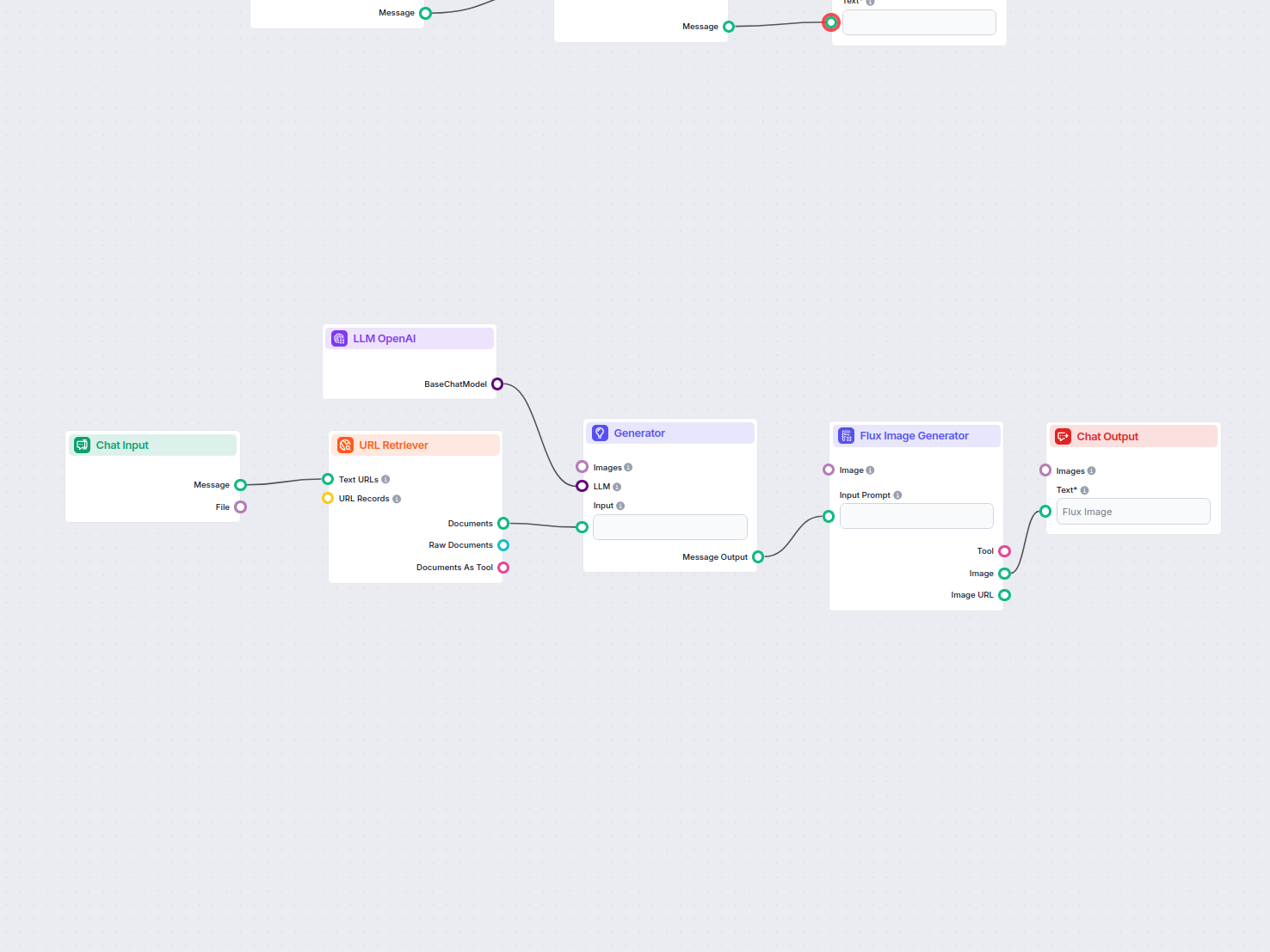

Automatically generates an engaging feature image for any blog post by analyzing its content. Just provide the blog URL, and the workflow uses AI to understand ...

Transform technical documentation from a URL into a compelling, SEO-optimized article for your website. This flow analyzes top-ranking competitor content, gener...

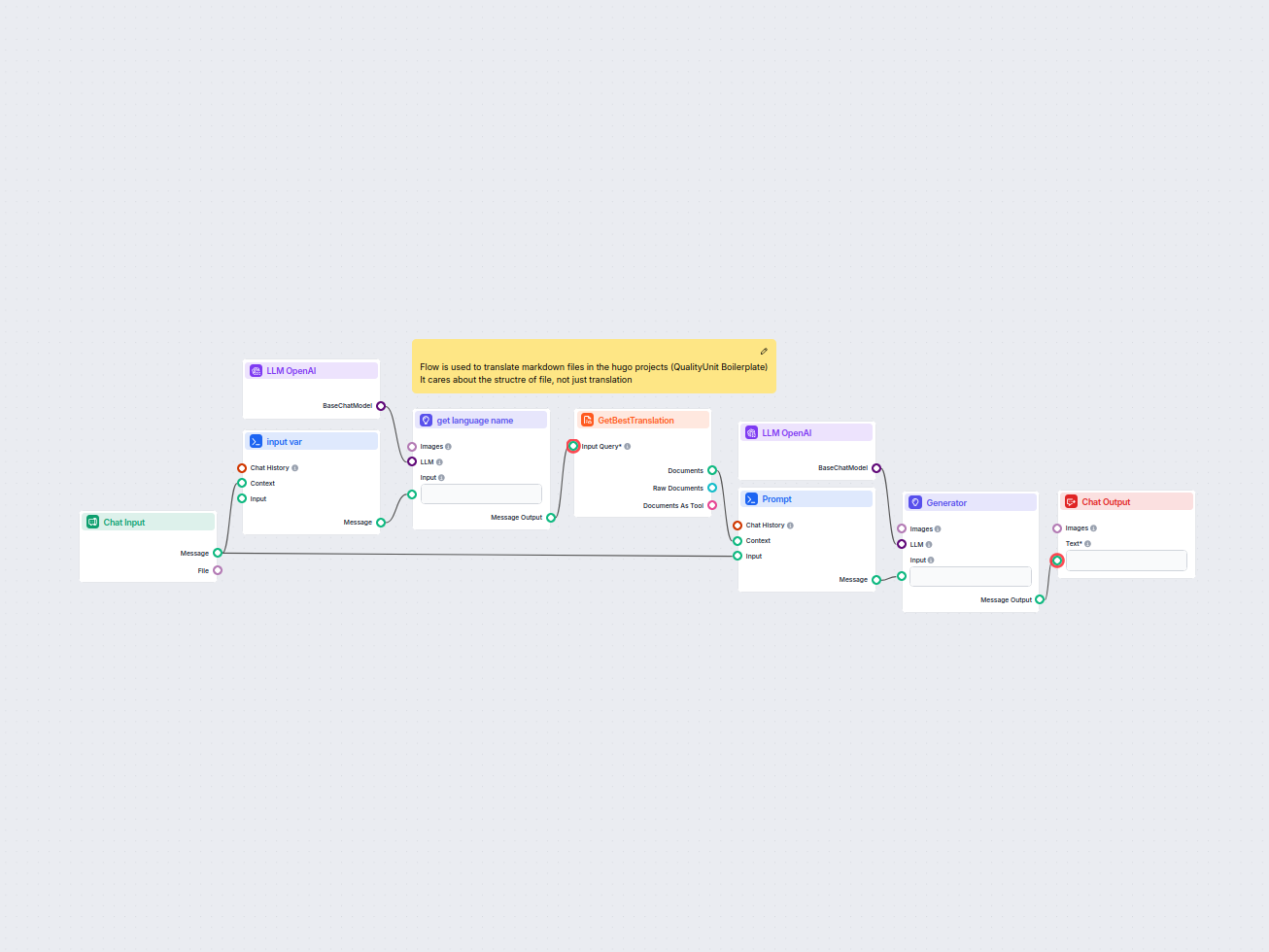

This workflow streamlines the translation of HUGO markdown files into target languages while preserving file structure and formatting. Leveraging AI language mo...

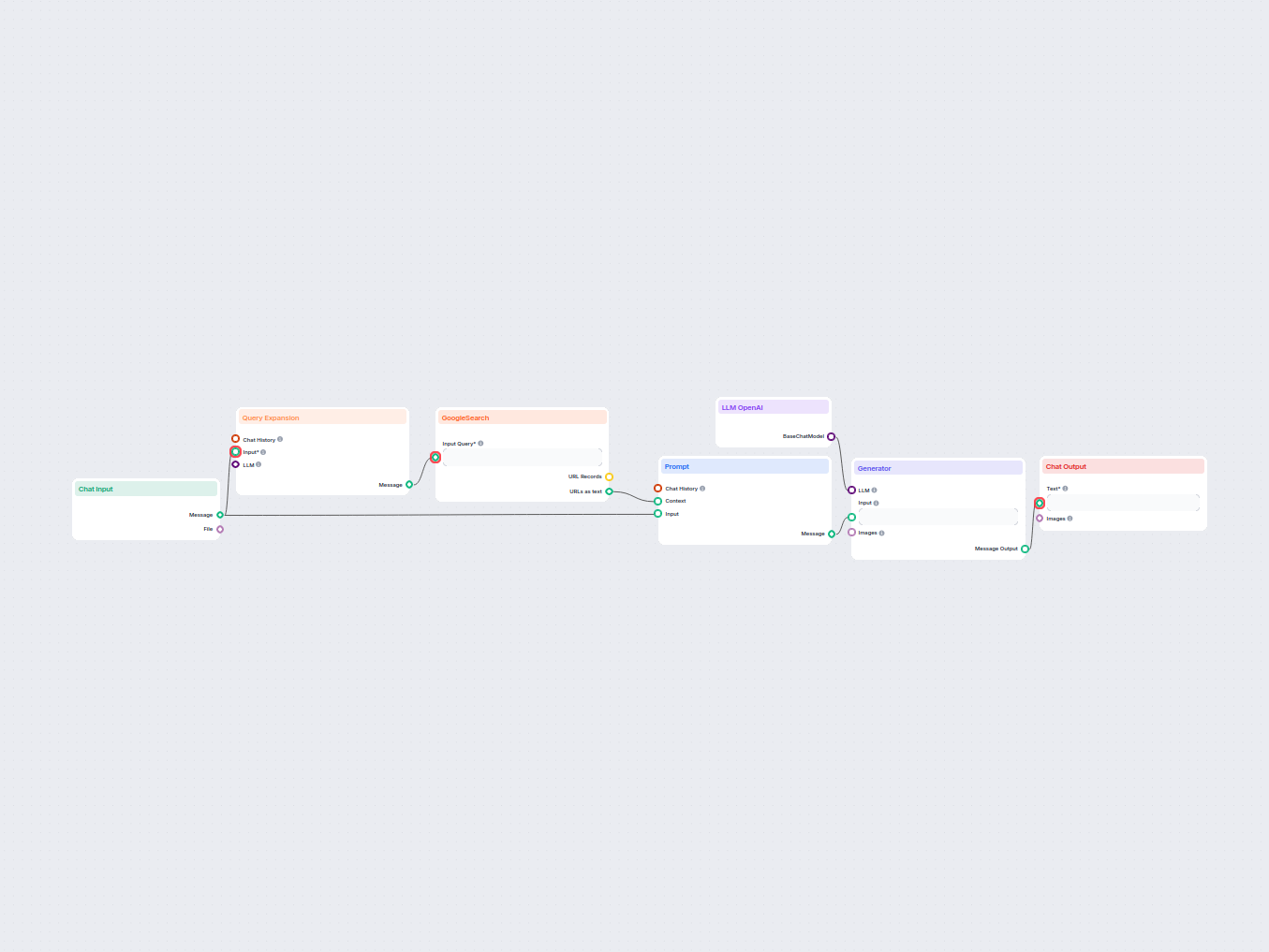

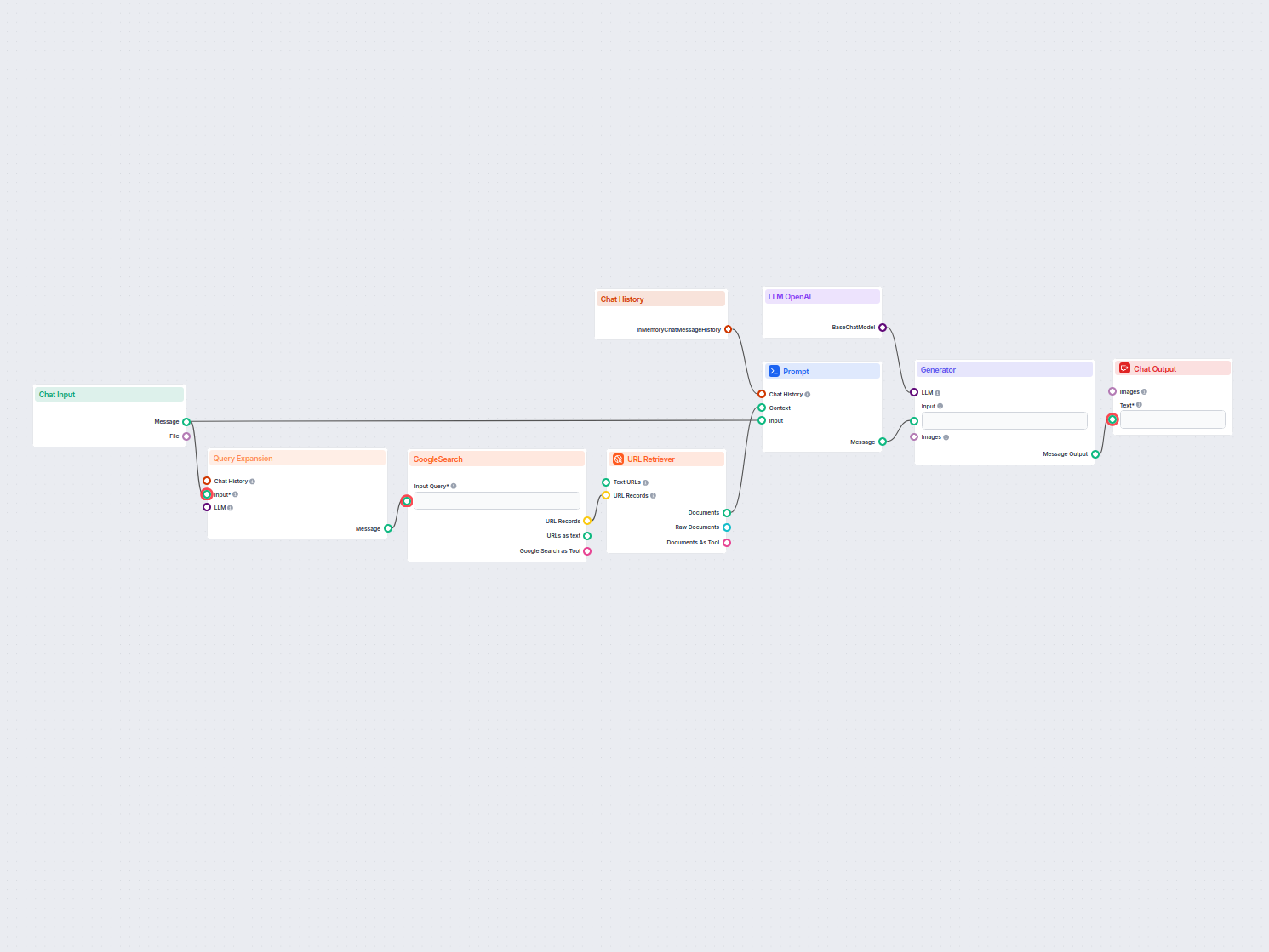

A real-time chatbot that uses Google Search restricted to your own domain, retrieves relevant web content, and leverages OpenAI LLM to answer user queries with ...

Easily search and retrieve information from private knowledgebase documents using semantic search powered by AI. The flow expands user queries, searches across ...

This AI-powered workflow analyzes the content structure of your web page, compares it with top-ranking competitor pages, and provides tailored recommendations o...

This AI-powered workflow helps Shopify merchants analyze competitor products, research market trends, and generate optimized pricing strategies. By combining Sh...

Turn any YouTube video into a professional Google Slides presentation in minutes. This AI-powered workflow extracts content from a provided YouTube URL, analyze...

Large language models (LLMs) are a type of AI model trained to process, understand, and generate human-like text. A common example is ChatGPT, which can provide elaborate responses to almost any query.

No, the LLM component is only a representation of the AI model. It changes the model the Generator will use. The default LLM in the Generator is ChatGPT-4o.

At the moment, only the OpenAI component is available. We plan to add more in the future.

No, Flows are versatile and have many use cases that don't need an LLM. You add an LLM if you want to build a conversational chatbot that generates text answers freely.

Not really. The component only represents the model and creates rules for it to follow. It’s the generator component that connects it to the input and runs the query through the LLM to create output.
